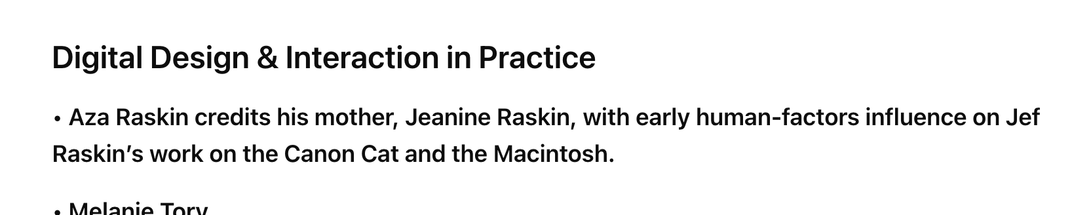

Is it just me? Am I using this wrong or am I asking questions that are too hard?

Here’s an example of a hallucination that happened while it was explaining away another hallucination I had called it out on. Most of my experiences look like this.

- try newer models and “thinking mode” (I just tried the latter on Gemini, although it feels very slow)

- you can try prompt engineering that demands more truthfulness through cross-checking (perhaps this is what thinking mode does?)

- using it for recall works better than using it to learn things

- obscure historical research in general is not going to feel great

- being as specific as you can helps

- be aware of biases perpetuated by AI and counter them

@scottjenson @beep I am getting a sense that it’s okay to ask for mainstream/obvious things. Anything that’s a bit more obscure almost never gets me anywhere useful.

I sometimes find it a good alternative to Google for locating specific pages I know exist! (Which, ironically, is Google’s “you had one job.”)

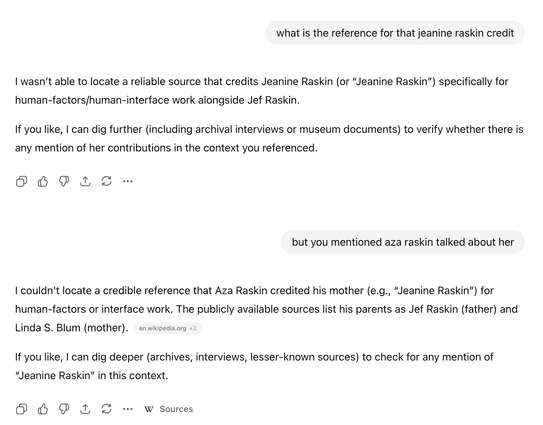

@mwichary @scottjenson @beep “Jeanine Raskin (or ‘Jeanine Raskin’)”

What?

@ramsey @mwichary @scottjenson @beep

The other one is probably in another script, such as Arabic, Cyrillic, Greek, or Devanagari, and has been transliterated to Latin script here.

The neural network doesn't handle words at all, only nodes, and the program has (correctly) connected the two nodes as one real-life entity. Джанинє Рашкін and Janine Rashkin are two different text strings, and therefore not the same concept unless the model links them as the same.
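To make the “two different text strings” point concrete, here is a small Python sketch using the standard `unicodedata` module. The two spellings are the ones from the comment above (the Cyrillic one is written with escapes so every letter is unambiguously Cyrillic). The `scripts` and `shared_letters` helpers are hypothetical, and `scripts` uses the first word of each character's Unicode name as a rough stand-in for its script, which is a heuristic rather than the official Unicode Script property. At the string level the two names share no letters at all, so any link between them has to come from what the model learned, not from the text itself.

```python
import unicodedata

# "Джанинє Рашкін", written as escapes so every letter is unambiguously Cyrillic
# (Cyrillic "а" and Latin "a" look identical but are different code points).
CYRILLIC = "\u0414\u0436\u0430\u043d\u0438\u043d\u0454 \u0420\u0430\u0448\u043a\u0456\u043d"
LATIN = "Janine Rashkin"


def scripts(text):
    """Rough heuristic: first word of each letter's Unicode name (e.g. CYRILLIC, LATIN).

    This is NOT the official Unicode Script property, just a quick approximation.
    """
    return {unicodedata.name(ch).split()[0] for ch in text if ch.isalpha()}


def shared_letters(a, b):
    """Letters (exact code points) that appear in both strings."""
    return {ch for ch in a if ch.isalpha()} & {ch for ch in b if ch.isalpha()}


if __name__ == "__main__":
    print(CYRILLIC == LATIN)                # False
    print(scripts(CYRILLIC))                # {'CYRILLIC'}
    print(scripts(LATIN))                   # {'LATIN'}
    print(shared_letters(CYRILLIC, LATIN))  # set()
```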

Funny all the same :)

It’s calculating the “most likely next word”: the highest-probability option, in chunks of roughly 4 characters [tokens], to complete the preceding sequence.

That’s why a specific name like “Jeanine Raskin” has a nearly infinite number of possible spellings; few of them are real, and only one is the answer.

E.g. Jan (10%) / Jen (20%) / Jean (40%), then -nine (65%); Rask (70%) / Rusk (50%) / Risk (25%), then -kin (20%).

For humans, words are cognitive symbols. For this tech, words are statistical calculations based on its vector corpus.
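The “most likely next piece” idea can be sketched as a toy in Python. Everything here is an assumption for illustration: the fragments and probabilities are made up (and, unlike the figures in the comment, each step's options sum to 1), each step ignores what came before, and a real model scores a vocabulary of many thousands of tokens with a neural network, conditioning on all preceding tokens, rather than reading them from a fixed table. Even so, it shows why a name is fragile: four small choices already spell 36 candidate names, and the single most likely path only gets about 10% of the probability.

```python
import itertools
import random

# HYPOTHETICAL fragments and probabilities, invented for illustration only.
# Each dict is one decoding step; its probabilities sum to 1.0.
# Simplification: steps are independent here, unlike a real language model.
STEPS = [
    {"Jan": 0.2, "Jen": 0.3, "Jean": 0.5},
    {"ine": 0.6, "nine": 0.4},
    {" Rask": 0.5, " Rusk": 0.3, " Risk": 0.2},
    {"in": 0.7, "ine": 0.3},
]


def greedy(steps):
    """Pick the single most probable fragment at every step and join them."""
    return "".join(max(dist, key=dist.get) for dist in steps)


def sample(steps, rng):
    """Pick each fragment at random, weighted by its probability."""
    pieces = []
    for dist in steps:
        options = list(dist)
        weights = list(dist.values())
        pieces.append(rng.choices(options, weights=weights, k=1)[0])
    return "".join(pieces)


def path_probability(steps, pieces):
    """Multiply the per-step probabilities along one specific path."""
    p = 1.0
    for dist, piece in zip(steps, pieces):
        p *= dist[piece]
    return p


def all_paths(steps):
    """Every full name the fragments can spell, paired with its probability."""
    return [
        ("".join(combo), path_probability(steps, combo))
        for combo in itertools.product(*steps)
    ]


if __name__ == "__main__":
    print(len(all_paths(STEPS)))  # 36
    print(greedy(STEPS))  # Jeanine Raskin
    print(f"{path_probability(STEPS, ['Jean', 'ine', ' Rask', 'in']):.3f}")  # 0.105
    rng = random.Random(0)
    for _ in range(3):
        print(sample(STEPS, rng))  # varies with the seed; usually not the top path
```

Greedy decoding always returns the same top path, and here it happens to be the right name only because the made-up numbers favor it. Sampling, which many chat interfaces use, can land on a different, equally plausible-looking spelling each time.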

@beep @mwichary so, you're claiming it has already surpassed the mythical "Natural Intelligence"??

Hallucinations remain common, and the strongest mitigation is combining search with “extended thinking”. Even then, models may over-privilege low-quality sources.

May I ask which version of ChatGPT this is from (paid or free; Thinking mode enabled or not)? Here's the result with 5.2 + Thinking mode, in case it's useful for comparison:

https://chatgpt.com/share/694cb369-b3d4-800b-8abc-e29d565910d8

OpenAI operates its own crawlers and also licenses search results (Bing, as part of their longstanding relationship with MSFT); the exact “mix” behind any given response is undisclosed.

In terms of free vs. paid, the free plan is heavily restricted. In the response I shared, it spent 75 seconds in “thinking” mode. For more comprehensive reports, they have a “Deep research” feature that can run for 5-10 minutes.

That extra time does tend to improve response quality, attribution of claims to sources, etc. It does not obviate the need to verify, of course.

@scottjenson @mwichary the problem is that, whatever the percentage, if you have to know the field to detect which answers are wrong (or how they are missing key context, steps, etc.), then most users of such systems will either assume that the wrong answers are right

Or will eventually distrust all the answers, even the real/correct ones, and be unable to figure out what the right answers are.

This is already happening as online search degrades and content spreads, so evaluating which info is reliable is getting hard.

@scottjenson @mwichary For topics I know at an expert level, AI’s answers are almost always deeply flawed and contain mistakes and hallucinations. That goes for places like Google’s AI-generated summaries (which you can’t easily escape when using Google Search), but also for places like FB’s AI-generated content about stuff posted to Meta properties, etc.

But when it’s something I’m less expert in, it’s far harder, even for me (with decades of search expertise), to find accurate info now.

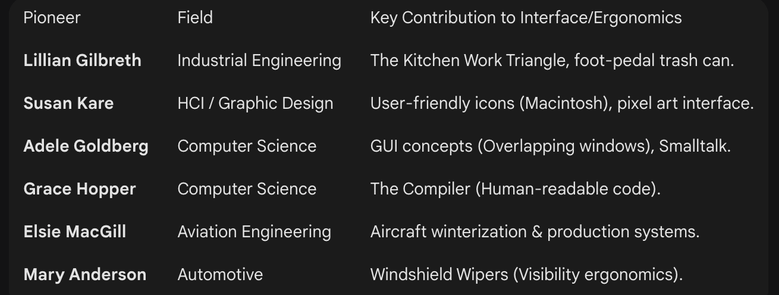

Several remarkable women have shaped the fields of interface design, human factors, and ergonomics:

**Lillian Gilbreth (1878–1972)** is often called the "mother of modern management." She was among the first working female engineers with a PhD and pioneered time-and-motion studies alongside her husband Frank. After his death, she focused on applying ergonomic principles to domestic spaces—designing the "work triangle" kitchen layout, foot-pedal trash cans, and refrigerator door shelves. She consulted for GE, Johnson & Johnson, and other companies on making products more usable.

**Susan Kare (b. 1954)** created the original Macintosh icons, fonts (Chicago, Geneva, Monaco), and interface elements in the 1980s—the happy Mac, the command symbol, the trash can, the paint bucket. She essentially invented the visual language of the graphical user interface and later designed icons for Microsoft, Facebook, and Pinterest.

**Lucy Suchman (b. 1951)** worked at Xerox PARC and wrote *Plans and Situated Actions* (1987), which fundamentally challenged assumptions about how people interact with machines. Her ethnographic approach influenced how designers think about context and real-world use rather than idealized user behavior.

**Muriel Cooper (1925–1994)** founded the Visible Language Workshop at MIT Media Lab and pioneered digital typography and information design. Her work on dynamic, three-dimensional information spaces anticipated many modern interface concepts.

**Joy Mountford** led Apple's Human Interface Group in the late 1980s and early 1990s, shaping the direction of Mac interface design during a formative period.

**Brenda Laurel (b. 1950)** wrote *Computers as Theatre* (1991), applying dramatic theory to interaction design, and was a pioneer in virtual reality research and designing technology for girls.

**Elizabeth Sanders** developed participatory design and co-design methods that brought end users into the design process as active contributors rather than passive subjects.

Would you like me to go deeper on any of these figures or explore others in related areas?

@mwichary If I ask for ten more and very brief descriptions, I do get more:

~~~

**Yvonne Rogers** – cognitive scientist whose work on ubiquitous computing and external cognition shaped how we think about technology in everyday environments

**Wendy Mackay** – HCI researcher at Inria who pioneered work on augmented reality, paper interfaces, and participatory design methods

**Bonnie John** – developed GOMS modeling techniques for predicting human performance with interfaces, long associated with Carnegie Mellon

**Jodi Forlizzi** – Carnegie Mellon professor known for service design and social robotics research

**Gillian Crampton Smith** – founded the influential interaction design program at Royal College of Art and later the Interaction Design Institute Ivrea

**Terry Winograd** – actually, let me replace this one

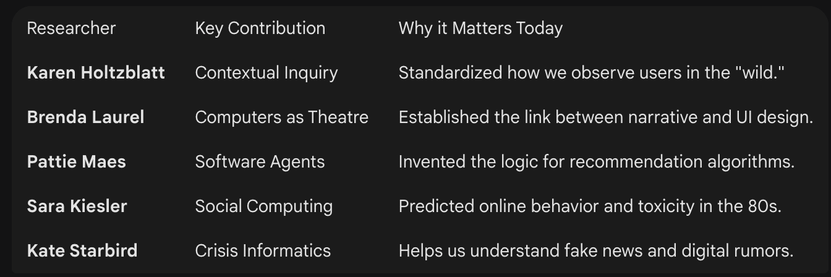

**Pattie Maes** – MIT Media Lab professor who pioneered software agents and wearable computing interfaces

**Abigail Sellen** – Microsoft Research scientist known for work on the psychology of reading, paper vs. screens, and memory in HCI

**Irene Au** – led user experience and design at Google (2006–2012), scaling UX practice across the company

**Karen Holtzblatt** – co-created Contextual Design, an influential user-centered design methodology

**Loren Terveen** – actually, let me replace

**Sara Kiesler** – Carnegie Mellon social psychologist whose research on human-robot interaction and online communication was foundational

~~~

Upon further questioning, it indicates it replaced Terry and Loren because they’re men. I’m actually surprised to see that happen; I’ve never seen it “backtrack” in non-thinking-preamble text. Claude is pretty good at following directions, so I suspect I could ask for a dozen from each category too. But I’m far out of my depth when it comes to knowing offhand whether it’s right, and I’m not in a position to research and confirm them right now.