"AI Safety" is so bizarre.

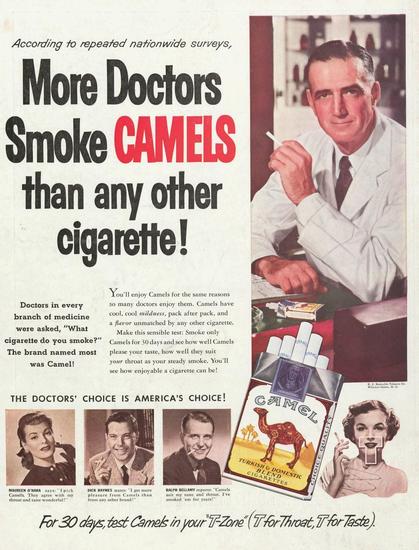

It's as if cigarette companies had themselves sounded the alarm about the dangers of smoking.

And then decided to make it their core mission to make smoking safe, insisting on steering public discourse towards the dangers of smoking.

And to drive the point home they started releasing transparent progress reports showing how nothing they try seems to help, wondering out loud whether perhaps these dangers are actually *inherent* to smoking.

And then everyone just shrugs.