Without fail, after using Screen Sharing with my MacBook Pro, I can't use that MacBook Pro again without restarting it. The screen just stays black forever, no matter whether I close and open the lid, type on the keyboard, whatever. It's as if the machine thinks it's still screen sharing or something. This happens even if I *properly end screen sharing* on the other end. Anyone else dealt with this? It's super annoying since my workflow relies on Screen Sharing so often.

The Mac Pro, like the cicada, has one of the most fascinating life cycles in all of tech. It spends most of its life hibernating underground without an update, often mistaken for dead by trained pundits. But like magic, every 5 years it awakens, creating an inescapable buzzing throughout the jungles of Silicon Valley. In a breathtaking display, it sheds its exoskeleton to reveal a completely new carapace. Blink and you'll miss it, though, as it then burrows back down to begin the cycle anew.

Looking back, it was crazy that parents in the 90s let their kids just roam around all day knowing full well that Donald Trump was loose and could snatch them at any moment.

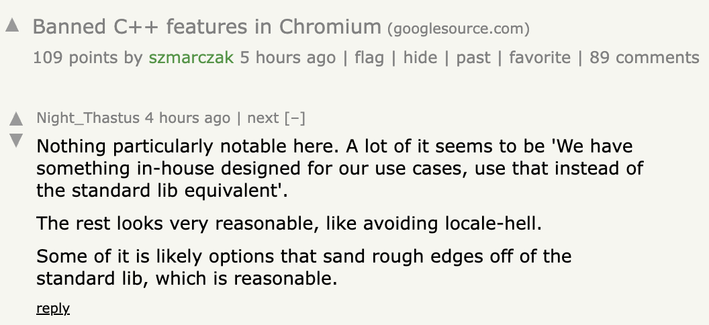

Nothing notable here.

Very reasonable.

Very reasonable to have a list of banned features longer than the spec of most languages.

Trying to use drag-and-drop feels like being a medieval peasant looking at the ruins of the Roman aqueducts, incapable of doing what they were built to do, instead only serving as a persistent reminder of a more technologically advanced past.

Like the aqueducts, it's moved past the state where its disrepair is even noteworthy. Most people have only ever known it like this. They don't ask for it to be fixed, because to them the crumbling walls aren't a "condition," they're just *what it is*.

One of the places AI has arguably been most widely deployed is law enforcement, yet no one has pointed to this as evidence of a coming “end to police jobs” like they do for all other fields. What a fascinating discrepancy.

Mar-a-Lago Face is the Liquid Glass of cosmetic surgery.

I always figured that MongoDB was nothing but exploits that, when put together, exhibited the emergent behavior of roughly (and I do want to emphasize *roughly*) approximating a database. It's to software what a retrovirus is to life.

This behavior is certainly understandable from the perspective of AI companies. There's some agreement that all this performative "public worrying" is being used for a variety of ulterior motives (everything from indirectly trying to impress everyone with how "powerful" these things are, to trying to scare politicians into regulating in their favor). What's more confusing is why everyone on the outside doesn't either see through this or, if they do believe it, actually act on it.

To be clear, whether the "dangers" of AI are "real" or not is irrelevant to this observation.

Also, I am using "AI Safety" here not only in the traditional "will it kill us all" sense, but also in the very practical "security problems that are present *today*" sense, *and* in the equally practical "social problems that are also already present *today*" sense.

All three of these are treated very similarly: acknowledged without equivocation and also... ignored?