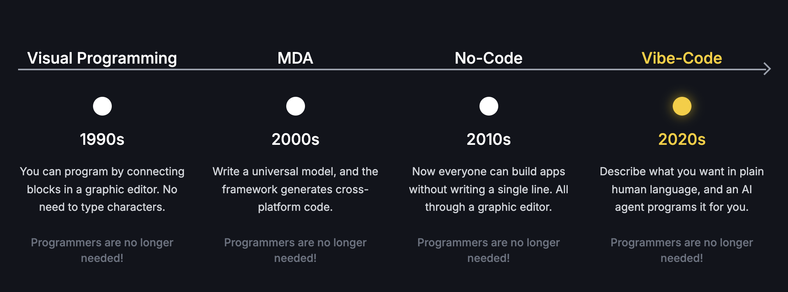

Programmers are no longer needed!

But really, I feel like the people who use this phrase to pitch their product either don’t know how many people actually find it difficult to break down tasks into logical components that a computer can use, or they’re lying.

Software engineering is a mindset, a way of doing something while thinking forward (and I don’t mean just scalability), at least if you want it done with quality. Today you can’t vibe code anything but proofs of concept: prototypes that are in no way ready for production.

I don’t see current LLMs overcoming this soon. It appears that they’ve reached their limits without achieving general AI, which is what would truly make programmers, and humans in general, obsolete.

> programmers, and humans in general

With current levels of technology, they would require humans for maintenance.

Not because they lack self-replication, since they could just build that given proper intelligence, but because their energy costs are too high to go AI all the way.

OK, so I didn’t think enough. They might just end up making robots with expert systems to do the maintenance work, which would avoid wasting resources on “intelligence”.

Yeah, why is it always coders that are supposed to be replaced and not a whole slew of other jobs where a wrong colon won’t break the whole system?

Like management or the C-suite. Fuck, I’d take ChatGPT as a manager any day.

LLMs often fail at the simplest tasks. Just this week I had it fail multiple times where the solution ended up being incredibly simple and yet it couldn’t figure it out. LLMs also seem to “think” any problem can be solved with more code, thereby making the project much harder to maintain.

LLMs won’t replace programmers anytime soon, but I can see sketchy companies taking programming projects and scamming their customers by selling them work generated by LLMs. I’ve heard multiple accounts of this already happening, and similar things happened with no-code solutions before.

Your anecdote is not helpful without seeing the inputs, prompts and outputs. What you’re describing sounds like not using the correct model, or not providing good context or tools to a reasoning model that can intelligently populate context for you.

My own anecdotes:

In two years we have gone from copy/pasting 50-100 line patches out of ChatGPT to having agent-enabled IDEs help me greenfield full-stack projects.

Our product delivery has been accelerated while meeting the same quality standards, verified by internal best practices we’ve codified as deterministic checks in CI pipelines.

The power comes from planning correctly. We’re in the realm of context engineering now, and learning to leverage the right models with the right tools in the right workflow.

Most novice users have the misconception that you can tell it to “bake a cake” and get the cake you had in your mind. The reality is that baking a cake can be broken down into a recipe with steps that can be validated. You as the human-in-the-loop can guide it to bake your vision, or design your agent in such a way that it can infer more information about the cake you desire.
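To make the recipe idea concrete, here is a rough sketch of "steps that can be validated." It is not tied to any agent framework: `Step`, `run_recipe`, and the toy cake steps are hypothetical names, and in a real workflow each `action` would be a model or agent call rather than a plain function.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Step:
    """One recipe step: an action plus a deterministic check on its result."""
    name: str
    action: Callable[[dict], dict]    # takes the current state, returns the new state
    validate: Callable[[dict], bool]  # True if the new state is acceptable


def run_recipe(steps: list[Step], state: dict) -> dict:
    """Run steps in order, stopping at the first one whose validation fails."""
    for step in steps:
        state = step.action(dict(state))  # pass a copy so earlier state is not mutated
        if not step.validate(state):
            raise ValueError(f"step {step.name!r} failed validation: {state}")
    return state


# Toy stand-ins for agent calls (hypothetical).
cake_steps = [
    Step("mix batter", lambda s: {**s, "batter": True},
         lambda s: s.get("batter") is True),
    Step("bake", lambda s: {**s, "baked_minutes": 35},
         lambda s: 30 <= s["baked_minutes"] <= 40),
    Step("frost", lambda s: {**s, "frosting": s["flavor"]},
         lambda s: s["frosting"] == "chocolate"),
]

cake = run_recipe(cake_steps, {"flavor": "chocolate"})
```

The point of the design is that a bad intermediate result is caught at the step that produced it, instead of showing up as the wrong cake at the end.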

If you’re already good at the SDLC, you are rewarded. Some programmers aren’t good at project management, and will find this transition difficult.

You won’t lose your job to AI, but you will lose your job to the human using AI correctly. This isn’t speculation either: we’re also seeing workforce reduction supplemented by senior developers leveraging AI.

Cursor and Claude Code are currently top tier.

GitHub Copilot is catching up, and at a $20/mo price point, it is one of the best ways to get started. Microsoft is slow-rolling some of the delivery of features, because they can just steal the ideas from other projects that do it first.

Claude Code is better than just using Claude in Cursor or Copilot. Claude Code has next-level magic that dispels some of the myths being propagated here about “ai bad.”

Cursor hosts models with their own secret sauce that improves their behavior. They hard-forked VS Code to make a more deeply integrated experience.

Avoid Antigravity (Google) and Kiro (Amazon). They don’t offer enough value over the others right now.

If you already have an OpenAI account, Codex is worth trying. It’s like Claude Code, but not as good.

JetBrains… not worth it for me.

I get it. I was a huge skeptic 2 years ago, and I think that’s part of the reason my company asked me to join our emerging AI team as an Individual Contributor. I didn’t understand why I’d want a shitty junior dev doing a bad job… but the tools, the methodology, the gains… they all started to get better.

I’m now leading that team, and we’re not only doing accelerated development, we’re building products with AI that have received positive feedback from our internal customers, with a launch of our first external AI product going live in Q1.

What are your plans when these AI companies collapse, or start charging the actual costs of these services?

Because right now, you’re paying just a tiny fraction of what it costs to run these services. And these AI companies are burning billions to try to find a way to make this all profitable.

What are your plans when the Internet stops existing or is made illegal (same result)? Or when…

They are not going away. LLMs are already ubiquitous, and there is more than one company.

Ok, so you’re completely delusional.

The current business model is unsustainable. For LLMs to be profitable, they will have to become many times more expensive.

What are you even trying to say? You have no idea what these products are, but you think they are going to fail?

Our company does market research and test pilots with customers; we aren’t just devs operating in a bubble pushing AI. We are listening and responding to customer needs.

I don’t know what your products are. I’m speaking specifically about LLMs and LLMs only.

Seriously research the cost of LLM services and how companies like Anthropic and OpenAI are burning VC cash at an insane clip.

That’s a straw man.

You don’t know how often we use LLM calls in our workflow automation, what models we are using, what our margins are, or what a high cost is to my organization.

That aside, business processes solve for problems like this, and the business does a cost-benefit analysis.

We monitor costs via LiteLLM and Langfuse, and have budgets on our providers.
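A minimal sketch of what a per-provider budget check might look like. To be clear, this is not LiteLLM’s or Langfuse’s API: the `BudgetTracker` class, the provider name, and the dollar amounts are all hypothetical, and only illustrate the idea of recording spend and refusing calls once a limit would be exceeded.

```python
class BudgetExceeded(Exception):
    """Raised when a call would push a provider past its budget."""


class BudgetTracker:
    """Tracks spend per provider against a fixed limit (illustrative only)."""

    def __init__(self, limits_usd: dict[str, float]):
        self.limits = dict(limits_usd)
        self.spent = {provider: 0.0 for provider in limits_usd}

    def record(self, provider: str, cost_usd: float) -> None:
        """Add a call's cost, or raise without recording if it would exceed the limit."""
        if self.spent[provider] + cost_usd > self.limits[provider]:
            raise BudgetExceeded(
                f"{provider}: {cost_usd} would exceed limit {self.limits[provider]}"
            )
        self.spent[provider] += cost_usd

    def remaining(self, provider: str) -> float:
        """Budget left for a provider."""
        return self.limits[provider] - self.spent[provider]


# Hypothetical provider name and amounts.
tracker = BudgetTracker({"provider_a": 10.0})
tracker.record("provider_a", 2.5)
```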

Similar architecture to the Open Source LLMOps Stack: oss-llmops-stack.com

Also, your last note is hilarious to me. “I don’t want all the free stuff because the company might charge me more for it in the future.”

Our design is decoupled, we do comparisons across models, and the costs are currently laughable anyway. The most expensive process is data loading, but good data lifecycles help with containing costs.

Inference is cheap and LiteLLM supports caching.
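For anyone unfamiliar with why caching helps here, a rough sketch of response caching in general. This does not use LiteLLM’s own caching feature; `CachingCompleter` and `fake_backend` are hypothetical names, and the backend is a stand-in for a real model call. Identical (model, prompt) pairs are served from memory instead of triggering a second paid call.

```python
import hashlib
import json
from typing import Callable


class CachingCompleter:
    """Wraps a completion function and caches results by (model, prompt)."""

    def __init__(self, complete: Callable[[str, str], str]):
        self._complete = complete
        self._cache: dict[str, str] = {}
        self.hits = 0
        self.misses = 0

    @staticmethod
    def _key(model: str, prompt: str) -> str:
        # Stable hash of the request so equal requests map to the same key.
        payload = json.dumps({"model": model, "prompt": prompt}, sort_keys=True)
        return hashlib.sha256(payload.encode("utf-8")).hexdigest()

    def __call__(self, model: str, prompt: str) -> str:
        key = self._key(model, prompt)
        if key in self._cache:
            self.hits += 1
            return self._cache[key]
        self.misses += 1
        result = self._complete(model, prompt)
        self._cache[key] = result
        return result


backend_calls = []


def fake_backend(model: str, prompt: str) -> str:
    """Stand-in for a real (billed) model call."""
    backend_calls.append((model, prompt))
    return f"[{model}] answer to: {prompt}"


cached = CachingCompleter(fake_backend)
first = cached("model-x", "What is CI?")
second = cached("model-x", "What is CI?")  # served from cache, no backend call
```

This only pays off for repeated, identical requests, which is common in workflow automation but less so in free-form chat.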

These tools are mostly deterministic applications following the same methodology we’ve used for years in the industry. The development cycle has been accelerated. We are decoupled from specific LLM providers by using LiteLLM, prompt management, and abstractions in our application.

Losing a hosted LLM provider means we proxy LiteLLM to something else without changing contracts with our applications.

We’re using a layered architecture following best practices and have guardrails, observability and evaluations of the AI processes. We have pilot programs and internal SMEs doing thorough testing before launch. It’s modeled after the internal programs we’ve had success with.

We are doing this very responsibly, in a way our customers are asking for. We are not some junior devs vibe coding garbage.
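A minimal sketch of that kind of decoupling, written without LiteLLM so it makes no claims about its API. Every name here (`ChatProvider`, `HostedProvider`, `LocalProvider`, `FallbackProvider`, `Summarizer`) is hypothetical. The application only depends on the small `complete` contract, so the provider behind it can be swapped or can fail over without the application code changing.

```python
from typing import Protocol


class ChatProvider(Protocol):
    """The only contract the application depends on."""
    def complete(self, prompt: str) -> str: ...


class HostedProvider:
    """Stand-in for a hosted LLM API; can be switched off to simulate an outage."""
    def __init__(self, available: bool = True):
        self.available = available

    def complete(self, prompt: str) -> str:
        if not self.available:
            raise ConnectionError("hosted provider unavailable")
        return f"hosted: {prompt}"


class LocalProvider:
    """Stand-in for a self-hosted model."""
    def complete(self, prompt: str) -> str:
        return f"local: {prompt}"


class FallbackProvider:
    """Tries the primary provider, then the secondary if the primary is unreachable."""
    def __init__(self, primary: ChatProvider, secondary: ChatProvider):
        self.primary = primary
        self.secondary = secondary

    def complete(self, prompt: str) -> str:
        try:
            return self.primary.complete(prompt)
        except ConnectionError:
            return self.secondary.complete(prompt)


class Summarizer:
    """Application code: knows nothing about which provider it is talking to."""
    def __init__(self, provider: ChatProvider):
        self._provider = provider

    def summarize(self, text: str) -> str:
        return self._provider.complete(f"Summarize: {text}")


hosted = HostedProvider()
app = Summarizer(FallbackProvider(hosted, LocalProvider()))
before = app.summarize("release notes")
hosted.available = False  # simulate losing the hosted provider
after = app.summarize("release notes")
```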

Accelerated delivery. We use it for intelligent, verifiable code generation. It’s the same work the senior dev was going to complete anyway, but now they cut out a lot of mundane, time-intensive parts.

We still have design discussions that drive the backlog the developer works off with their AI.

I seriously doubt your quality is maintained when an LLM writes most of your code, unless a human audits every line and understands what it is doing and why.

If you break the tasks small enough that you can do this each step, it is no longer writing a full application, it’s writing small snippets, and you’re code-pairing with it.

We have human code review, and our backlog was well curated prior to AI. Strongly defined acceptance criteria, good application architecture, and unit tests with 100% coverage are just a few ways we keep things on the rails.

I don’t see what the idea of pair coding has to do with this. Never did I claim I’m one-shotting agents.

Great? Business is making money. We’re compliant on security, and we have no trouble maintaining what we’ll be maintaining less of in the future.

Some more real-world examples:

Aider has written 7% of its own code (outdated, now 70%): aider.chat/2024/05/24/self-assembly.html

LibreChat is largely contributed to by Claude Code, it’s the current best open source ChatGPT client, and they’ve just been acquired by ClickHouse.

Such suffering from the quality!

Your product is an LLM tool written with LLM tools. That’s hilarious.

If the goal is to see how much middleware you can sell idiots, you’re doing great!

Well, have you seen what game engines have done to us?

When tools become more accessible, it mostly results in more garbage.

Your guess is wrong. :P And anyways, I didn’t say all games using an easy-to-use game engine are shit.

If you use an easy game engine (idk if unreal would even fit this, btw), it is easier to produce something usable at all. Meanwhile, the effort needed to make the game good (i.e. game design) stays the same. The result is that games reach a state of being publishable with a lower amount of effort spent in development.

The good massively outweighs the bad.

That’s your opinion.

I know I have stopped purchasing as many games because the average quality has tanked.

59% of games sold on Steam use either Unity or Unreal. Not released, but sold. I think it’s the general opinion and you’re an outlier here. I invite you to post lists of your recently bought games or most played games. I doubt they all have their own custom engine.

Only 10% of new games used a custom engine, and they are by far mostly triple-A games from big companies. Unless you just don’t play indie games, you have bought Unreal or Unity games even if you buy less in general.

And yes, that means 10% account for 40% of sales, but it’s no surprise since having your own engine and a big budget go hand in hand.

It’s absolutely insane to actually think we are worse off. Would you rather have a walled garden, where only multi-million-dollar companies can afford to develop games, just because of a few shovelware garbage titles that just get ignored anyways? Do you get a shit game as a gift every Christmas or something? The negatives aren’t even affecting anything.

Here are the stats if you are interested. creativebloq.com/…/unreal-engine-dominates-as-the…

Help me. I am stuck working in SDET and my job makes me do a cert every 6 months that’s “no code” and I need to transition to writing code.

I’ve been an SDET since 2013, in C# and Java. I am so fucking sick of Selenium and getting manual testing dumped on my lap. I led a test team for a Fortune 500 company as a contractor for a project. I can also program in the useless Salesforce stack (Apex, LWC).

I am the sole breadwinner for my household. I have no fucking idea what to do.

If you’re not already messing with MCP tools that do browser orchestration, you might want to investigate that.

I don’t want to make any assumptions about additional tooling, but this is a great one in this space www.agentql.com

While it’s possible to see gains in complex problems through brute force, learning more about prompt engineering is a powerful way to save time, money, tokens and frustration.

I see a lot of people saying, “I tried it and it didn’t work,” but have they read the guides or just jumped right in?

For example, if you haven’t read the Claude Code guide, you might never have set up MCP servers or taken advantage of slash commands.

Your CLAUDE.md might be trash, and maybe you’re using @file wrong and blowing tokens or biasing your context wrong.

LLM context windows can only scale so far before you start seeing diminishing returns.

www.anthropic.com/…/claude-code-best-practices

There are community guides that take this even further, but these are some starting references I found very valuable.

Yup. It’s insanity that this is not immediately obvious to every software engineer. I think we have some implicit tendency to assume we can make any tool work for us, no matter how bad.

Sometimes, the tool is simply bad and not worth using.