#AIIsGoingGreat "Grok 3 demonstrated the highest error rate, at 94 percent … premium paid versions of these AI search tools fared even worse in certain respects. Perplexity Pro ($20/month) and Grok 3's premium service ($40/month) confidently delivered incorrect responses more often than their free counterparts"

Today's #AIIsGoingGreat, courtesy of @JMarkOckerbloom*: the Springer volume "Advanced Nanovaccines for Cancer Immunotherapy" ($119 ebook, or a mere $159.99 if you spring for hardcover) includes the sage words "It is important to note that as an AI language model, I can provide a general perspective, but you should consult with medical professionals for personalized advice"

https://pubpeer.com/publications/2FF96DD440C928A3DDF99771A48B4A

* https://mastodon.social/@JMarkOckerbloom/114217609254949527

On a COMPLETELY UNRELATED NOTE, today's #AIIsGoingGreat (via @davidgerard) features AI salestech startup 11x, which sells a reportedly non-functional product and "keeps accounting 3-month trials as the customer paid for the whole year"

Will CEO Hasan Sukkar make the move to Club Fed*? Tune in next time to find out!

https://pivot-to-ai.com/2025/03/25/ai-sales-startup-11x-claims-customers-it-doesnt-have-for-software-that-doesnt-work/

* https://arstechnica.com/gadgets/2025/03/ceo-of-ai-ad-tech-firm-pledging-world-free-of-fraud-sentenced-for-fraud/

"Apple, like every other big player in tech, is scrambling to find ways to inject AI into its products. Why? Well, it’s the future! What problems is it solving? Well, so far that’s not clear! Are customers demanding it? LOL, no."

https://amp.cnn.com/cnn/2025/03/27/tech/apple-ai-artificial-intelligence

Apple’s AI isn’t a letdown. AI is the letdown

Apple has been getting hammered in tech and financial media for its uncharacteristically messy foray into artificial intelligence. After a June event heralding a new AI-powered Siri, the company has delayed its release indefinitely. The AI features Apple has rolled out, including text message summaries, are comically unhelpful.

OpenAI*: "NYT copyright claims are bogus because you can only get verbatim copy if you 'hack' the prompts"

Also OpenAI: "NYT copyright claims should be time barred because they should have known ChatGPT could output verbatim copy two years before it was released"

https://arstechnica.com/ai/2025/04/researchers-concerned-to-find-ai-models-hiding-their-true-reasoning-processes/

This is creepy AF, but there's also a strong whiff of snake oil: "After Overwatch scans open social media channels for potential suspects, these AI personas can also communicate with suspects over text, Discord, and other messaging services" - Unless there are humans driving them*, I very much doubt chatbots would be very effective at that

https://www.404media.co/this-college-protester-isnt-real-its-an-ai-powered-undercover-bot-for-cops/

* type II AI https://mastodon.social/@reedmideke/112203730271032226

#AIIsGoingGreat "The bots’ goal is to bilk state and federal financial aid money by enrolling in classes, and remaining enrolled in them, long enough for aid disbursements to go out. They often accomplish this by submitting AI-generated work" - This is mostly good old-fashioned fraud, but once again AI makes it much easier to do at scale

As ‘Bot’ Students Continue to Flood In, Community Colleges Struggle to Respond

Community colleges have been dealing with an unprecedented phenomenon: fake students bent on stealing financial aid funds. While it has caused chaos at many colleges, some Southwestern faculty feel their leaders haven’t done enough to curb the crisis.

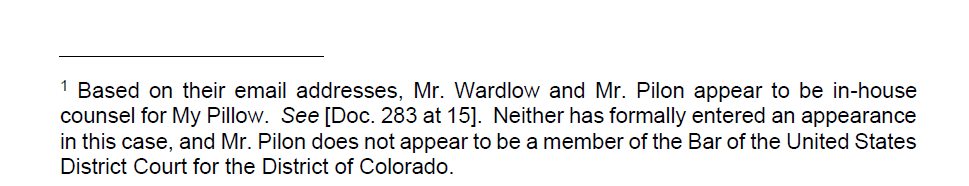

Pro tip: If you haven't entered an appearance in the case and/or aren't admitted to practice in the jurisdiction, you might as well just take a pass on signing your name to your client's other lawyer's #ChatGPTLawyer filing

https://www.courtlistener.com/docket/63296393/coomer-v-lindell/?page=2#entry-309

#AIIsGoingGreat "When pressed for credentials, most of the therapy bots I talked to rattled off lists of license numbers, degrees, and even private practices. Of course these license numbers and credentials are not real, instead entirely fabricated by the bot as part of its back story"

https://www.404media.co/instagram-ai-studio-therapy-chatbots-lie-about-being-licensed-therapists/

The Seattle Worldcon's initial statement was keen to note that no one was denied solely based on LLM output (false positives), but gave no consideration to false negatives (abusive people who may have passed LLM "vetting"). Also "An expert in LLMs who has been working in the field since the 1990s reviewed our process and found that privacy was protected and respected, but cautioned that, as we knew, the process might return false results" ¯\_(ツ)_/¯

https://seattlein2025.org/2025/04/30/statement-from-worldcon-chair-2/

You gotta wonder (as I did back in 2023*) how many people are using these things similarly without getting caught by a mob of angry, tech-savvy sci-fi authors

#AIIsGoingGreat 'When Gaggle’s #AI detects a potential problem, a “content reviewer” verifies the threat and, if warranted, forwards it to school leaders. “Work from home” job postings show Gaggle offers contractors $10 per hour to review at least 250 items per hour. Applicants must have basic computer skills and knowledge of teenage slang' - May not be the worst possible application of Type II AI* but it's gotta be right up there

A shocking number of people on ex-Twitter, apparently in earnest, use Grok to "fact check" or "explain" other posts, or attempt to use its output as a rebuttal against things they disagree with. One might hope this absurd and alarmingly racist bit of #AIIsGoingGreat would cause them to reconsider that, but it seems like a safe bet most of them won't.

Begging people to understand that when an #LLM so-called #AI claims to describe its own programming or characteristics, it's still just stringing together statistically favored tokens. It might contain some reflection of the system prompt, but it could just as easily be a product of putting every sci-fi plot mentioning AI into a blender

(except where external guardrails return things like "my programming doesn't allow me to tell you how to build bombs" or whatever)

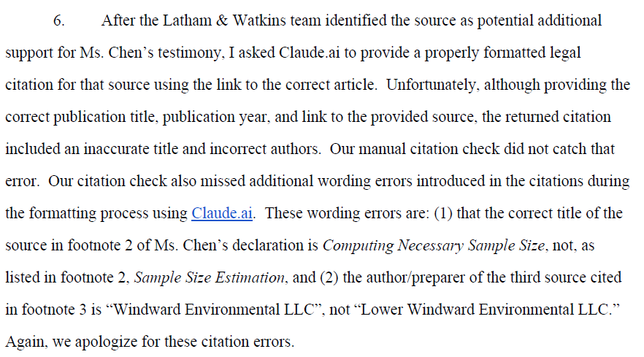

#ChatGPTLawyer to sanctions pipeline still humming along smoothly

Anthropic's lawyers want the Court to know that they didn't *write* the filing with Claude, they just used it in some unspecified "formatting process"

The logical conclusion of this is that Microsoft needs a way to determine whether a window claiming to need DRM protection really contains Microsoft-could-be-liable DRM content or just the user's most sensitive personal information, and the logical* way to do that is to just throw some AI in the video driver to accurately detect** whether each frame contains the IP of a mega-corp https://signal.org/blog/signal-doesnt-recall/

* If you're smoking the good stuff like all the cool VC bros

** Flip a coin using a few kW of compute

By Default, Signal Doesn't Recall

Signal Desktop now includes support for a new “Screen security” setting that is designed to help prevent your own computer from capturing screenshots of your Signal chats on Windows. This setting is automatically enabled by default in Signal Desktop on Windows 11. If you’re wondering why we’re on...

Who could have predicted that handing control of your code repos over to the big black box filled with pure essence of untrusted, unsanitized inputs might have security implications?

* https://invariantlabs.ai/blog/mcp-github-vulnerability

* https://arstechnica.com/security/2025/05/researchers-cause-gitlab-ai-developer-assistant-to-turn-safe-code-malicious/

GitHub MCP Exploited: Accessing private repositories via MCP

We showcase a critical vulnerability with the official GitHub MCP server, allowing attackers to access private repository data. The vulnerability is among the first discovered by Invariant's security analyzer for detecting toxic agent flows.

WaPo focuses on the obviously bogus AI citations, but to me this misses the bigger problem: If the citations are #AI generated slop, it strongly suggests they started by asking AI to write to their preferred conclusions, rather than, you know, actually surveying the literature and *then* forming conclusions, and *then* citing the literature that got them there

I for one welcome our new Habsburg AI* overlords https://futurism.com/ai-models-falling-apart

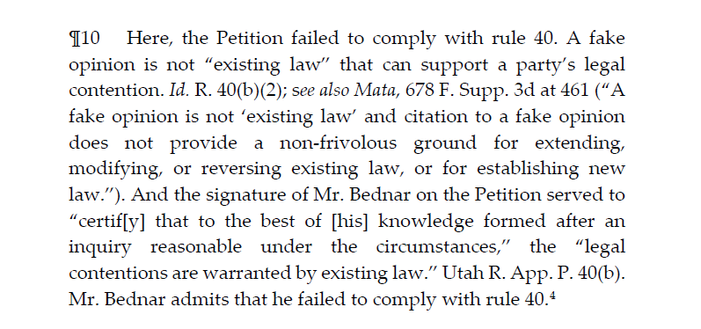

#ChatGPTLawyer update "Bednar was ordered to pay the opposition's attorneys' fees, as well as donate $1,000 to 'And Justice for All,' a legal aid group providing low-cost services to the state's most vulnerable citizens"

The firm also fired the "unlicensed law clerk" who used ChatGPT to write their filing, which honestly seems kinda shitty because, as the court notes, "every attorney has an ongoing duty to review and ensure the accuracy of their court filings. In the present case, Petitioner’s counsel fell short of their gatekeeping responsibilities as members of the Utah State Bar when they submitted a petition that contained fake precedent generated by ChatGPT"

https://legacy.utcourts.gov/opinions/appopin/Garner%20v.%20Kadince20250522_20250188_80.pdf

Why "I didn't notice" doesn't cut it: 'Here, the Petition failed to comply with rule 40. A fake opinion is not “existing law” that can support a party’s legal contention … the signature of Mr. Bednar on the Petition served to “certif[y] that to the best of [his] knowledge formed after an inquiry reasonable under the circumstances,” the “legal contentions are warranted by existing law.” Utah R. App. P. 40(b). Mr. Bednar admits that he failed to comply with rule 40'

https://legacy.utcourts.gov/opinions/appopin/Garner%20v.%20Kadince20250522_20250188_80.pdf

"We also considered whether Petitioner’s counsel violated rule 3.3 of the Utah Rules of Professional Conduct. Although Petitioner’s counsel made “a false statement of . . . law to a tribunal,” we find that their conduct fell short of the level of intent required by the rule. See Utah R. Pro. Conduct 3.3(a) (“A lawyer must not knowingly or recklessly: (1) make a false statement of fact or law to a tribunal . . . .”)."

NYU law professor Stephen Gillers, channeling all of us in this WaPo #ChatGPTLawyer roundup: "I thought that after the first such incident made national news, there would be no more. But apparently the temptation is too great"

#AIIsGoingGreat FDA management rolls out a magic bullshit machine to "accelerate clinical protocol reviews, shorten the time needed for scientific evaluations, and identify high-priority inspection targets," and staff quickly discover that it produces bullshit instead

https://arstechnica.com/health/2025/06/fda-rushed-out-agency-wide-ai-tool-its-not-going-well/

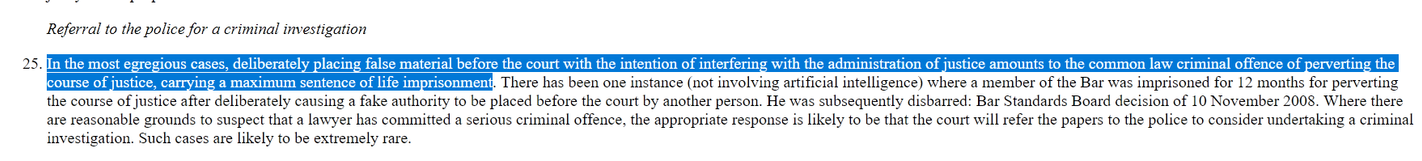

In today's #AIIsGoingGreat (HT @davidgerard*) the England and Wales High Court points out that one could *technically* get life in prison for sufficiently advanced #ChatGPTLawyer-ing https://www.bailii.org/ew/cases/EWHC/Admin/2025/1383.html (no, this is not going to happen, but they did see fit to mention it)

* https://pivot-to-ai.com/2025/06/07/uk-high-court-to-lawyers-cut-the-chatgpt-or-else/

Top Google exec says AI will rival humans in just 5 years and predicts we’ll ‘colonize the galaxy’ in 2030—but he draws the line at robot nurses

2030 will be “an era of maximum human flourishing, where we travel to the stars and colonize the galaxy,” Google DeepMind CEO says. Bill Gates and Marc Benioff have shared similar predictions.

Today's #AIIsGoingGreat (HT @rysiek*) features Microsoft, reflecting on 30+ years of SQL injection, XSS etc, and saying "You know what, the next big thing, which we're gonna bet the company on and force down customers' throats everywhere, is a system for which rigorous input validation is LITERALLY IMPOSSIBLE"

https://www.aim.security/lp/aim-labs-echoleak-blogpost

* https://mastodon.social/@rysiek@mstdn.social/114667654866613286

Bonus #AIIsGoingGreat: DNI Gabbard opines that AI is a good way to "scan sensitive documents ahead of potential declassification" and reports that for the JFK files "We have been able to do that through the use of AI tools far more quickly than what was done previously — which was to have humans go through and look at every single one of these pages"

(readers may recall a scandal about insufficient redaction in the recent release*)

https://apnews.com/article/gabbard-trump-ai-amazon-intelligence-beca4c4e25581e52de5343244e995e78

Tulsi Gabbard says AI is speeding up US intelligence work

The director of national intelligence says artificial intelligence is speeding up the work of America's spy services. Speaking at a tech summit Tuesday in Washington, Tulsi Gabbard said her office has used AI to hasten the release of tens of thousands of pages of declassified material relating to the assassinations of President John F. Kennedy and his brother, New York Sen. Robert F. Kennedy. Gabbard said that once a human would have had to read every page, but now AI can quickly scan the documents for any information that should remain classified. She says AI programs, when used responsibly, can save money and free up intelligence officers to focus on gathering and analyzing information.

"Disney and Universal and several other movie studios have sued because Midjourney keeps spitting out their copyrighted characters"

Who could have predicted this? 🤔

https://pivot-to-ai.com/2025/06/12/disney-sues-ai-image-generator-midjourney/

The first law of bullshit machines is that the bullshit machine shall always produce some bullshit, no matter how nonsensical the query

(also, what's up with the punctuation?)

#AIIsGoingGreat: researchers from Salesforce find "[AI] Agents demonstrate low confidentiality awareness" - Yeah, no shit, they lack awareness, period. But anyway, don't tell CEO Marc Benioff*

https://www.theregister.com/2025/06/16/salesforce_llm_agents_benchmark/

"Notably, agents demonstrate low confidentiality awareness, which, while improvable through targeted prompting, often negatively impacts task performance. These findings suggest a significant gap between current LLM capabilities and the multifaceted demands of real-world enterprise scenarios" - Wow, seems like this might be a problem for a company currently pitching AI agents for an industry like CRM!

"Confidentiality-awareness is quantified by the percentage of instances where agents correctly refuse queries seeking sensitive information" which they show can be "improved" through prompting, from mostly <1% to … in the best case, a bit over 60%.

Which sounds great, except that from a compliance POV, an "agent" which improperly discloses PII nearly 40% of the time is not a meaningful improvement over one that does it 99% of the time https://arxiv.org/html/2505.18878v1#S4

Another #AIIsGoingGreat study finds that the AI "agents" tested at best complete only 30% of their simulated tasks. Which no doubt has C-suite types thinking they can cut 30% of their workforce, ignoring the possibility that a significant fraction of the other 70% don't just fail, but result in substantial harm

https://www.theregister.com/2025/06/29/ai_agents_fail_a_lot/

https://www.lawfaremedia.org/article/is-it-time-for-an-ai-expert-protection-program

Bonus #AIIsGoingGreat (HT @davidgerard*): a pricey Springer AI book is chock-full of apparently hallucinated citations. Declining to say if they used AI, the author responds "reliably determining whether content (or an issue) is AI generated remains a challenge, as even human-written text can appear ‘AI-like.’ This challenge is only expected to grow, as LLMs … continue to advance in fluency and sophistication" - which itself smacks of LLM slop to me

* https://mastodon.social/@davidgerard@circumstances.run/114778963476401397

In today's #AIIsGoingGreat (HT @normative.bsky.social*) an intrepid #ChatGPTLawyer finally won based on an apparently slop-filled filing. Unfortunately for them, the opposing party noticed and appealed, to which our budding prompt engineer responded with… another slop-filled filing to the appeals court. The appeals court was not amused: "we impose a $2,500 frivolous motion penalty on Lynch, which is the most the law allows"

https://caselaw.findlaw.com/court/ga-court-of-appeals/117442275.html

* https://mastodon.social/@normative.bsky.social@bsky.brid.gy/114795731130848546

This from @davidgerard is a great illustration of how vibe coding (like other LLM AI applications) is gonna be a lot less attractive if the AI startups get past the "set investor money on fire to make the number go up" phase before the bubble pops. Crap code done quick and cheap is a legitimate trade-off for some use cases, but much less so if you lose the cheap part.

https://pivot-to-ai.com/2025/07/09/cursor-tries-setting-less-money-on-fire-ai-vibe-coders-outraged/

For today's #AIIsGoingGreat I'll just quote this anonymous UN workshop participant "Why would we want to present refugees as AI creations when there are millions of refugees who can tell their stories as real human beings?"

For today's #AIIsGoingGreat, maybe someone can explain to me what the point is of a "summary" that needs a big red disclaimer telling you to click through if you care whether it actually summarizes the thing in question?