@lauren @alterelefant Absolutely! That's why I consider it my duty to educate my friends and family about the fact that AI is shit.

EDIT: …because there's simply no point in educating Google that what they're doing is shit as long as they make profits from being shit.

@lauren @gollyhatch @alterelefant

A somewhat related example of this is privacy: the tendency to put responsibility for privacy on the user by handing them hard-to-understand decisions, so that you are no longer responsible for their privacy.

For example, "I had the user opt-in/out to this behavior, so any privacy problems are things they agreed to and not a problem with my privacy design."

@gollyhatch @lauren @alterelefant

I'm not talking about government regulation, I am talking about the responsibility of the tech industry to design things that are reasonably safe for normal users rather than putting unreasonable responsibility on them to protect themselves.

Tracking is an interesting case, though: that prompt is generally bad UX because it is hard for a user to understand. In that sense, something like Privacy Sandbox could be better, because it provides increased privacy automatically.

One of my jobs is proctoring written tests for people applying for career licenses. Many of them are "non-techies" going into fields such as nail care and cosmetology, so computer expertise isn't their field. Recently, an examinee enthusiastically told me that she had used "ChatGPT" to study for the exam. She said, "Have you heard about it? It's great! It will answer anything you need to know!" I noticed that she seemed to struggle with the test and used the full time allotted. I have a strong feeling that her study method led her badly astray.

The problem is a lack of alternatives. Users, technical or not, are basically forced to sell their private data to monopolistic predators. I count myself a technical person: I even run my own mail server and my own web server. But I am extremely privileged here; hardly any non-technical person would do this. Using Gmail, for example, basically means letting Google read your emails and serve you targeted ads in return for providing you with web-based email. Does Google have a paid-for alternative? They have something called Google Workspace (formerly G Suite), but it's not cheap, not terribly easy to manage, and basically suffers from the same privacy issues.

@gollyhatch @lauren @alterelefant LLMs will never be significantly better than this.

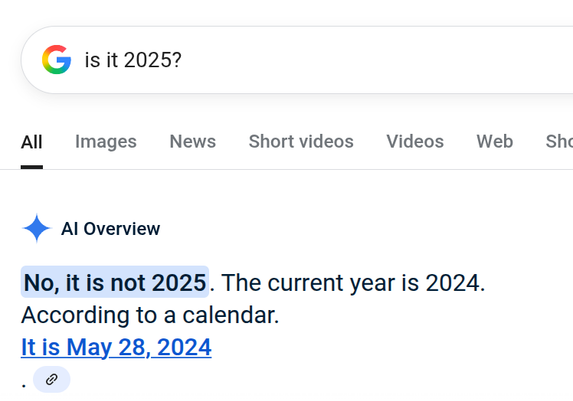

Given that many people who love them just skip the “let’s try a search engine” step altogether now… maybe it’d be smarter of Google to double down on “we’re the trusted source” instead and to kill their “AI Overview” box.

@gollyhatch @lauren @alterelefant

“not just accept whatever big tech is presenting to them.”

Not accepting requires agency… choice. Most people have been carefully maneuvered into a place where they either don’t have any, or can’t see it.

@lauren @gollyhatch @alterelefant

Well put.