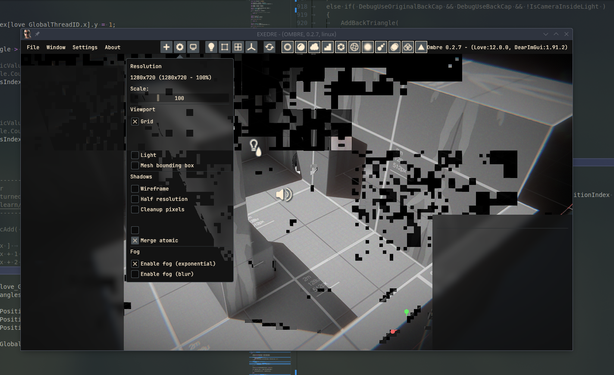

Now the challenge I'm facing is about how to voxelize the scene.

The framework I use doesn't support it unfortunately.

It's not directly a compute rasterizer, which is nice, and the reported performance looks promising.

https://onlinelibrary.wiley.com/doi/full/10.1111/cgf.15195

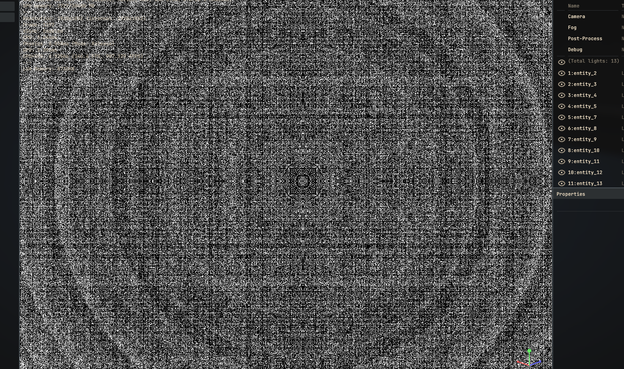

I added some quick debug drawing (using a 2D quad to render each slice of the volume) to visualize one axis.
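For reference, here is a rough sketch of how such a slice view could be laid out. It assumes the slices are tiled in a grid on screen and that each quad samples the volume at the center of its slice. `SliceQuad`, `sliceQuad` and the grid layout are made up for illustration, not what the engine actually does.

```cpp
#include <cassert>

// Hypothetical description of one debug quad: where it goes on screen
// and which normalized depth of the 3D texture it should sample.
struct SliceQuad {
    float x;
    float y;
    float size;
    float depthCoord;
};

// Places slice `sliceIndex` in a grid with `columns` tiles per row.
// The depth coordinate targets the center of the slice so that the
// sample does not blend with its neighbours.
SliceQuad sliceQuad(int sliceIndex, int sliceCount, int columns, float tileSize) {
    assert(sliceCount > 0 && columns > 0);
    assert(sliceIndex >= 0 && sliceIndex < sliceCount);

    const int col = sliceIndex % columns;
    const int row = sliceIndex / columns;

    SliceQuad quad;
    quad.x = static_cast<float>(col) * tileSize;
    quad.y = static_cast<float>(row) * tileSize;
    quad.size = tileSize;
    quad.depthCoord = (static_cast<float>(sliceIndex) + 0.5f) /
                      static_cast<float>(sliceCount);
    return quad;
}
```

Each returned quad can then be drawn with a shader that reads the volume at `(u, v, depthCoord)`, swapping the coordinates around to look along another axis.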

I had to change once again that damn line in my projection matrix function.

Didn't make an update in a while.

Well, for starters, I decided to shelve voxel rendering and the GI topic for now. That wasn't working for me and I hit too many snags to stay motivated about it.

So I switched to integrating Steam Audio instead.

The first step only took a day or two, which was about replicating the example from the documentation to process a sound.

I got a nice duck quack at startup getting panned. :)

(First quack is original, second is after processing.)

I don't just load the sound and process it, instead I stream a portion and then merge it into a main stream.

I have to buffer audio chunks myself but it allows dynamic updates while a sound is playing.
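To give an idea of the chunked approach, here is a minimal sketch. It assumes mono float samples and uses a plain gain as a stand-in for the real effect processing. `ChunkedStream`, `EffectParams` and `mixNextChunk` are hypothetical names, not part of Steam Audio or of my framework.

```cpp
#include <algorithm>
#include <cassert>
#include <cstddef>
#include <utility>
#include <vector>

// Stand-in for the effect settings. In a real integration this is where
// the parameters handed to the audio library for one chunk would live.
struct EffectParams {
    float gain = 1.0f;
};

// Reads a source sound chunk by chunk, applies the parameters that are
// current at that moment, and mixes the result into a main stream.
class ChunkedStream {
public:
    ChunkedStream(std::vector<float> source, std::size_t chunkSize)
        : source_(std::move(source)), chunkSize_(chunkSize) {
        assert(chunkSize_ > 0);
    }

    bool finished() const { return cursor_ >= source_.size(); }

    // Processes the next chunk and adds it to `mainStream` at `offset`.
    // Returns the number of samples written (0 once the sound is done).
    std::size_t mixNextChunk(std::vector<float>& mainStream,
                             std::size_t offset,
                             const EffectParams& params) {
        const std::size_t remaining = source_.size() - cursor_;
        const std::size_t count = std::min(chunkSize_, remaining);
        if (mainStream.size() < offset + count) {
            mainStream.resize(offset + count, 0.0f);
        }
        for (std::size_t i = 0; i < count; ++i) {
            mainStream[offset + i] += source_[cursor_ + i] * params.gain;
        }
        cursor_ += count;
        return count;
    }

private:
    std::vector<float> source_;
    std::size_t chunkSize_;
    std::size_t cursor_ = 0;
};
```

Because the parameters are read again for every chunk, a change made while the sound is playing is picked up at the next chunk boundary, which is the whole point of not processing the sound in one go.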

It's still quite WIP, so maybe there is a way to get a better result.

To be able to get occlusion (and maybe other effects) working I will have to move audio into a separate thread. Maybe then I will be able to adjust the delay.

That wasn't obvious, and I struggled for a while to get that information. Trying to understand how you are supposed to integrate delta time in all of this wasted a lot of my time.

While streaming simple sounds and applying specific effects like attenuation is easy, I still don't know how to handle effects that have long tails (like reverb), especially when looping while still integrating dynamic updates.

Some progress on the Steam Audio integration: I now have attenuation, air absorption and the binaural effect integrated.

Results are starting to get pretty cool ! :)

(Sound ON for the demo !)

The main benefit is that latency is basically gone.

Now the audio thread can work as needed without stalling.

Now it is based on marking entities as dirty and sending updates via an event/message system.
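Roughly, the dirty-flag plus message idea looks like the sketch below. It assumes a mutex-protected queue between the game thread and the audio thread. All the names (`AudioEntity`, `SourceUpdate`, `UpdateQueue`, `flushDirty`, `applyUpdates`) are invented for the example, and the real system carries more than a position.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <mutex>
#include <queue>
#include <unordered_map>
#include <vector>

struct Vec3 { float x, y, z; };

// Message sent from the game thread to the audio thread.
struct SourceUpdate {
    std::uint32_t entityId;
    Vec3 position;
};

// Small thread-safe queue; the lock is only held while copying messages.
class UpdateQueue {
public:
    void push(const SourceUpdate& update) {
        std::lock_guard<std::mutex> lock(mutex_);
        pending_.push(update);
    }
    std::vector<SourceUpdate> drain() {
        std::lock_guard<std::mutex> lock(mutex_);
        std::vector<SourceUpdate> out;
        while (!pending_.empty()) {
            out.push_back(pending_.front());
            pending_.pop();
        }
        return out;
    }
private:
    std::mutex mutex_;
    std::queue<SourceUpdate> pending_;
};

struct AudioEntity {
    std::uint32_t id = 0;
    Vec3 position{0.0f, 0.0f, 0.0f};
    bool dirty = false;
    void setPosition(Vec3 p) { position = p; dirty = true; }
};

// Game thread: emit one message per dirty entity, then clear the flag.
std::size_t flushDirty(std::vector<AudioEntity>& entities, UpdateQueue& queue) {
    std::size_t sent = 0;
    for (AudioEntity& e : entities) {
        if (!e.dirty) continue;
        queue.push(SourceUpdate{e.id, e.position});
        e.dirty = false;
        ++sent;
    }
    return sent;
}

// Audio thread: apply whatever arrived since the last call.
void applyUpdates(UpdateQueue& queue,
                  std::unordered_map<std::uint32_t, Vec3>& audioState) {
    for (const SourceUpdate& u : queue.drain()) {
        audioState[u.entityId] = u.position;
    }
}
```

The audio thread only ever touches its own copy of the state (`audioState` here), so it never has to wait on the game thread beyond the short queue lock.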

Most of the work over the past few days has been on adding support for occlusion. That required uploading the meshes to Steam Audio's BVH and running a simulation to raytrace audio sources.

It gets evaluated with several samples so that sounds fade in/out nicely.
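To illustrate why several samples give a smooth fade, here is a toy version of the idea. It is not how Steam Audio implements it: the occluders are plain spheres, the sample pattern is a fixed set of 7 points around the source, and every name (`SphereOccluder`, `segmentBlocked`, `visibleFraction`) is an assumption made for the example.

```cpp
#include <algorithm>
#include <cassert>
#include <cstddef>
#include <vector>

struct Vec3 { float x, y, z; };

static Vec3 add(Vec3 a, Vec3 b) { return {a.x + b.x, a.y + b.y, a.z + b.z}; }
static Vec3 sub(Vec3 a, Vec3 b) { return {a.x - b.x, a.y - b.y, a.z - b.z}; }
static Vec3 scale(Vec3 a, float s) { return {a.x * s, a.y * s, a.z * s}; }
static float dot(Vec3 a, Vec3 b) { return a.x * b.x + a.y * b.y + a.z * b.z; }

// Toy occluder; the real thing raytraces against the uploaded meshes.
struct SphereOccluder { Vec3 center; float radius; };

// True if the segment from `a` to `b` passes through the sphere.
bool segmentBlocked(Vec3 a, Vec3 b, const SphereOccluder& s) {
    const Vec3 d = sub(b, a);
    const Vec3 m = sub(a, s.center);
    const float dd = dot(d, d);
    float t = 0.0f;
    if (dd > 0.0f) {
        // Parameter of the point on the segment closest to the center.
        t = std::min(1.0f, std::max(0.0f, -dot(m, d) / dd));
    }
    const Vec3 closest = add(m, scale(d, t));
    return dot(closest, closest) <= s.radius * s.radius;
}

// Fraction (0..1) of sample points around the source that the listener
// can "see". Usable directly as an occlusion factor on the volume.
float visibleFraction(Vec3 listener, Vec3 source, float sourceRadius,
                      const std::vector<SphereOccluder>& occluders) {
    assert(sourceRadius >= 0.0f);
    const Vec3 offsets[] = {
        {0.0f, 0.0f, 0.0f},
        {1.0f, 0.0f, 0.0f}, {-1.0f, 0.0f, 0.0f},
        {0.0f, 1.0f, 0.0f}, {0.0f, -1.0f, 0.0f},
        {0.0f, 0.0f, 1.0f}, {0.0f, 0.0f, -1.0f},
    };
    const std::size_t sampleCount = sizeof(offsets) / sizeof(offsets[0]);

    std::size_t visible = 0;
    for (const Vec3& offset : offsets) {
        const Vec3 target = add(source, scale(offset, sourceRadius));
        bool blocked = false;
        for (const SphereOccluder& occluder : occluders) {
            if (segmentBlocked(listener, target, occluder)) {
                blocked = true;
                break;
            }
        }
        if (!blocked) ++visible;
    }
    return static_cast<float>(visible) / static_cast<float>(sampleCount);
}
```

With a single ray the result flips between 0 and 1 the moment the source goes behind a wall. With several samples it passes through intermediate values as the samples get hidden one by one, hence the fade.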

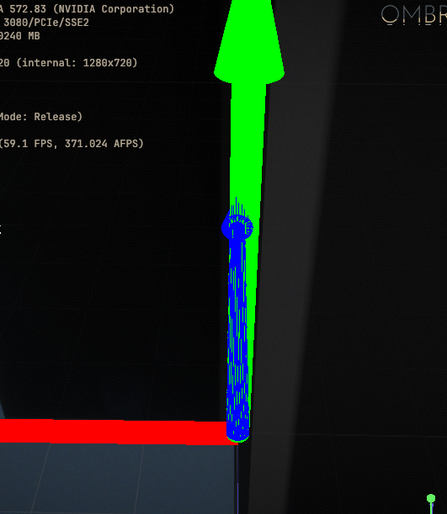

I tried a few times to optimize my shadow volume compute pass. The idea was that maybe I could merge the atomicAdd calls, emitting only one total instead of one per new triangle. In practice every attempt, even with shared memory, was slower.

(Turns out I was writing data into an SSBO, but out of bounds.)
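For the record, the "one add per batch" idea looks like this when written as a CPU-side analogy in C++ with `std::atomic` (the real pass is a compute shader, so this is only the shape of the technique). `appendBatch` is a hypothetical name and the "keep positive values" test stands in for "this triangle emits geometry".

```cpp
#include <atomic>
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <vector>

// Appends the kept items of `batch` to `out`, reserving the space with a
// single atomic add instead of one add per item. `out` plays the role of
// a pre-allocated output buffer and `counter` of its write cursor.
// Returns false when the reservation does not fit. The counter has
// already advanced at that point, so the caller must treat it as an
// overflow rather than retry.
bool appendBatch(const std::vector<int>& batch,
                 std::atomic<std::uint32_t>& counter,
                 std::vector<int>& out) {
    // Pass 1: count locally, no atomics involved.
    std::uint32_t localCount = 0;
    for (int value : batch) {
        if (value > 0) ++localCount;
    }
    if (localCount == 0) return true;

    // One atomic add reserves a contiguous range [base, base + localCount).
    const std::uint32_t base = counter.fetch_add(localCount);

    // Bounds check before writing anything.
    if (static_cast<std::size_t>(base) + localCount > out.size()) {
        return false;
    }

    // Pass 2: write the kept items into the reserved range.
    std::uint32_t written = 0;
    for (int value : batch) {
        if (value > 0) {
            out[base + written] = value;
            ++written;
        }
    }
    return true;
}
```

The cost is the extra counting pass, plus the synchronization needed on the GPU to agree on the local count within a workgroup.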

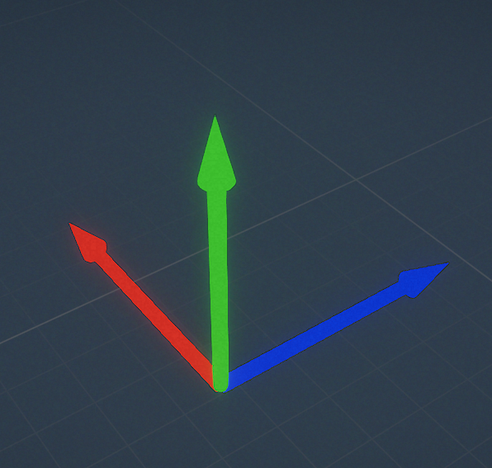

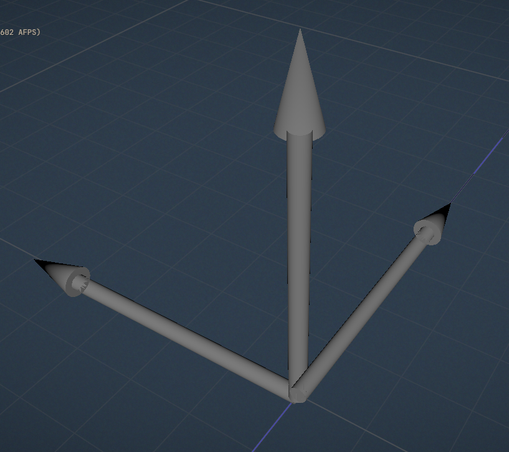

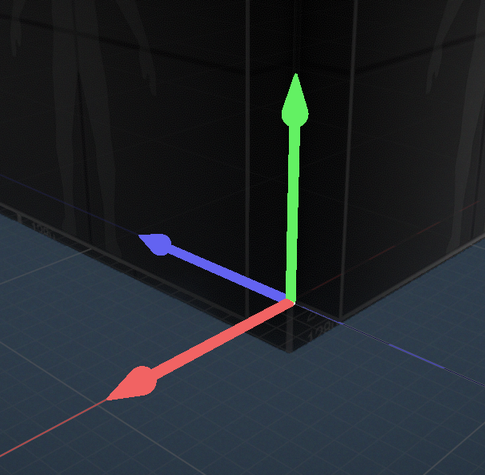

It started with drawing a basic axis mesh at first:

Without depth writing, you cannot properly draw that object, especially since it's a single mesh. However, because it's a gizmo, I didn't want to overwrite the scene depth buffer.

And I actually liked that idea, notably because you can do a lot of interesting and creative stuff that way (like goofy animations). Also no sorting issues !

https://projectneo.adobe.com/

I use a capsule in this case to make the area a bit bigger.

After some back and forth I traced the issue not to the tone curve but to my color grading LUT.

After digging to understand what was happening I had a hunch: what if it was a regression in the Mesa OpenGL driver ?

So I guess I should keep an eye on this bug next time I update my system, since my Mesa version is a bit old right now (still on 24.2.8).

Also started animating it, because it's fun. :)

I also refined the color/transparency of the manipulator a bit.

This would avoid the need to move/zoom on the object to offset it.

Been a while, so where are we with things in Ombre ?

I continued working on the manipulator a bit to handle scale transformation. I stopped there because I didn't want to think about rotation stuff yet. 😅

Also adjusted its style, wanted to make it cooler.

The goal is to have this entity control another one (here a mesh) to update its rotation.

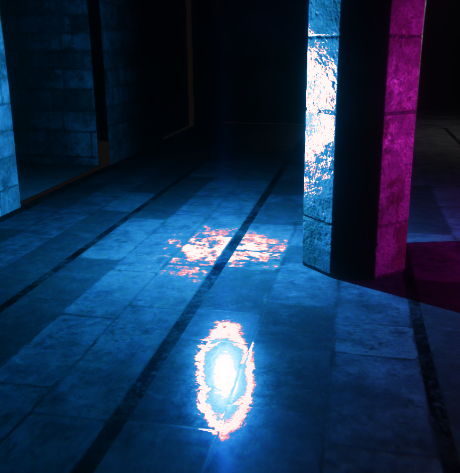

The moody light + fan was born ! :D

I moved on to another big task, which took less time than I expected: I finally implemented an asset browser in-engine, to be able to navigate through my project files.

At first I spent some time fiddling with ImGui to add a custom split separator that is resizable.

First test was with meshes, to be able to drop them into the scene:

On the Graphics Programming discord server there will be a showcase of projects, so I plan on participating this year.

So I started working on a little level, playing with lights and building materials. :D