One of the hot technologies that preceded one of the several “AI Winters” we’ve seen since software became a thing was called Expert Systems. And they stayed hot for well over a decade!

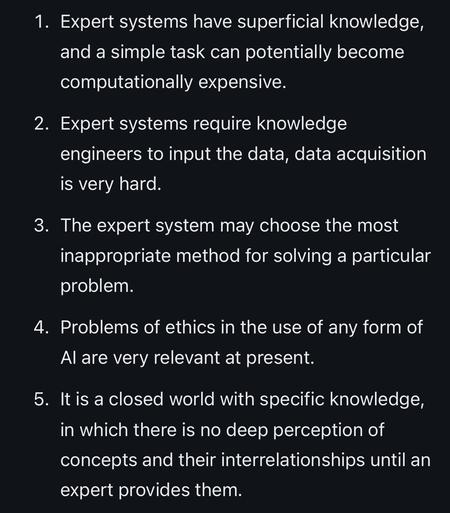

…but as the hype cooled and people weighed the costs against the benefits and looked at what such systems could really do over the long term, the whole house of cards came crashing down. Below is the summary the Wikipedia page gives on why they failed. Look familiar?