@jasongorman The coding part of programming is generally the easiest part of the work, and agreeing on the problem to be solved and what good looks like the hardest.

I started out programming at the microcode and machine code level. Ever since, it has just been further and further abstraction. I don't think the next stage will be that different.

I remember the hype around 4GL and the imminent demise of programmers. Didn't happen then and it will not happen this time. Programmers will just evolve and move higher up the stack again.

@jasongorman @gruntfutuk The problem is going to be when the models output code you yourself can’t understand.

It could be a decent tool in the hands of someone who is well educated.

It may seem like a good tool to those who aren’t, but they won’t be able to tell the difference. That way lies subtle, non-obvious bugs compounding faster than they can be fixed.

And it doesn’t seem like we’ll be able to train a model not to hallucinate, or to choose appropriate abstractions.

"It's more like 65 years and counting. Folks said this about COBOL and FORTRAN"

It was more along the lines of "There aren't enough programmers to write all the software that needs to be written, so we need to make it so scientists (FORTRAN) and managers (COBOL) can write programs."

Also, there were dozens of machine languages back then, and having two languages that could run on all of them was a huge win.

I can imagine a lot more people were saying this when the majority of coders were women.

This sounds like an argument for Agile methods. I don't think inserting AI in the process resolves the 'issues' with Agile development: lay people can't adequately describe a starting point, they're "too busy" to stay involved in the process, and they don't want to start using a new system until it's feature complete (which limits useful feedback).

And I'm agreeing with the original statement: "we won't need programmers, just people who can describe to a computer precisely what they want it to do". Working from vague to precise either takes a lot of trial and error or a detailed understanding of the development system. Business-oriented subject matter experts will either not be suited to that work or they'll be too busy producing reports and analysis for upper management.

I would say that "people who can describe to a computer precisely what they want it to do" are coders. So, yes, we'll still need coders.

@mathiasverraes @AdamDavis @jasongorman that "LLM-generated throwaway app" might work for simple tasks, but not for important things like pensions, passport applications, e-commerce, banking, where a high degree of correctness is required. Surely?

Or are you suggesting that people will accept approximations of bank balances, pension pots, purchases, etc.?

🤔

@matthewskelton @mathiasverraes @AdamDavis I did a short gig once for a logistics firm. They'd paid two programmers a fixed price to develop a traffic management system for a client contract, and it had gone badly. Their monthly invoices were about 5% out, which was about £25K a month.

The manager I dealt with actually said to me that "as long as it was 90%+ accurate, that was fine."

They hadn't realised their client had developed a reconciliation system in Oracle to check the invoices.
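To make the stakes concrete: if 5% out was about £25K, the monthly invoicing was roughly £500K, so a "90%+ accurate" threshold would tolerate errors of up to about £50K a month. The post doesn't say how the client's Oracle reconciliation worked, so the following is only a minimal sketch of the general idea, assuming the checker can recompute each expected amount independently. The `reconcile` function, the invoice IDs, and all figures are hypothetical.

```python
from decimal import Decimal


def reconcile(invoiced, expected, tolerance=Decimal("0.00")):
    """Compare billed amounts against independently computed ones.

    Both arguments map invoice id -> Decimal amount (hypothetical shape).
    Returns a list of (invoice_id, billed, due, difference) for every
    invoice whose absolute difference exceeds the tolerance.
    """
    mismatches = []
    # Check ids from both sides so missing or extra invoices are caught too.
    for invoice_id in sorted(invoiced.keys() | expected.keys()):
        billed = invoiced.get(invoice_id, Decimal("0"))
        due = expected.get(invoice_id, Decimal("0"))
        diff = billed - due
        if abs(diff) > tolerance:
            mismatches.append((invoice_id, billed, due, diff))
    return mismatches


# Made-up figures, purely for illustration.
invoiced = {
    "INV-001": Decimal("1050.00"),  # overbilled
    "INV-002": Decimal("2000.00"),  # correct
    "INV-003": Decimal("310.00"),   # billed, but nothing was due
}
expected = {
    "INV-001": Decimal("1000.00"),
    "INV-002": Decimal("2000.00"),
    "INV-004": Decimal("75.00"),    # due, but never billed
}

mismatches = reconcile(invoiced, expected)
total_error = sum(abs(row[3]) for row in mismatches)

for invoice_id, billed, due, diff in mismatches:
    print(f"{invoice_id}: billed {billed}, due {due}, difference {diff}")
print(f"Total absolute error: {total_error}")
```

A check like this is cheap for the paying side to run every month, which is why "90% is fine" tends not to survive contact with the client.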

The user described a fluid set of criteria, but there's never a notion of accuracy or completeness. Payroll etc is of course still handled by a more traditional system.

@mathiasverraes @AdamDavis @jasongorman that makes sense to me: ad-hoc tasks that are not (yet) a consistent ongoing need.

But this also suggests to me a kind of backwards step: people just using GenAI codegen like a human. It's effectively not repeatable, so it feels like a step backwards towards unspecified, random needs from business people, without the clarity that comes from actually industrialising the activities.

🤷🏼♀️

Step 1: Random unspecified needs. Step 2: Traditional SDLC. Step 3: Profit!

Step 2 is where IT forces clarity, and business would rather skip it (and has always tried to skip it). Programmers assume AI will make step 2 faster. I'm speculating there could be a category of problems that benefits from not having a step 2.

@mathiasverraes @AdamDavis @jasongorman yep... And actually GenAI may provide a useful option where the business can skip the actual thinking... If they need something truly one-off, but with the risk that the business is creating a mountain of unfathomable dross code that slows things down later.

GenAI is going to create jobs, not take jobs in IT.

2030: "Dross-clearing Engineer wanted to clear up GenAI code" 🧹

@krisbuytaert @mathiasverraes @AdamDavis @jasongorman hmm, well we're not training many juniors in Assembly or vanilla C language any more, but instead in higher level languages.

I expect to see some formalisation of how to constrain an LLM to generate useful code, so maybe that becomes the new "coding" ? Basically, prompt engineering v2 or v3?

@jasongorman @krisbuytaert @mathiasverraes @AdamDavis sure but going back to Mathias's example, some constraints might be "good enough" even with non-determinism.

And maybe that non-deterministic output is sometimes a feature, not a bug? Or at least marketed as such. "Personalised" "Unique" etc. 🤷🏼♀️

@mathiasverraes @matthewskelton @AdamDavis @jasongorman

I might be mistaken, but it started as a beautiful joke.

@mathiasverraes @jasongorman

18 months? That sounds like 'waterfall' to me.

Continuous Delivery is a bit of a surprise at first, but it's worth the effort in quality, as well as speed.

Humans can do that, in fact they have to in the normal development process. LLMs are incredibly bad at it.

You'd be in the "arguing with the LLM about how many 'r's are in the word strawberry" scenario, except with your company's financial reporting.

Although even with humans, developing incrementally from *clear but minimal* requirements is much less likely to be an expensive failure than working from *vague* requirements.

@mathiasverraes @jasongorman that’s a false dichotomy. There is a world of variance between these, such as frequently (daily? hourly?) releasing a new _precise_ definition that can elicit feedback.

‘Be vague and iterate’ is a counter to a problem that largely no longer exists, and may introduce more risk than it eliminates.

Not to mention the planet-burning thing. Or the IP theft and plagiarism thing. Or the systematic bias thing. Or the privacy violation thing. Or the…

@jasongorman Oh well, no problem, then.

COBOL – the Business Oriented Language – is so simple that managers can do that.

Or so we were told when it was introduced.

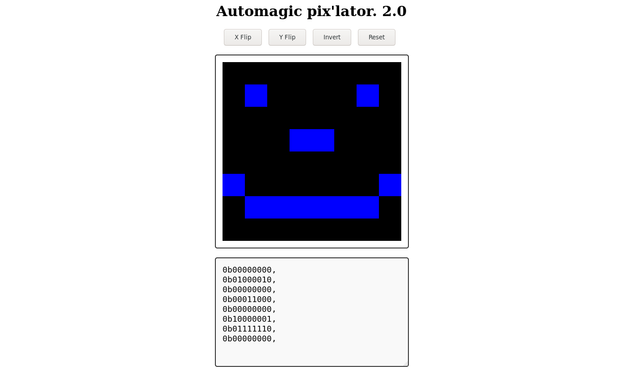

@jasongorman Yesterday I made this JavaScript tool. Although ChatGPT can't code worth **** I prompted it along, correcting errors myself, and gradually built this up.

There were two advantages to going to an AI for programming assist on this:

a) It was there. Where are all the f******* humans all the time? There are supposed to be billions of them.

b) ChatGPT NEVER ONCE said "that's a stupid question, go read the manual" or "This question is below me, go ask someone else." Why are the few people that do exist on the planet such A**holes sometimes?

During *my* existence, web programming has changed a lot: divs, CSS, and even new versions of JavaScript can be like "you can do WHAT?", along with the huge selection of functions now available.

I have existed through a lot of programming languages and it's often nice to be able to ask "How do I write a FOR loop in *this* language?"

ChatGPT was able to give me (though intermittently wrong or bugged) guilt-free assistance.

So don't be a jerk to someone asking for help and we won't need AI.