Today was the #PapersInSystems event on the paper:

How to Perform Hazard Analysis on a "System-of-Systems" by Nancy Leveson

Thanks to @adrianco I learned a lot about #STPA & #STAMP (but there is still much more to learn)

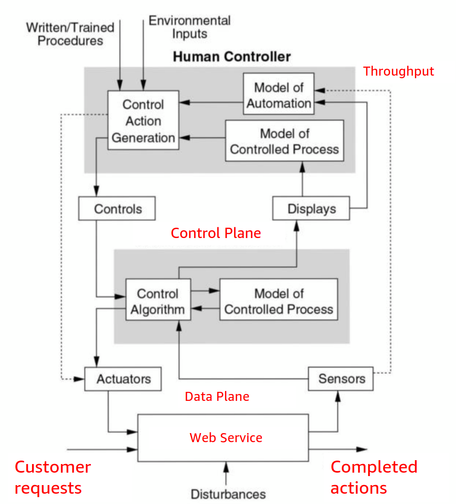

And I got some more ideas about how it fits with, or can be applied to, #cybersecurity.

The following is my (probably flawed) understanding.

Let's dive in:

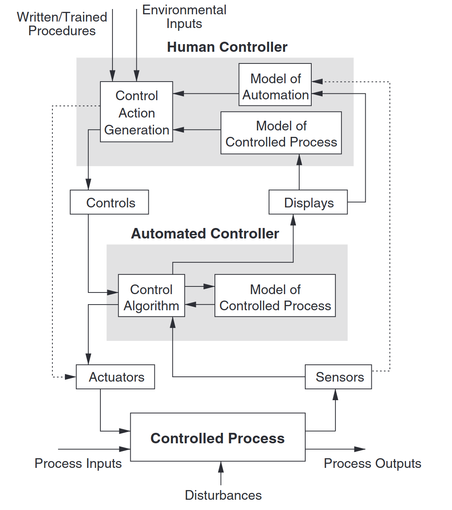

In STAMP (System-Theoretic Accident Model and Processes), safety is treated as a dynamic control problem rather than a failure prevention problem.

This leads to the following generic abstraction (model) of a safety-relevant system as a socio-technical system:

Source: Engineering a Safer World: Systems Thinking Applied to Safety, by Nancy G. Leveson
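To make the "control problem" framing a bit more concrete for myself, here is a minimal Python sketch. It is my own toy illustration and not taken from Leveson's book or paper: the `Tank`, `Controller`, and `run` names, the fill/hold actions, and all numbers are assumptions. It tries to show a controller acting on its process model (its belief about the system) and how a hazard can arise when feedback is missing, even though no component "fails".

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Tank:
    """Hypothetical controlled process: hazardous once level exceeds limit."""
    level: float = 0.0
    limit: float = 10.0

    def apply(self, action: str) -> None:
        # The tank does exactly what it is told; it never malfunctions.
        if action == "fill":
            self.level += 1.0

    def hazardous(self) -> bool:
        return self.level > self.limit


@dataclass
class Controller:
    """Hypothetical controller that decides from its process model."""
    believed_level: float = 0.0  # process model: what the controller thinks is true
    target: float = 8.0

    def update_model(self, measured_level: Optional[float]) -> None:
        # Feedback path; None stands for missing feedback.
        if measured_level is not None:
            self.believed_level = measured_level

    def decide(self) -> str:
        # Control action is chosen from the belief, not from the real state.
        return "fill" if self.believed_level < self.target else "hold"


def run(steps: int, feedback_works: bool) -> bool:
    """Run the control loop and report whether the tank ends up hazardous."""
    tank, ctrl = Tank(), Controller()
    for _ in range(steps):
        tank.apply(ctrl.decide())
        ctrl.update_model(tank.level if feedback_works else None)
    return tank.hazardous()


if __name__ == "__main__":
    print("with feedback:", run(20, feedback_works=True))
    print("without feedback:", run(20, feedback_works=False))
```

In this sketch both the tank and the controller behave exactly as designed in both runs. The only difference is the feedback path, which is why I find it a useful way to think about safety as control rather than as failure prevention.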