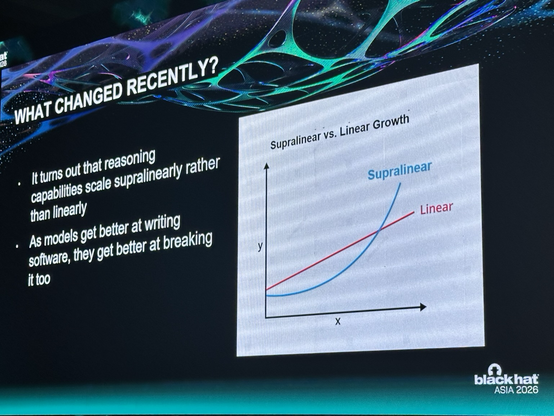

Day two of Black Hat and I got a chance to see my friend Ariel Herbert-Voss do the keynote. It opened a floodgate of thoughts.

Oh and yay, GPT-5.5 is here and it feels like we’re entering another mad period of growth for frontier models and security research.

The big thing I’m seeing is that we need less scaffolding around these models. Give them code, context, a goal and some tools, and they are getting much better at cracking on.

That matters for bug hunting. A lot.

But let’s not pretend the machines have solved vuln research. They haven’t.

The biggest gains are still at the shallow end.

Low-severity bugs, obvious logic mistakes, unsafe patterns, missing checks, boring-but-real issues: that’s where models are starting to clean up. If the bug class is well documented and the code is clear, they move quickly.

The low-hanging fruit is getting hoovered up at pace. The easy stuff is becoming cheaper to find. Well, sorta cheaper.

We’re also seeing decent gains on modest bugs. Not deep chains. Not always novel research. But useful findings where the model can read, reason, trace, and join enough dots to help.

Where it still gets spicy is state.

Models can talk about state all day, but they don’t really feel it. They still struggle with temporal bugs, race conditions, lifecycle weirdness, multi-step flows, and those “only happens after you do these seven things in this exact order” bugs.
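To make that concrete, here is a toy sketch of an order-dependent bug. Everything in it (`SessionStore`, the method names, the token value) is made up for illustration and not taken from any real codebase. Each method looks reasonable on its own; the problem only shows up after a specific sequence of calls.

```python
class SessionStore:
    """Toy session store with an order-dependent flaw (hypothetical example)."""

    def __init__(self):
        self.sessions = {}         # token -> username
        self.admin_cache = set()   # tokens previously verified as admin

    def login(self, token, user):
        self.sessions[token] = user

    def elevate(self, token):
        # Only a logged-in "root" user may be marked as admin.
        if self.sessions.get(token) == "root":
            self.admin_cache.add(token)

    def logout(self, token):
        # Flaw: the session is dropped, but the cached admin flag is left behind.
        self.sessions.pop(token, None)

    def is_admin(self, token):
        return token in self.sessions and token in self.admin_cache


def guest_inherits_admin():
    """Replay the exact ordering that triggers the stale-cache bug."""
    store = SessionStore()
    store.login("t1", "root")
    store.elevate("t1")
    store.logout("t1")
    store.login("t1", "guest")   # same token value reused by another user
    return store.is_admin("t1")


def guest_alone_is_admin():
    """Control case: a guest who logs in on a fresh store."""
    store = SessionStore()
    store.login("t1", "guest")
    return store.is_admin("t1")
```

Reading `logout` or `is_admin` in isolation, nothing screams "bug". You only see it when you hold the whole lifecycle in your head, which is exactly the part that stays hard.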

Spotting something dodgy is not the same as proving it is exploitable.

On the exploit side, validation, exploitability and reliability are improving, but more slowly. This is one of the big areas John and I have been working through with RAPTOR: getting away from “looks interesting, mate” towards “this is real, reachable, and repeatable”.
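As a rough illustration of the "repeatable" part, here is a minimal sketch of a repeatability gate. This is not how RAPTOR works; the names (`measure_repeatability`, `classify`, the 0.9 threshold, the 20 attempts) are assumptions I picked for the example. The idea is simply to run a reproducer many times and label the finding by its success rate instead of trusting a single lucky run.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Verdict:
    attempts: int
    successes: int

    @property
    def rate(self) -> float:
        # Fraction of attempts in which the reproducer reported success.
        return self.successes / self.attempts if self.attempts else 0.0


def measure_repeatability(reproducer: Callable[[], bool], attempts: int = 20) -> Verdict:
    """Run a reproducer several times and count how often it succeeds.

    `reproducer` is a hypothetical callable that returns True when the
    issue triggered on that run.
    """
    successes = 0
    for _ in range(attempts):
        try:
            if reproducer():
                successes += 1
        except Exception:
            # A reproducer that blows up counts as a failed attempt.
            pass
    return Verdict(attempts=attempts, successes=successes)


def classify(verdict: Verdict, threshold: float = 0.9) -> str:
    """Label a finding by how reliably it reproduced (threshold is arbitrary)."""
    if verdict.successes == 0:
        return "unconfirmed"
    return "repeatable" if verdict.rate >= threshold else "flaky"
```

A finding that lands in "flaky" is still interesting, but it tells you the targeting needs more work before anyone should call it reliable.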

Because exploit reliability is still hard graft. Targeting is still fragile. You still need iterations.

Oh and the human in the loop is still vital.

We all thought fuzzing would solve the bug problem. It didn’t. It changed the economics of bug discovery, but we still needed harnesses, triage, context, exploit dev, judgement, and all the boring engineering bits that make the work useful.

I think frontier models are having a similar moment.

The best results won’t come from throwing a giant model at a repo and hoping it finds magic. They’ll come from layered systems: frontier models for reasoning and code understanding; smaller focused models trained on private data, internal vuln history, remediation patterns, and product context; validation loops; and humans who know when the model is chatting crap.

As models get better at writing code, they get better at breaking it. Capability doesn’t scale politely. It compounds.

Better tool use helps validation.

Better context helps reachability.

Better reasoning helps exploit chains.

But it still needs structure.

It still needs evidence.

It still needs humans who know what good looks like.

The shallow bugs are already getting compressed. The interesting bit is what comes next.