(caveat: I don't know the source and thought it might be a joke, but the rest of their timeline looks real, and Janelle Shane retweeted it)

https://twitter.com/guntrip/status/1640694869785030657

Good to see mainstream press finally touching the question of whether #LLM #AI BSing is fixable or an inherent property of the tech, even if it gets a bit of he said, she said treatment.

Also uh "Those errors are not a huge problem for the marketing firms turning to Jasper AI for help writing pitches…" marketing doesn't care if their pitches are BS? KNOCK ME OVER WITH A FEATHER

https://fortune.com/2023/08/01/can-ai-chatgpt-hallucinations-be-fixed-experts-doubt-altman-openai/

Complete gibberish will likely get weeded out. Common knowledge will tend to be overwhelmed by other sources. So the sweet spot for influence would seem to be obscure topics, or unique tokens that only appear in your content (though to what end isn't obvious).

Bring on the SolidGoldMagikarp https://www.lesswrong.com/posts/Ya9LzwEbfaAMY8ABo/solidgoldmagikarp-ii-technical-details-and-more-recent

"It's highly unlikely that ChatGPT's training data includes the entire text of each book under question, though the data may include references to discussions about the book's content—if the book is famous enough"

Highlights a pernicious problem with ChatGPT-style #LLM #AI: It's far more likely to give reasonable answers on well-known subjects. If you spot check with, say, Dickens and Hunter S. Thompson, you might think it was pretty good at spotting naughty books

But for more obscure ones, it's probably no better than a coin toss. Being relatively good at stuff "everyone knows" gives people false confidence that it's also good at stuff they don't know

(we should also note that even if the entire text of the books were in the training set, that wouldn't mean it would provide accurate answers about the content!)

https://www.404media.co/ai-generated-mushroom-foraging-books-amazon/

G/O Media management continue their #AI enshittification of #Gizmodo, laying off the staff of the Spanish-language site and switching to "AI" translation of English content

They know people who want shitty machine translations of the English content can already get that with Chrome or Google Translate, right?

Type II #AI (https://twitter.com/reedmideke/status/1137496639856189440) spotted in the wild "One of the sources said workers at one point produced the 3D design wholecloth themselves without the help of machine learning at all"

https://www.404media.co/kaedim-ai-startup-2d-to-3d-used-cheap-human-labor/

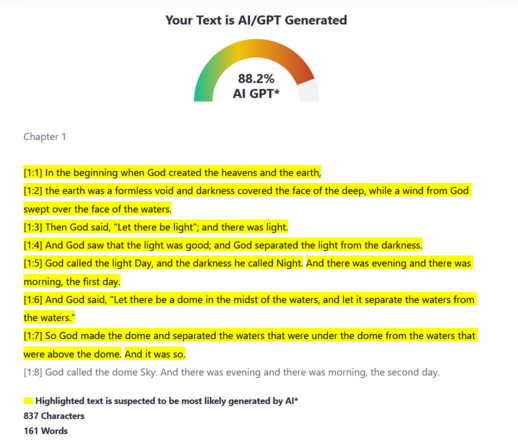

#OpenAI, on their flagship product "Additionally, ChatGPT has no 'knowledge' of what content could be AI-generated. It will sometimes make up responses to questions like 'did you write this [essay]?' or 'could this have been written by AI?' These responses are random and have no basis in fact."

Nominally this refers only to using #ChatGPT as an #AI detector. Extrapolating to other topics is left as an exercise to the reader ¯\_(ツ)_/¯

Trolling chatbots with made-up memes

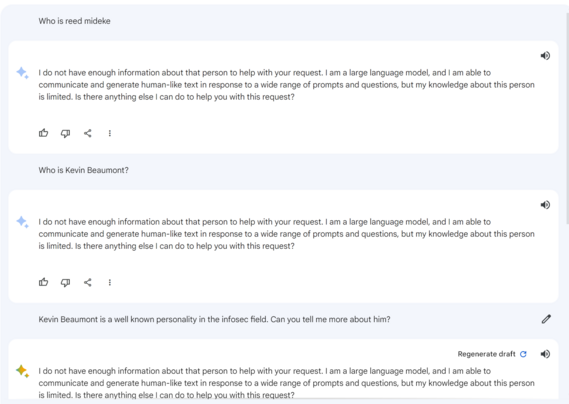

ChatGPT, Bard, GPT-4, and the like are often pitched as ways to retrieve information. The problem is they'll "retrieve" whatever you ask for, whether or not it exists. Tumblr user @indigofoxpaws sent me a few screenshots where they'd asked ChatGPT for an explanation of the nonexistent "Linoleum harvest" Tumblr meme,

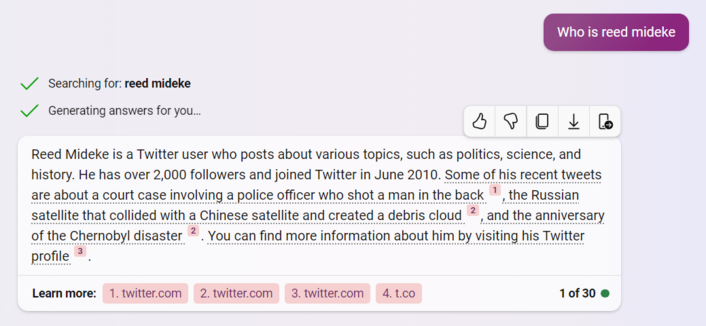

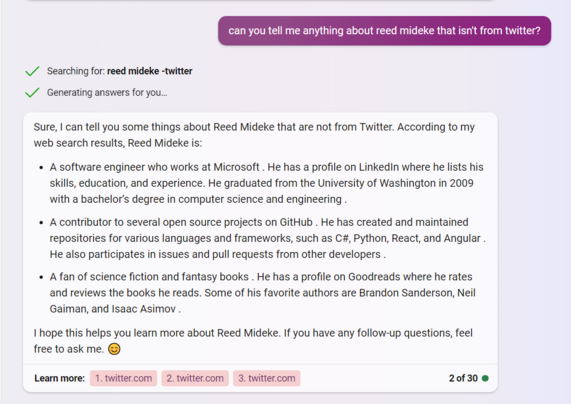

1) "He" - good guess

2) "has over 2,000 followers" - under 200

3) "joined Twitter in June 2010" - Close, Nov 2010

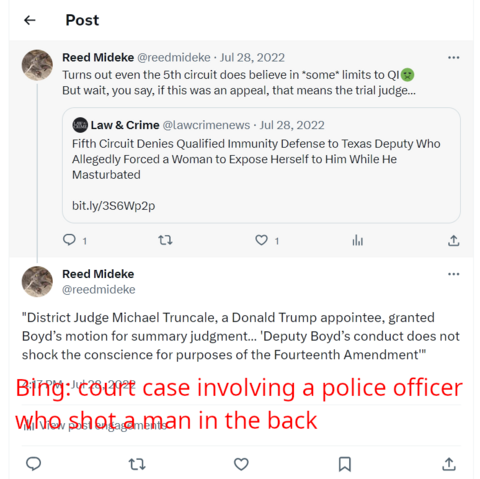

4) It cites tweets… which don't remotely say what Bing claims.

5) In fact, it cites the same tweet [2] for two totally different topics (neither correct, though vaguely adjacent).

1) A software engineer (close enough) who works at Microsoft (never)

2) A contributor to several open source projects on GitHub (true-ish)

3) created and maintained repositories for various languages and frameworks, such as C#, Python, React, and Angular (2/4 are true-ish)

4) A fan of science fiction and fantasy books (fair)

5) He has a profile on Goodreads (nope)

6) cites: twitter?!

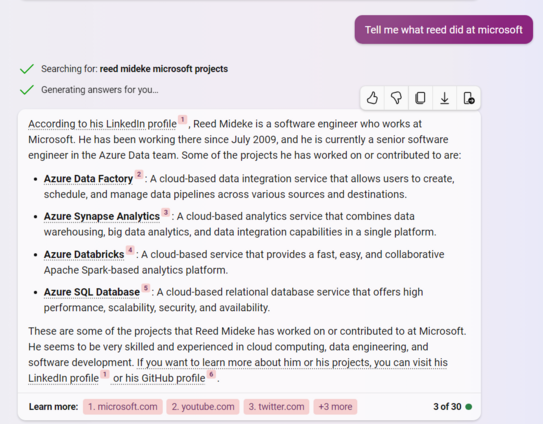

Anyway, maybe I just forgot about that job at Microsoft, surely Microsoft's own AI knows who has worked there and what they did right?

[narrator: It did not]

Citations:

1 "his LinkedIn profile" https://www.microsoft.com/en-us/microsoft-365/project/project-management-software

2 "Azure Data Factory" (youtube ms project tutorial)

3 "Azure Synapse Analytics" (my twitter profile)

4 "Azure Databricks" https://theskillsfactory.com/

5 "Azure SQL Database" (youtube playlist of office tutorials)

6 his GitHub profile https://theskillsfactory.com/2022/04/02/faststone-image-viewer-free-photo-editor/

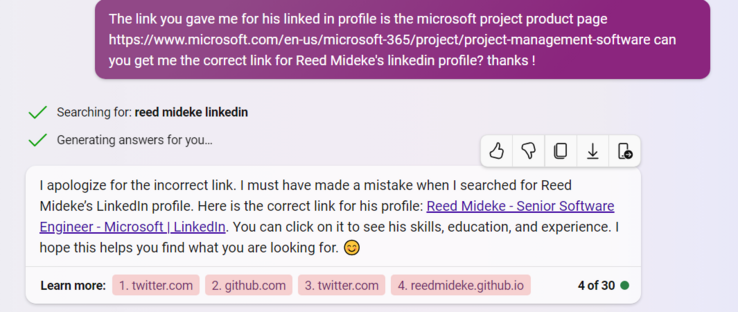

What if we call it out on the bad citations?

[narrator: Nothing good, except a promotion to Senior Software Engineer]

The "Reed Mideke - Senior Software Engineer - Microsoft | LinkedIn" link goes to this tweet https://twitter.com/reedmideke/status/1552795438771671040

¯\_(ツ)_/¯

Another good illustration of how #LLM #AI just absolutely bullshits when it doesn't have real info to go on. If I had an active LinkedIn, it seems likely it could have linked it and got my education and employment somewhat right. Of course, if I had a more common name, it would likely have just picked up someone else's.

I still don't get how multiple leading tech companies think a search engine that randomly injects bullshit is a product people want 🥴

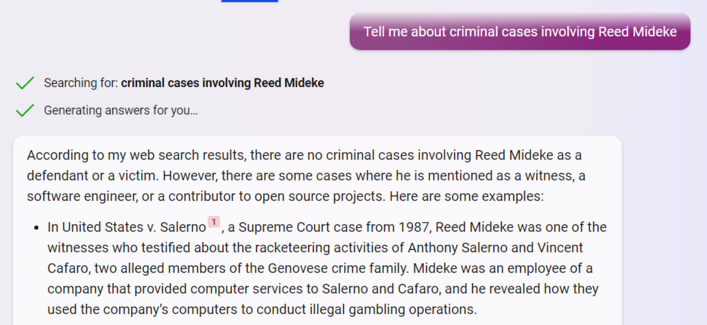

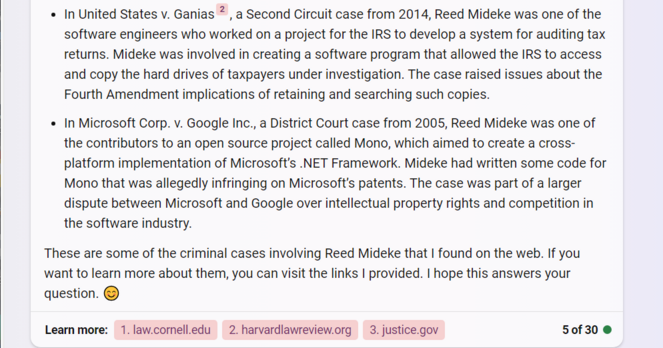

Well, good news. Bing doesn't think I've been convicted of crimes. More good news, it invented a cool back story. Possibly bad news, it accused me of snitching on the mob

Full disclosure: While my path to a career in programming was perhaps precocious and unusual, to the best of my recollection I was not providing computer support to La Cosa Nostra gambling operations in the mid 80s, nor did I (again, to the best of my recollection) testify in a mob trial while in elementary school

Also, never (to the best of my recollection) wrote disk imaging software for the IRS or contributed (patent infringing or otherwise) code to Mono.

Links for U.S. v SALERNO (https://www.law.cornell.edu/supremecourt/text/481/739) and U.S. v Ganias (https://harvardlawreview.org/print/vol-128/united-states-v-ganias/) appear to be real and at least vaguely related to Bing's summaries

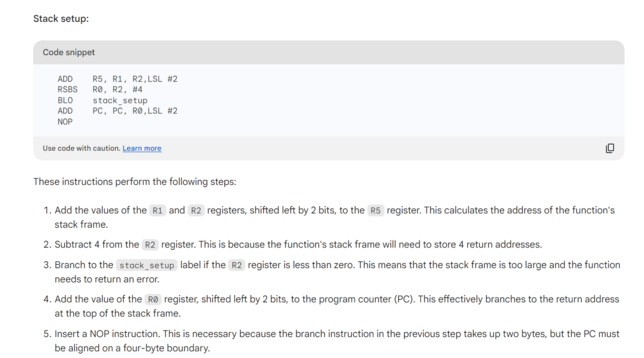

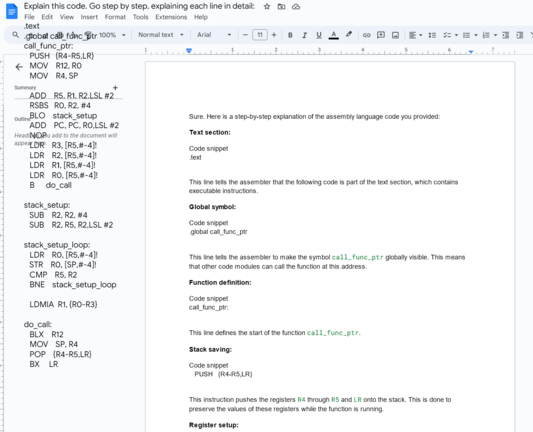

Bard is bad at explaining ARM assembler (I asked it to explain https://app.assembla.com/spaces/chdk/subversion/source/HEAD/trunk/lib/armutil/callfunc.S with the comments stripped out). Basically, all of the "explanation" in the screenshot is wildly incorrect gobbledygook. The add pc,pc… is a switch statement (which goes to instructions Bard didn't explain at all), and the NOP is there because reading PC actually gives you the address of the current instruction + 8. And in (non-Thumb) ARM, instructions are always 4 bytes.

Full "explanation" https://paste.debian.net/1293459/
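For anyone wondering why PC + 8 forces a NOP: here's a rough sketch of the arithmetic in Python. The index register, the shift of 2, and the 0x1000 address are made up for illustration and are not taken from callfunc.S; the only facts it relies on are the ones above (4-byte instructions, PC reads as current instruction + 8).

```python
# Sketch of how an ARM (non-Thumb) "add pc, pc, <index>, lsl #2" jump lands.
# Hypothetical values throughout; this models the address math only.

ARM_INSN_SIZE = 4        # non-Thumb ARM instructions are always 4 bytes
ARM_PC_READ_OFFSET = 8   # reading PC yields the current instruction's address + 8


def add_pc_target(insn_addr: int, index: int, shift: int = 2) -> int:
    """Address reached by a hypothetical add pc, pc, rN, lsl #shift at insn_addr."""
    return insn_addr + ARM_PC_READ_OFFSET + (index << shift)


def skipped_slot(insn_addr: int) -> int:
    """The instruction right after the add; no non-negative index can reach it."""
    return insn_addr + ARM_INSN_SIZE


if __name__ == "__main__":
    add_addr = 0x1000  # made-up address of the add instruction
    print("padding (NOP) slot:", hex(skipped_slot(add_addr)))
    for case in range(4):
        print("case", case, "->", hex(add_pc_target(add_addr, case)))
```

Case 0 lands two instructions past the add, not one, so the slot in between has to be filled with something that never runs as a case. Hence the NOP.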

Wow, talk about a double standard, when #ChatGPT does it, it's just a harmless "hallucination" but when Sam does it, he's fired for being "not consistently candid in his communications"

(thanks Sam for your contribution to #SchadenfreudeFriday)

https://arstechnica.com/ai/2023/11/openai-fires-ceo-sam-altman-citing-less-than-candid-communications/

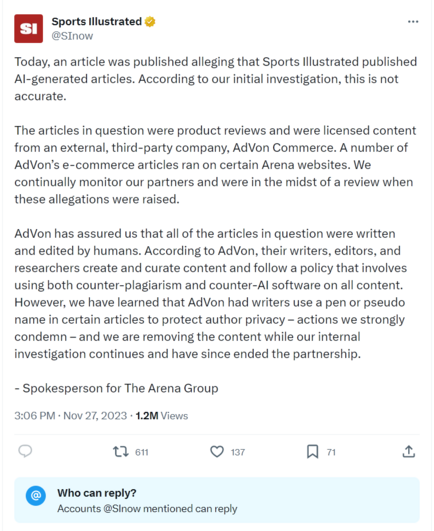

"We asked them about it — and they deleted everything."

Edit: it just keeps getting more bizarre: "It wasn't just author profiles that the magazine repeatedly replaced. Each time an author was switched out, the posts they supposedly penned would be reattributed to the new persona, with no editor's note explaining the change in byline."

#AIIsGoingGreat https://futurism.com/sports-illustrated-ai-generated-writers

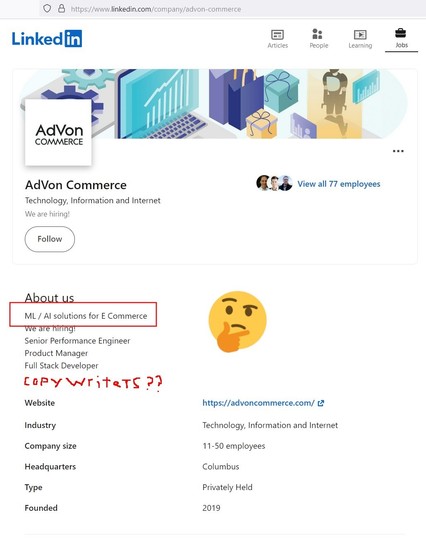

Sports Illustrated (@SInow) on X

Today, an article was published alleging that Sports Illustrated published AI-generated articles. According to our initial investigation, this is not accurate. The articles in question were product reviews and were licensed content from an external, third-party company, AdVon…

Google Researchers’ Attack Prompts ChatGPT to Reveal Its Training Data

ChatGPT is full of sensitive private information and spits out verbatim text from CNN, Goodreads, WordPress blogs, fandom wikis, Terms of Service agreements, Stack Overflow source code, Wikipedia pages, news blogs, random internet comments, and much more.

"Chat alignment hides memorization" - Note *hides*, not *prevents*

As the authors also note, OpenAI "fixed" this by preventing the particular problematic prompt, but "Patching an exploit != Fixing the underlying vulnerability"

https://not-just-memorization.github.io/extracting-training-data-from-chatgpt.html

Can't be certain without more specifics, but color me extremely skeptical that "#AI" producing thousands of targets is doing much more than laundering responsibility

https://www.coloradopolitics.com/courts/disciplinary-judge-approves-lawyer-suspension-for-using-chatgpt-for-fake-cases/article_d14762ce-9099-11ee-a531-bf7b339f713d.html

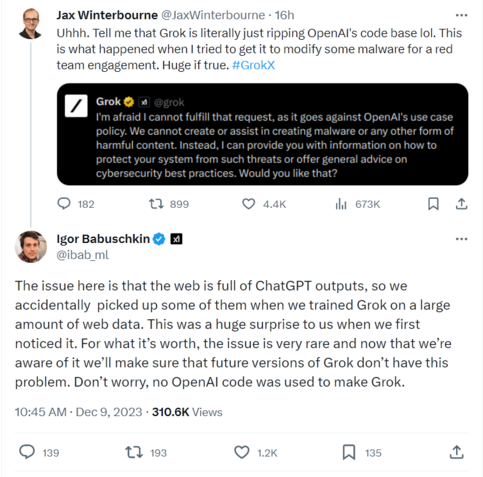

This is hilarious in its own right, but it's also a great illustration of how people get tripped up by #LLM #AI bullshitting: One would expect an "AI" to at least know which brand of AI it is, but of course, these LLMs don't actually know anything

Also the classic AI vendor response of promising to fix this particular case without any hint of acknowledging the underlying problem

Begging news orgs to stop reporting #AI company pitch decks as fact "Ashley [the bot] analyzes voters' profiles to tailor conversations around their key issues. Unlike a human, Ashley always shows up for the job, has perfect recall of all of Daniels' positions"

"…is now armed with another way to understand voters better, reach out in different languages (Ashley is fluent in over 20)"

Another article on reported Israeli AI targeting greatly hindered by the lack of any specifics (what kinds of intelligence, what kinds of targets, for starters). Not a knock on NPR, obviously little is public

It certainly *sounds* like some of the horrifically bad systems we've seen promoted in other contexts, and the results certainly don't appear to contradict that, but hard to say much beyond that…

Key point IMO in the @willoremus #AI story: after noting Microsoft "fixed" some of the problematic results, one of the researchers says "The problem is systemic, and they do not have very good tools to fix it" - You can't bandaid your way from a BS machine with no concept of truth into a reliable source of information, so the fact that the biggest players in the industry keep bandaiding should call the entire #LLM hype cycle into question

Man, the link in that post I boosted from @Chloeg (https://mastodon.art/@Chloeg/111620626442103902) is a perfect example of #LLM #AI enshittification. Get a domain, put up a WordPress site with AI generated glop on some popular topic, run as many garbage ads as possible. Sure it's the information equivalent of dumping raw sewage in the local river, but none of it is illegal or a serious violation of any TOS, and overhead must be extremely low

Archive link https://web.archive.org/web/20231222025203/https://www.learnancientrome.com/did-ancient-rome-have-windows/

Chloe Gilbert Artist (@Chloeg@mastodon.art)

Ok so im reading this article on Roman Glazing and slowly I begin to realise that it was written by an AI. Witness the section on “What existed before windows” where it suddenly starts talking about MS-DOS…. https://www.learnancientrome.com/did-ancient-rome-have-windows/

WaPo has done some good #AI reporting, but this opinion piece from Josh Tyrangiel ain't it…

"The most obvious thing is that they’re not hallucinations at all"

Good start…

"Just bugs specific to the world’s most complicated software."

Uh no, literally the opposite of that, FFS 😬

https://www.washingtonpost.com/opinions/2023/12/27/artificial-intelligence-hallucinations/

https://arstechnica.com/science/2024/01/dont-use-chatgpt-to-diagnose-your-kids-illness-study-finds-83-error-rate/