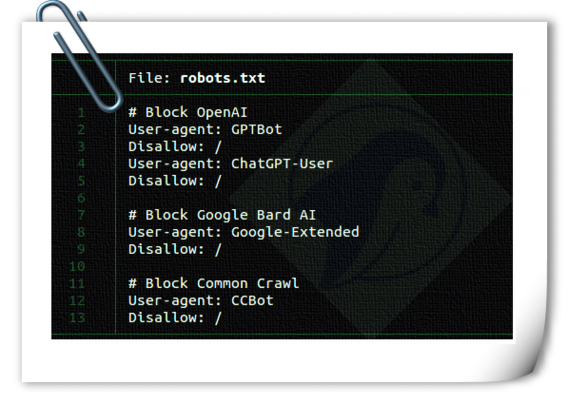

Add OpenAI's, Google's, and Common Crawl's crawlers to your robots.txt to block generative AI from stealing your content and profiting from it. See https://www.cyberciti.biz/web-developer/block-openai-bard-bing-ai-crawler-bots-using-robots-txt-file/ for more info, including a firewall setup to block those and other AI bots, too. #AI
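The robots.txt entries in question look roughly like the sketch below. It assumes the publicly documented crawler tokens GPTBot and ChatGPT-User (OpenAI), Google-Extended (Google) and CCBot (Common Crawl); the linked article's exact list may differ. Python's standard `urllib.robotparser` shows how a *compliant* crawler would read the file. Nothing forces a crawler to run this check at all.

```python
from urllib.robotparser import RobotFileParser

# Example robots.txt (assumed, not copied from the post). One group may list
# several user agents; the tokens are the publicly documented AI crawler names.
ROBOTS_TXT = """\
User-agent: GPTBot
User-agent: ChatGPT-User
User-agent: Google-Extended
User-agent: CCBot
Disallow: /

User-agent: *
Allow: /
"""


def allowed(user_agent: str, url: str) -> bool:
    """What a well-behaved crawler would conclude from ROBOTS_TXT.

    Purely advisory: a crawler that never fetches or honours robots.txt
    is not affected by any of this.
    """
    parser = RobotFileParser()
    parser.parse(ROBOTS_TXT.splitlines())
    return parser.can_fetch(user_agent, url)
```

Here `allowed("GPTBot", "https://example.com/post/1")` is `False`, while `allowed("Googlebot", "https://example.com/post/1")` falls through to the `*` group and is `True`.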

@nixCraft And if you control the server, look into installing a tool such as fail2ban, which bans an IP at the firewall once it spots unwanted activity in your logs. I've seen too many bots that ignore the robots.txt file.
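fail2ban matches log lines against a filter regex and bans an IP once it trips `maxretry` matches within `findtime` seconds (for `bantime` seconds). The Python below is not fail2ban; it is a toy version of just that counting step, assuming combined-format access logs and a hypothetical `BOT_UA` pattern. It is useful against bots that skip robots.txt but still announce themselves in the user agent.

```python
import re
from collections import defaultdict, deque

# Hypothetical bot pattern; adjust to the user agents you actually want banned.
BOT_UA = re.compile(r"(GPTBot|CCBot|ChatGPT-User)", re.IGNORECASE)

# Simplified "combined" log line: client IP first, quoted user agent last.
LOG_LINE = re.compile(r'^(?P<ip>\S+) .*"(?P<ua>[^"]*)"\s*$')


def find_bans(events, maxretry=3, findtime=60):
    """Return IPs whose bot-UA requests reach `maxretry` within `findtime` s.

    `events` is an iterable of (unix_timestamp, log_line) pairs in time order.
    Only the detection idea is modelled; no firewall rules are touched.
    """
    hits = defaultdict(deque)
    banned = set()
    for ts, line in events:
        m = LOG_LINE.match(line)
        if not m or not BOT_UA.search(m.group("ua")):
            continue  # unparseable line or not a bot we care about
        window = hits[m.group("ip")]
        window.append(ts)
        # Forget hits that have fallen outside the findtime window.
        while window and ts - window[0] > findtime:
            window.popleft()
        if len(window) >= maxretry:
            banned.add(m.group("ip"))
    return banned
```

A bot that requests three pages in twenty seconds is banned; one that spaces requests 100 seconds apart never accumulates enough hits inside the window.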

@nixCraft Or craft a site specifically designed to feed them just slightly false info...

@nixCraft Many of the links in your article are inaccessible thanks to Cloudflare's and Google's internet censorship...

Alternatively, you can add this to your nginx config:

```nginx
# Goes in a server or location block; "~" is a case-sensitive regex match.
if ($http_user_agent ~ "(Googlebot|bingbot|GPTBot|ChatGPT-User)/") { return 444; }
```

@jerry TIL "The non-standard code 444 closes a connection without sending a response header." Seems like the appropriate level of disregard. I like it: https://nginx.org/en/docs/http/ngx_http_rewrite_module.html#return
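To check which user-agent strings that nginx `if` actually catches, the same regex can be tried in Python. This sketch assumes nginx's PCRE and Python's `re` agree on a pattern this simple (plain alternation plus a literal `/`); `would_get_444` is a hypothetical helper. Two things stand out: `~` is case-sensitive (nginx's `~*` is the case-insensitive form), and Googlebot and bingbot are the ordinary search crawlers, so the rule also stops regular search-engine crawling, not just AI training.

```python
import re

# Same pattern as the nginx rule; a token must be followed by a literal "/".
PATTERN = re.compile(r"(Googlebot|bingbot|GPTBot|ChatGPT-User)/")
# Mirrors nginx's case-insensitive "~*" operator.
PATTERN_CI = re.compile(PATTERN.pattern, re.IGNORECASE)


def would_get_444(user_agent: str, case_insensitive: bool = False) -> bool:
    """True if the rule would close the connection for this User-Agent."""
    pattern = PATTERN_CI if case_insensitive else PATTERN
    return pattern.search(user_agent) is not None
```

A full GPTBot header such as `Mozilla/5.0 AppleWebKit/537.36 (KHTML, like Gecko; compatible; GPTBot/1.0; +https://openai.com/gptbot)` matches, while `gptbot/1.0` only matches with `case_insensitive=True`, and a bare `GPTBot` with no version slash never matches.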

@nixCraft Alternatively, you can feed the AI nonsense to confuse it.

@nixCraft The thing is, people call this "blocking". It isn't. There is absolutely no guarantee that specifying a restriction means it will be honoured.

It's like leaving your front door wide open but pinning up a notice that burglars should not enter the house, or specifying which drawers they should not rifle through.

If you want to be assured of a block, then BLOCK it. WAF it. Boot them out on their ear if they even come looking.
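As a minimal sketch of actually enforcing a block instead of asking nicely in robots.txt, here is a small Python WSGI middleware that answers matching user agents with 403 before the wrapped app ever runs. `BLOCKED_UA`, `block_ai_bots` and the demo `hello` app are hypothetical names. A real WAF or firewall would also block by IP range, since a User-Agent header is trivial to spoof.

```python
import re
from typing import Callable, Iterable

# Hypothetical deny-list; swap in whichever user agents you want to refuse.
BLOCKED_UA = re.compile(r"(GPTBot|ChatGPT-User|CCBot)", re.IGNORECASE)


def block_ai_bots(app: Callable) -> Callable:
    """Wrap a WSGI app so matching user agents get 403 and never reach it."""

    def middleware(environ: dict, start_response: Callable) -> Iterable[bytes]:
        user_agent = environ.get("HTTP_USER_AGENT", "")
        if BLOCKED_UA.search(user_agent):
            body = b"Forbidden\n"
            start_response("403 Forbidden", [
                ("Content-Type", "text/plain"),
                ("Content-Length", str(len(body))),
            ])
            return [body]
        # Everyone else is passed through to the real application untouched.
        return app(environ, start_response)

    return middleware


def hello(environ: dict, start_response: Callable) -> Iterable[bytes]:
    """Demo app standing in for your real site."""
    start_response("200 OK", [("Content-Type", "text/plain")])
    return [b"hello\n"]
```

To try it locally, `wsgiref.simple_server.make_server("", 8000, block_ai_bots(hello)).serve_forever()` serves the wrapped app. Returning 403 rather than nginx's 444 is a design choice: it gives clients an explicit refusal instead of a dropped connection.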