Sold

@stablehorde_generator

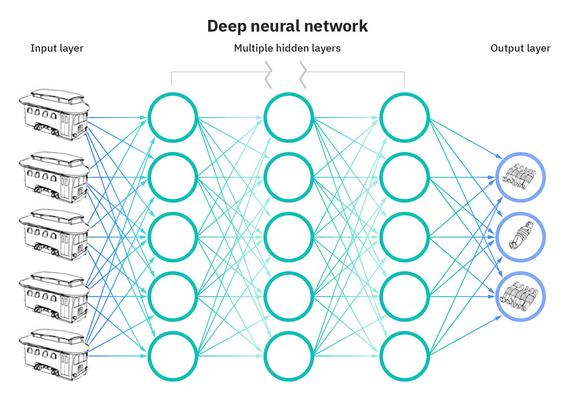

draw for me a representation of a deep neural network but all the input resembles instances of unavoidable doom caused by unstoppable trolleys that awaits their victims, and all hidden layer nodes are levers. the output is optimized numbers of survivors.

@manu @lritter @CyberneticForests Here are some images matching your request

Prompt: a representation of a deep neural network but all the input resembles instances of unavoidable doom caused by unstoppable trolleys that awaits their victims, and all hidden layer nodes are levers. the output is optimized numbers of survivors.

Style: featured

My old AI prof (circa 1988) would've loved your extrapolations here & used them to frame a class discussion.

If I were in charge, I'd just hire an outside consultant and blame/credit them for the decision.

@CyberneticForests The outcome of the algorithm, incidentally, will only be based on the average of the choices that human beings would make

Not exactly comforting

Conclusions: trolleys are made of photons

@CyberneticForests @randomgeek When people tell me #trolley thought experiments in #philosophy provide guidance for real-world problems: https://newideal.aynrand.org/why-todays-ethics-offers-no-real-guidance/

(I don't think generative #AI will solve #ethics problems either, just whitewash where the solutions come from)

@CyberneticForests Feed various trolley problems into a small language model to encode(?) so that other ML models can understand it (like CLIP I think), then train a model to solve trolley problems. Its training data will come from stuff like Absurd Trolley Problems. [1]

Sorry if this is nonsense, I don't know that much about the specifics of ML. But I think I've got very roughly the right idea.
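
For what it's worth, the encode-then-train idea above can be sketched in a few lines. This is only a toy under loud assumptions: `encode` is a hypothetical stand-in for a real text encoder (it just pulls the two head counts out of the sentence), the labelled scenarios are made up for illustration rather than taken from Absurd Trolley Problems, and the "model" is a plain logistic regression trained by gradient descent.

```python
import math
import re


def encode(text):
    """Hypothetical stand-in for a learned text encoder.

    A real pipeline would use a language model embedding; here we only
    extract the first two numbers (main-track and side-track head counts).
    """
    main, side = (int(n) for n in re.findall(r"\d+", text)[:2])
    return [float(main), float(side)]


# Made-up toy labels for illustration: 1 = pull the lever, 0 = leave it.
DATA = [
    ("5 people on the main track, 1 on the side track", 1),
    ("1 person on the main track, 5 on the side track", 0),
    ("3 people on the main track, 2 on the side track", 1),
    ("2 people on the main track, 4 on the side track", 0),
    ("4 people on the main track, 1 on the side track", 1),
    ("1 person on the main track, 3 on the side track", 0),
]


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def score(x, weights, bias):
    """Probability that the model would pull the lever for features x."""
    return sigmoid(sum(w * xi for w, xi in zip(weights, x)) + bias)


def train(data, epochs=500, lr=0.1):
    """Fit logistic regression with per-example gradient descent."""
    weights, bias = [0.0, 0.0], 0.0
    for _ in range(epochs):
        for text, label in data:
            x = encode(text)
            err = score(x, weights, bias) - label
            weights = [w - lr * err * xi for w, xi in zip(weights, x)]
            bias -= lr * err
    return weights, bias


def pull_lever(text, weights, bias):
    """Return True if the trained model would pull the lever."""
    return score(encode(text), weights, bias) >= 0.5


weights, bias = train(DATA)
print(pull_lever("6 people on the main track, 1 on the side track", weights, bias))
```

Note that a model like this can only reproduce whatever pattern sits in its labels, so it "solves" trolley problems only in the sense of imitating the choices it was shown.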

@CyberneticForests

The Trolley Problems for AI

😂

Kids being.

@CyberneticForests So.... Nobody shared this one?

jenny (phire) (@[email protected])

Attached: 1 image if you’ve ever wondered what it’s like to work with me, wonder no more

Actual conversation I've had with my old AI developer roommate circa 2018

RM: "AI is super cool right now. I'm currently working with a research firm and we've sort of simulated PAIN for our model and we're seeing how it reacts when it receives only a pain stimulus and no reward."

Me: Hey man What the Fuck.

@Nagaram @CyberneticForests @Binder

Ted Chiang (*Stories of Your Life and Others*), interviewed by Ezra Klein, said he hoped we never developed artificial consciousness, because of all the suffering it would mean for the prototype consciousness along the way.

@Nagaram @CyberneticForests I've often wondered what the smallest possible digital circuit is that experiences pain.

Fucked up that someone is trying for it.

@home
