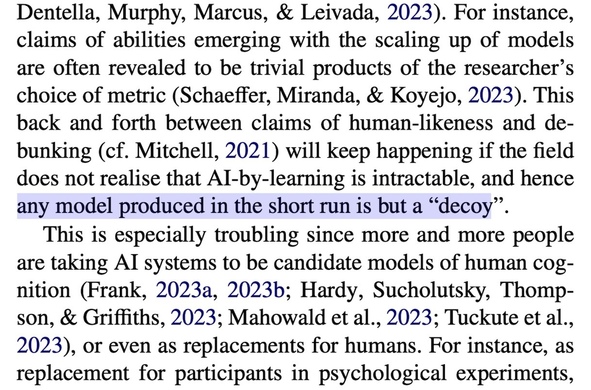

Given the Ingenia Theorem, how to interpret what's happening in practice?

It implies that if you test AIs rigorously for human-likeness, it quickly becomes evident that they behave qualitatively differently from humans. Unsurprisingly, we see exactly this play out.

[end of ACT 1]. 9/n

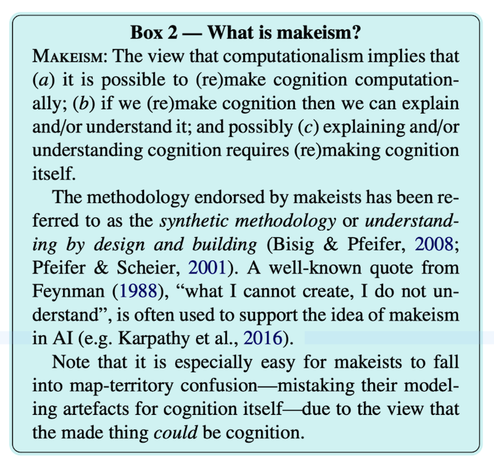

ACT 2 (cont'd): In reclaiming AI for cogsci, we need to make sure not to repeat conceptual mistakes of the past, and prevent the rerun of history.

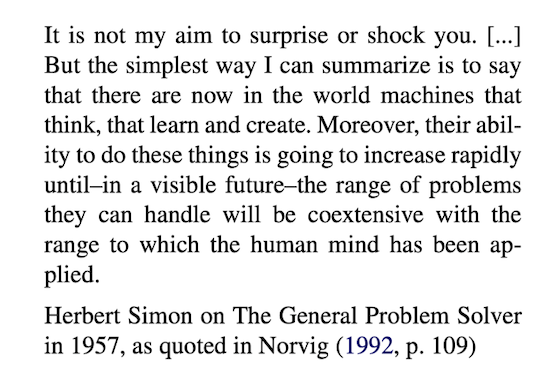

Makeism & overstatements of AI possibilities are nothing new. Note the date on H. Simon's quote.

This we shouldn't reclaim. 12/n

ACT 2 (cont'd): How may computationalism help cogsci advance if not through makeism?

Core to non-makeist cogsci is the realisation that computationalism provides conceptual and formal tools for theory development and assessing what is computationally possible or not. 14/n

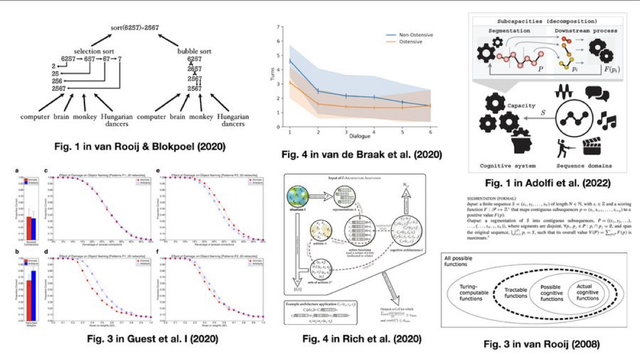

ACT 2 (cont'd): We explain how computationalism can aid cogsci without makeism, referring to examples from existing work:

1. Levels of explanation;

2. Capacities as problems;

3. Algorithms and simulations;

4. Underdetermination;

5. Computational realisability.

15/n

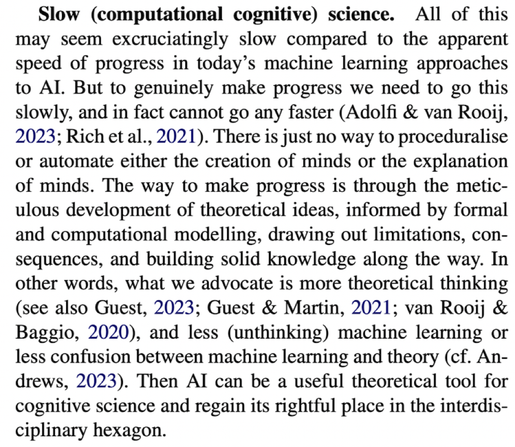

AI-as-engineering has been trespassing into cogsci, confusing us with decoys. The time is apt to reclaim the early conception of AI-as-theoretical-psychology. This means using AI as a theoretical tool, but we do not want to fall into the trap of makeism again. 17/n

@Iris love this. Going to give it a proper read when I've got a clear head but my first reaction is excitement and that this is perfect timing

I am about to organise an informal discussion between the vision psychology team that I've been working with (studying the visual strategies of a group of image interpretation experts) and the AI team I've been working with (building foundation models for working in a similar image domain)

Perhaps your paper gives a stronger motivation for that discussion...

Iris van Rooij 💭 (@[email protected])

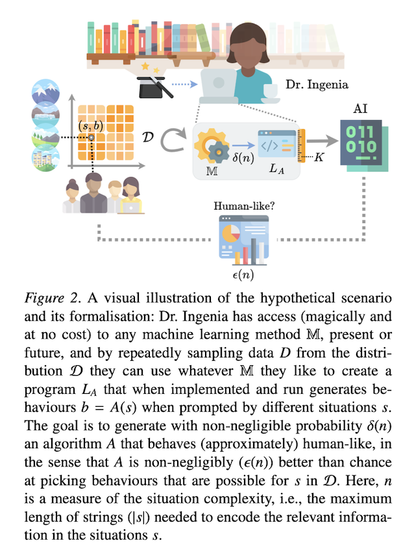

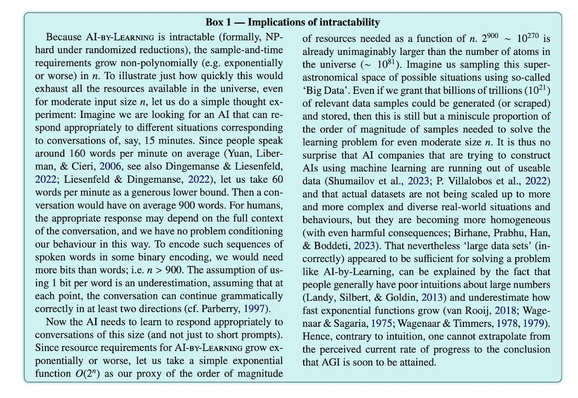

ACT 1 (cont'd) Even if AI-by-Learning may be practically solvable for simple situations (small n), any attempts to scale up to situations of real-world, human-level complexity (medium to large n) will necessarily consume an astronomical amount of resources (see Box 1). 8/n

@Iris that was an awesome thread! I asked chatgpt to write a critique. I like point 3 in particular.

A Critical Review of "AI for Psychology"

The essay "AI for Psychology" offers a stimulating take on the relationship between AI and cognitive science. However, several points merit critical scrutiny:

1. Assumed Dichotomy Between AI-as-engineering and AI-as-theoretical-psychology: While the essay positions these two concepts as almost opposing ends of a spectrum, one might argue that they are not mutually exclusive. Modern engineering-driven AI advancements can indeed feed back into theoretical psychology, providing new insights and data that can refine cognitive theories.

2. Validity of the Ingenia Theorem: The theorem posits that AI-by-Learning is intractably hard. While this is a provocative stance, it's based on an idealized, hypothetical scenario. The practical reality of AI development might not align with this theory, especially when one considers the rapid progression of quantum computing and other emerging technologies which could redefine what "intractable" means. [Personal human note: the fact that evolution came up with human cognition in a relatively short amount of time seriously questions the validity of the ingenia theorem. Just for fun, optimal clustering of data points is intractable as well, and yet can be solved in seconds with a modern computer].

3. Neglect of Neural Networks and Deep Learning: The essay seems to undermine the significant strides made in emulating aspects of human cognition through deep learning and neural networks. While these models may not capture the entirety of human cognition, they have successfully replicated certain cognitive tasks, suggesting that they shouldn't be dismissed outright.

4. Overemphasis on Historical AI-Cognitive Science Relationship: By heavily leaning into the historical relationship between AI and cognitive science, the essay risks romanticizing the past and downplaying the innovative and disruptive advancements of contemporary AI. History provides valuable lessons, but clinging too tightly to historical perspectives might impede forward-thinking innovation.

5. Unclear Definition of "Makeism": While the essay cautions against falling into the trap of "makeism", it would benefit from a clearer definition of what "makeism" truly entails. Is it just about creating replicas of human cognition, or does it also encompass the broader goal of understanding cognition through the creation process? [Note from human: I did not feed the text from the images, which did provide a bit more clarity on the notion of "makeism". I hope the next gen of chatgpt can read in images as well.]

6. Broad Prescriptions: The five concepts presented as the means through which AI can assist cognitive science are valid. However, they are broad and could apply to many disciplines beyond cognitive science. A more specific prescription tailored to cognitive science's unique challenges would strengthen the essay's argument.

In conclusion, while the essay offers a refreshing perspective on AI's role in cognitive science, it might benefit from a more nuanced appreciation of the current AI landscape. Balancing the lessons of the past with the possibilities of the future will be key to genuinely harnessing AI's potential in understanding human cognition.

[Note from human: I did warn chatgpt I would send this text to the authors of the essay, and the machine said "Open and constructive discourse is essential for the progression of ideas and knowledge. I look forward to hearing any updates you might have. [...] Best of luck with your engagement with the authors!"]

Aye! didn't know of that A. Lovelace critique!

Previous older favorite critique was '76 Drew McDermott:

» In this paper, I have criticized AI researchers very harshly.

..

Most AI workers are responsible people who are aware of the pitfalls of a difficult field and produce good work in spite of them.

However, to say anything good about anyone is beyond the scope of this paper «

https://www.researchgate.net/publication/234784524_Artificial_Intelligence_meets_natural_stupidity

1/2

Thus nowadays aside from your paper ;)

My favorite balanced critique:

https://mastodon.social/@teixi/110844164501607405

2/2

opinions on these two takes?

As I’ve claimed many times, AI-as-model-of-cognition has been most successful in showing what cognition is not.

@Iris Hmm, disagree.

Those 900 bits are not perfectly random; they are structured into phrases, clauses, sentences, paragraphs etc. These inter-symbol dependencies permit log (of variable base) compression, which makes the learning problem much more tractable.

Transformers leverage this by being moderately compositional, e.g. induction heads are re-used for many situations.

Are they efficient though? I agree with you -- they are not. Will there be better approaches? Certainly.
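The compressibility point above can be illustrated with a minimal sketch. It assumes `zlib` as a stand-in compressor, a made-up sample text, and an arbitrary length of 900 bytes chosen only for illustration (none of these come from the thread or the paper). It shows only that input with inter-symbol dependencies compresses far better than uniformly random input; it says nothing about the learning problem itself.

```python
import random
import zlib


def compression_ratio(data: bytes) -> float:
    """Compressed size divided by original size (lower = more compressible)."""
    return len(zlib.compress(data, 9)) / len(data)


# Hypothetical structured input: English sentences, repeated and cut to 900 bytes.
sentences = (
    b"The cat sat on the mat. The dog sat on the rug. "
    b"The cat saw the dog. The dog saw the cat. "
)
structured = (sentences * 20)[:900]

# Unstructured input: 900 bytes drawn uniformly at random (fixed seed for repeatability).
rng = random.Random(0)
unstructured = bytes(rng.randrange(256) for _ in range(900))

ratio_structured = compression_ratio(structured)
ratio_unstructured = compression_ratio(unstructured)

print(f"structured:   {ratio_structured:.2f}")
print(f"unstructured: {ratio_unstructured:.2f}")
```

Random bytes are essentially incompressible, so their ratio stays near 1, while the structured text shrinks to a small fraction of its size.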

@Iris That's assuming that conversation is structured as a balanced binary tree -- that is, each choice is independent of the other choices. The presence of larger structures within language is at odds with this assumption.

Evolution, engineering, and (most) ML techniques perform hierarchical subspace decomposition, which is the only way to explore ~infinite-dimensioned spaces.

@Iris Agreed - discovering this structure is hard, but demonstrably *not intractable*.

E.g. we have ~3 billion bp in our genome. 4^(3e9) >>> total number of organisms that ever lived on earth. To infer those bits from noisy "experiments", you need a lot of stacked modularity!

Our presence implies that the Ingenia Theorem is false.

(sorry to make such a strong statement, but your paper makes a strong statement of the opposite polarity)
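The size of the genome search space mentioned above, 4^(3e9), can be checked with a short sketch. The helper name is hypothetical, and the 2^900 case is included only to validate the helper against exact integer arithmetic. The code counts decimal digits via logarithms instead of building the number.

```python
import math


def decimal_digits_of_power(base: int, exponent: int) -> int:
    """Number of decimal digits of base**exponent, computed via log10."""
    return math.floor(exponent * math.log10(base)) + 1


# Sanity check on a number small enough to build exactly.
assert decimal_digits_of_power(2, 900) == len(str(2**900))

# 4 possible bases at each of ~3 billion positions.
genome_digits = decimal_digits_of_power(4, 3_000_000_000)
print(f"4^(3e9) has about {genome_digits:,} decimal digits")
```

So 4^(3e9) is a number with roughly 1.8 billion decimal digits, which is why exhaustive enumeration of genomes is out of the question for any process.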

@m8ta @Iris Yes, reading this (very interesting) paper I had similar thoughts. Are evolved biological humans not an existence proof that the Ingenia theorem is false? Not sure we are - but it would have been interesting to see that point addressed in the paper (or here 😄).

I have not dared to look at the full proof in the paper, just the proof sketch, but I was wondering whether what is being shown isn’t rather that it is intractable to *exhaustively demonstrate* that a certain AI algorithm is human-like? I.e. that an “exhaustive Turing test” is intractable?

Iris van Rooij 💭 on Twitter

“@shimonedelman There is nothing to reconcile. Our paper is not about human learning, development or evolution at all. We are only providing a lower bound analysis on ML of the type in the paper (training on human data =/= human learning, development, or evolution). See also:”

Turing test is about neither, but about what behaviour convinces a random person.

@Iris @m8ta Thanks for clarifying what you’ve done here. Fully agreed about the Turing test, I was sloppily loaning the term for something quite different.

Congrats on a really cool paper. It will be interesting to see what reviewers competent to digest your proof (unlike myself) make of it. If it holds water then that’s pretty major! Any further intuition or clarification you can provide in the “proof sketch” part of the paper will be very helpful to general readers I think.

Have a great vacation!

Right, sorry to interrupt your vacation -- and my intent is in good faith, to have a meaningful discussion.

"proof shows that no algorithm can do better than a variation of exhaustive search" This is clearly false for the *actual* problems that we & evolution encounter in our actual lives. Approximate solutions are accessible, evolution is fast, AGI is tractable.

E.g. ATP-binding proteins are trillions of times more prevalent than expected in a library of random proteins.

Another fun example, regarding my point on using modularity to solve high-D problems:

When reaching for food, octopi make their continuous arms behave like rigid segments, à la primates, allegedly to make the forward-inverse kinematics problem easier.

Is it the optimal solution? No, that's probably NP-hard. Is it good enough? Yes yes.

@Iris While I generally agree with you and am just as negative about AI as you are, I am a bit skeptical about this chain of reasoning.

While some of what humans do is built-in, most of our reasoning skills are learned: "Human by Learning". So how do we do it if it's an NP-complete problem?

The answer is that we humans don't get exact answers, we get pretty good answers and as we know, getting pretty good answers to NP-complete problems is very doable.

1/
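The remark above about "pretty good answers" to NP-complete problems can be made concrete with a minimal sketch. It assumes minimum vertex cover as a stand-in problem (the post names none) and a small made-up graph. The classic matching-based heuristic runs in linear time and is guaranteed to return a cover at most twice the optimal size, while the exact search tries subsets by brute force. This illustrates approximation in general and is not a claim about the problem analysed in the paper.

```python
from itertools import combinations

Edge = tuple[int, int]


def is_cover(edges: list[Edge], chosen: set[int]) -> bool:
    """True if every edge has at least one endpoint in `chosen`."""
    return all(u in chosen or v in chosen for u, v in edges)


def approx_cover(edges: list[Edge]) -> set[int]:
    """2-approximation: take both endpoints of each still-uncovered edge."""
    cover: set[int] = set()
    for u, v in edges:
        if u not in cover and v not in cover:
            cover.update((u, v))
    return cover


def optimal_cover(edges: list[Edge]) -> set[int]:
    """Exact answer by brute force; exponential in the number of nodes."""
    nodes = sorted({n for edge in edges for n in edge})
    for size in range(len(nodes) + 1):
        for candidate in combinations(nodes, size):
            if is_cover(edges, set(candidate)):
                return set(candidate)
    return set(nodes)


# Hypothetical example graph.
edges = [(0, 1), (0, 2), (1, 3), (2, 3), (3, 4), (4, 5)]
approx = approx_cover(edges)
exact = optimal_cover(edges)
print(f"approximate cover size: {len(approx)}, optimal size: {len(exact)}")
```

The factor-of-two bound holds for any graph, because the chosen edges share no endpoints and every cover must contain at least one endpoint of each.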

@Iris I personally see humans as also being machines: very complicated machines, but still bound by physics and mathematics.

So I'm skeptical about any proof that says that AI can't do something that humans can, because that implies some sort of magic in the human brain, and I really don't believe that exists.

Don't get me wrong here - I'm also very skeptical that LLMs will lead to GAI, and I'm against AI because it's a heist of our information to the benefit of a few rapacious companies.

Thanks for your thoughts @TomSwirly !

There seems to be a misunderstanding. We also work from the assumption that cognition is computational and tractable, and grant that from the start. We only show that (re)making it by engineering/ML is intractable, not that cognition or human learning itself is intractable.

Dr Ingenia seems like someone who wants to turn lead into gold using alchemy, while the industry is advertising some early chemistry as transmutation.

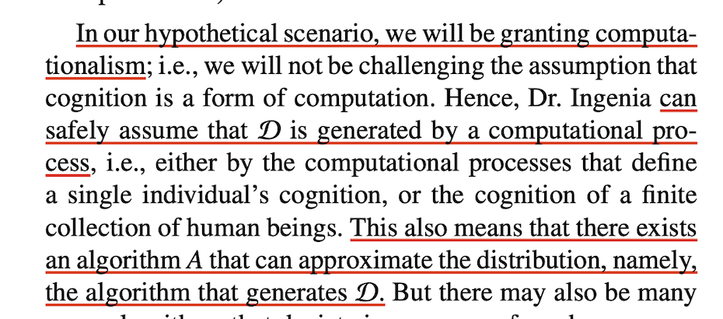

@Iris nice overview Table.

In my lecture on IR I always spend some minutes on differences and analogies to related areas in AI like NLP, computer vision and ASR but nowadays people call it all "AI" - I may introduce this Table to raise some awareness there's fifty shades of AI :-)

I was misled by the title, but then amazed by the conclusions ;)

Congrats!

ps:

you should present at one of these trendy new confs, e.g.:

Ernst Strüngmann Institute (@[email protected])

The program for #ESISyNC 2023 is now online 🥳 ✅ 2 days with: 11 speakers | panel discussion | poster session | lunch & coffee breaks | dinner & party 📆 Sept 14 + 15, Frankfurt, Germany This year's topic: Linking hypotheses: where neuroscience, computation, and cognition meet ➡️ Register now: https://www.esi-frankfurt.de/newevent/ #event #neuroscience #computation #cognition #brainresearch #science #conference #frankfurt
