Can Large Language Models (#ChatGPT) transform Computational Social Science?

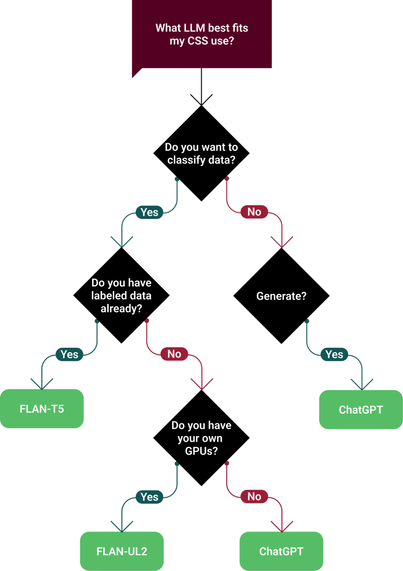

Our recent work (with @Held, @omar, @diyiyang) shows how they might, in partnership w/ experts.

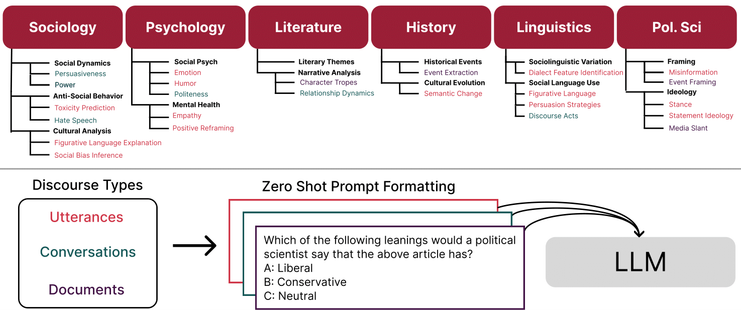

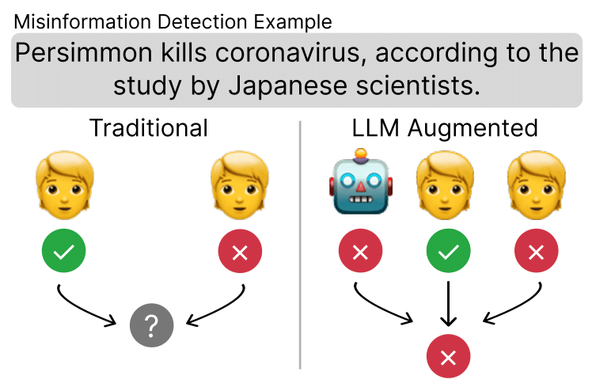

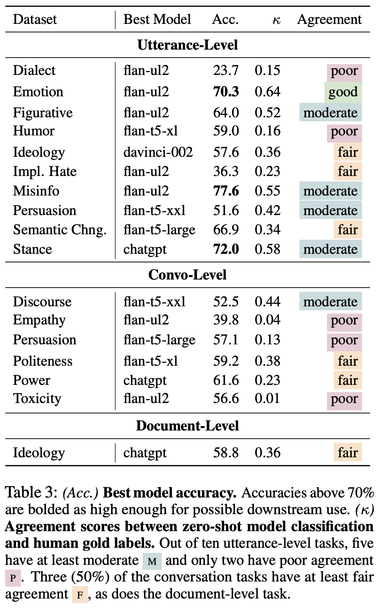

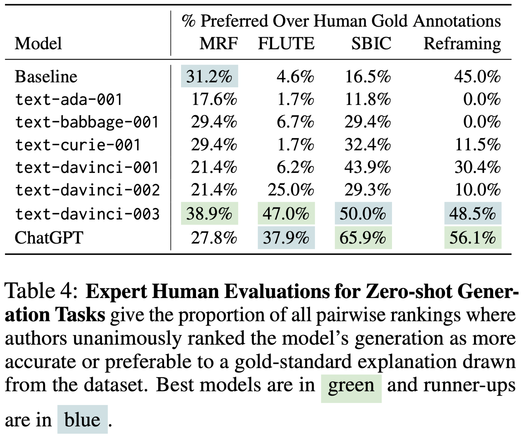

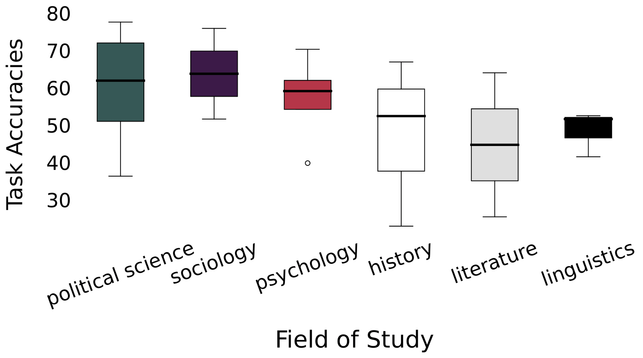

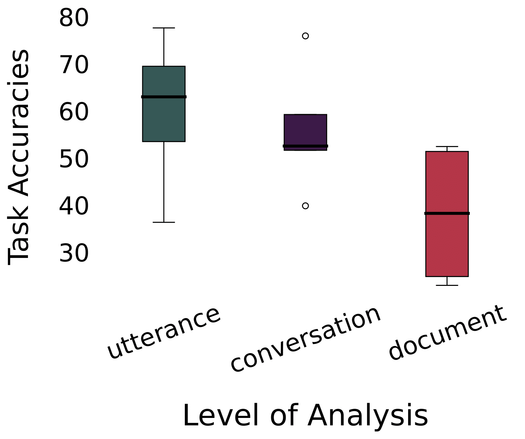

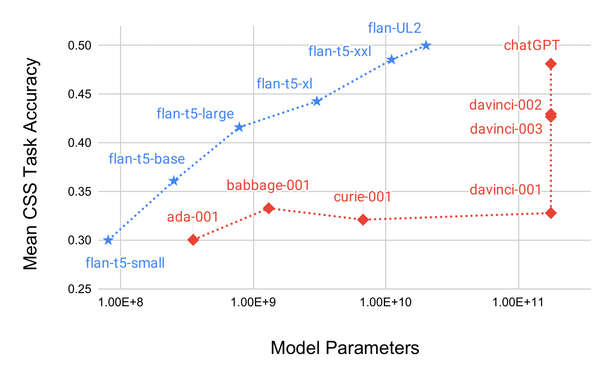

We evaluate on 24 #CSS tasks + draw a roadmap 🚗🗺️ to guide #LLM-augmented social science 🚀

Paper: https://calebziems.com/assets/pdf/preprints/css_chatgpt.pdf

🧵 thread