So OpenAI just released a detector of AI-generated text, I assume because of concerns in education / homework.

https://openai.com/blog/new-ai-classifier-for-indicating-ai-written-text/

Maybe this is good?

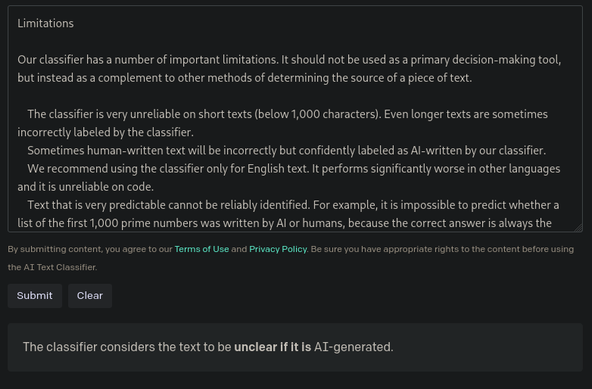

No, it's very bad.

They claim 26% true positives and 9% false positives. Assume 10% of submitted homework is ChatGPT-generated, and you get the classic counterintuitive outcome of poor predictive power: if a homework is flagged, the odds are about 3:1 that it's *human* generated.
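
For anyone who wants to check the arithmetic, here is a minimal sketch of the Bayes calculation. The 26% and 9% rates are the claimed figures quoted above, the 10% share of ChatGPT-generated homework is an assumption rather than a measured number, and the function name is just illustrative.

```python
def posterior_ai_given_flag(tpr: float, fpr: float, prior_ai: float) -> float:
    """P(text is AI-generated | classifier flagged it), via Bayes' rule."""
    flagged_ai = tpr * prior_ai            # AI-written and flagged
    flagged_human = fpr * (1 - prior_ai)   # human-written but flagged anyway
    return flagged_ai / (flagged_ai + flagged_human)


# Claimed rates from the announcement; the 10% prior is an assumption.
p_ai = posterior_ai_given_flag(tpr=0.26, fpr=0.09, prior_ai=0.10)

print(f"P(AI | flagged) = {p_ai:.1%}")                # 24.3%
print(f"human:AI odds = {(1 - p_ai) / p_ai:.1f}:1")   # 3.1:1
```

Out of every 1000 submissions, that's about 26 flagged AI-written ones against 81 flagged human-written ones. A lower assumed share of AI-written homework makes the odds worse for the flagged students, not better.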

This is going to cause a lot of harm. It should be immediately recalled.