🚨🚨🚨NEW PREPRINT🚨🚨🚨

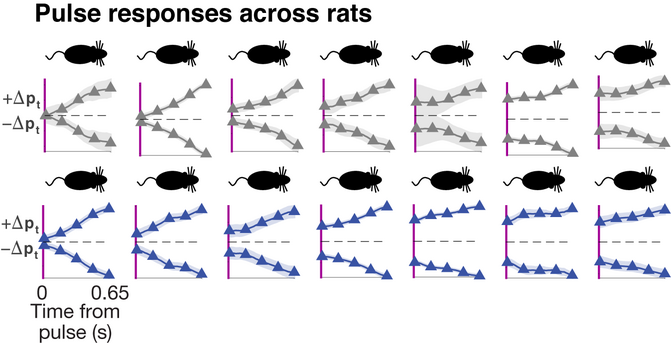

A big mystery in brain research is which neural mechanisms drive individual differences in higher-order cognitive processes. Here we present a new theoretical and experimental framework, in collaboration with Vincent Tang, Mikio Aoi, Jonathan Pillow, Valerio Mante, @SussilloDavid and Carlos Brody.

https://www.biorxiv.org/content/10.1101/2022.11.28.518207v1

1/16

Ok, I exaggerate. Important computational principles (mechanisms?) were discovered this way, though they may not be capturing natural brain function.
