Cross-modal training reduced susceptibility to the sound-induced flash illusion and generalized across stimulus onset asynchronies - Psychological Research

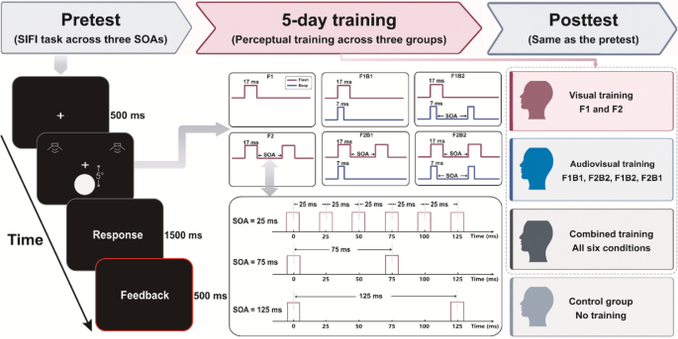

The sound-induced flash illusion (SiFI) is a classic auditory-dominated multisensory illusion in which concurrent beeps alter the perceived number of flashes (e.g., a single flash paired with two beeps is often seen as two flashes). Susceptibility to the illusion can be modified by perceptual training, but the effects of different training protocols, and whether those effects transfer, remain unclear. The present study combined the SiFI paradigm with feedback training to examine how different types of training affect SiFI performance across multiple stimulus onset asynchronies (SOAs). SOA was manipulated in the pretest and posttest to determine whether training effects would generalize to untrained temporal intervals. Participants were assigned to one of four groups: a control group that completed only the pretest and posttest, and three training groups that received 5 days of combined, audiovisual, or visual-only training, respectively. Perceptual training significantly improved performance on the SiFI task, but the magnitude of improvement differed across training modalities. Audiovisual and combined training were more effective than visual-only training, and their benefits generalized from the trained SOA to untrained SOAs, indicating that the training effect transferred across temporal intervals. These findings suggest that cross-modal training reduces SiFI susceptibility more effectively than unimodal visual training, and they provide further evidence for the plasticity of multisensory perceptual processing.