OpenAI showed a “build a snake game” task running on GPT-5.3-Codex-Spark and on GPT-5.3-Codex at medium. Both models completed the task, but the Cerebras-backed Spark model finished in 9 seconds, compared with nearly 43 seconds for the non-Spark model. If you want to see the side-by-side video, here is a link. The Spark model is said to produce higher-quality results than GPT-5.1-Codex while also being much faster.
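To put the demo timings in perspective, here is a quick back-of-the-envelope calculation. It is only a sketch. It treats the non-Spark run as exactly 43 seconds, although the reported figure was "nearly" 43 seconds, so the real speedup is slightly lower than the result shown. The helper names are illustrative and do not come from OpenAI or Cerebras.

```python
# Wall-clock times from the snake-game demo. The non-Spark run was reported as
# "nearly" 43 s, so using 43.0 slightly overstates the speedup.
SPARK_SECONDS = 9.0
CODEX_SECONDS = 43.0


def speedup(baseline_s: float, fast_s: float) -> float:
    """Return how many times faster fast_s is than baseline_s."""
    return baseline_s / fast_s


def time_saved_fraction(baseline_s: float, fast_s: float) -> float:
    """Return the fraction of the baseline wall-clock time that is eliminated."""
    return 1.0 - fast_s / baseline_s


if __name__ == "__main__":
    print(f"speedup: {speedup(CODEX_SECONDS, SPARK_SECONDS):.1f}x")
    print(f"time saved: {time_saved_fraction(CODEX_SECONDS, SPARK_SECONDS):.0%}")
```

With these inputs the Spark run comes out a bit under 5x faster, cutting about four-fifths of the wall-clock time. This covers only one demo task and should not be read as a general benchmark.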

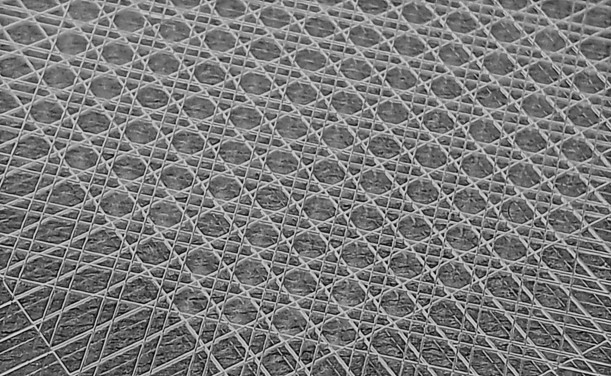

https://www.servethehome.com/openai-gpt-5-3-codex-spark-now-running-at-1k-tokens-per-secondon-big-cerebras-chips/