sys/arch/m68k/include: asm.h

christos: Make the _C_LABEL definition match exactly <sys/cdefs_elf.h> because atomic_cas.S now includes <sys/ras.h> and <sys/ras.h> includes <sys/cdefs.h> because it wants things like __CONCAT(). I think it is better to do it this way rather than #undef here or in atomic_cas.S because then if the definition changes it will break again.

http://cvsweb.netbsd.org/bsdweb.cgi/src/sys/arch/m68k/include/asm.h.diff?r1=1.37&r2=1.38
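
To illustrate why matching the definition exactly avoids the conflict: C permits a macro to be defined more than once as long as every definition is token-for-token identical, so two headers can both define it without any #undef. A minimal sketch, using a hypothetical MY_LABEL macro rather than the real _C_LABEL (the helper names here are made up for the example):

```c
#include <string.h>

/* First definition, as if it came from one header (hypothetical name). */
#define MY_LABEL(x) x

/* Identical redefinition, as if it came from a second header. This is
 * allowed because the parameter list and replacement list match exactly. */
#define MY_LABEL(x) x

/* A differing redefinition such as
 *     #define MY_LABEL(x) _ ## x
 * would be diagnosed at this point unless preceded by #undef MY_LABEL. */

#define STR_(x) #x
#define STR(x) STR_(x)

/* Returns 1 if MY_LABEL(foo) expands to the given text, else 0. */
int label_is(const char *expected)
{
    return strcmp(STR(MY_LABEL(foo)), expected) == 0;
}
```

The trade-off the commit message points at still applies: if one of the two definitions changes later, the redefinition stops being identical and the compiler reports it, instead of one definition being silently overridden.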

@shugo Running gcc -E shows that NULL is defined in /usr/lib/gcc/x86_64-linux-gnu/13/include/stddef.h, and as shown below it appears to be (void *) in C and an integer in C++.

:

#if defined (_STDDEF_H) || defined (__need_NULL)
#undef NULL /* in case <stdio.h> has defined it. */
#ifdef __GNUG__
#define NULL __null
#else /* G++ */
#ifndef __cplusplus
#define NULL ((void *)0)
#else /* C++ */
#define NULL 0
#endif /* C++ */
#endif /* G++ */
#endif /* NULL not defined and <stddef.h> or need NULL. */
#undef __need_NULL

:
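
A quick way to check which branch applies in C is to ask the compiler for the type of NULL with _Generic. This is only a sketch: the function name is made up, it needs C11 or later, and the result depends on the implementation's headers (it should report a pointer with the stddef.h quoted above).

```c
#include <stddef.h>

/* Returns 1 if NULL has type void * in this translation unit, 0 otherwise
 * (for example if the implementation defines NULL as a plain integer 0). */
int null_is_void_pointer(void)
{
    return _Generic(NULL, void *: 1, default: 0);
}
```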

British embassy summons senior Argentine military commanders to a secret meeting

As it happens, we still use CVS in our operating system project (there are reasons for doing this, but migration to git would indeed make sense).

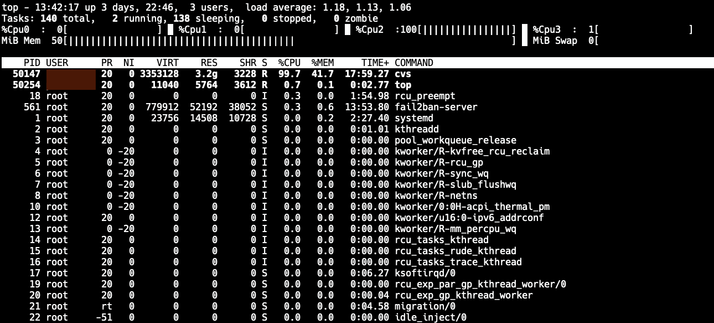

While working on our project, we occasionally have to do a full checkout of the whole codebase, which is several gigabytes. Over time, this operation has gotten very, very, very slow - I mean "2+ hours to perform a checkout" slow.

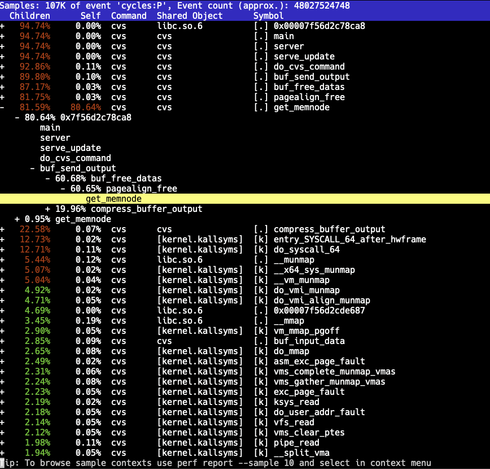

This was getting quite ridiculous. Even though it's CVS, it shouldn't crawl like this. A quick build of CVS with debug symbols and sampling the "cvs server" process with Linux perf showed something peculiar: The code was spending the majority of the time inside one function.

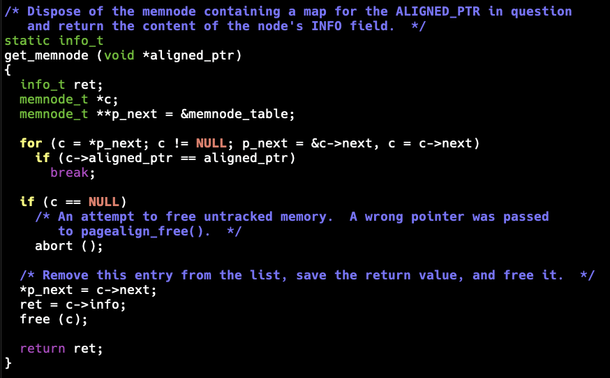

So what is this get_memnode() function? Turns out this is a support function from Gnulib that enables page-aligned memory allocations. (NOTE: I have no clue why CVS thinks doing page-aligned allocations is beneficial here - but here we are.)

The code in question has support for three different backend allocators:

1. mmap

2. posix_memalign

3. malloc

Sounds nice, except that both 1 and 3 use a linked list to track the allocations. When memory is deallocated, get_memnode() is called to find the original pointer to pass to the backend deallocation function. The node search code looks like this:

for (c = *p_next; c != NULL; p_next = &c->next, c = c->next)
  if (c->aligned_ptr == aligned_ptr)
    break;

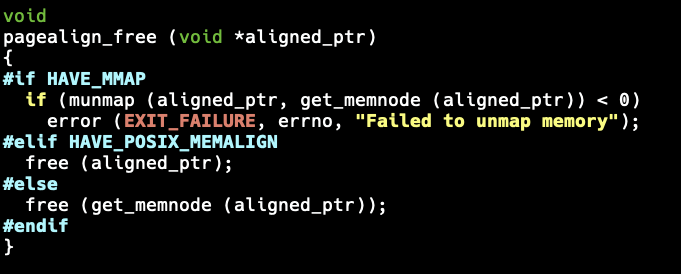

The get_memnode() function is called from pagealign_free():

#if HAVE_MMAP
  if (munmap (aligned_ptr, get_memnode (aligned_ptr)) < 0)
    error (EXIT_FAILURE, errno, "Failed to unmap memory");
#elif HAVE_POSIX_MEMALIGN
  free (aligned_ptr);
#else
  free (get_memnode (aligned_ptr));
#endif

Each lookup is an O(n) operation in the number of live allocations. CVS must be making a huge number of small allocations, so it ends up spending most of its CPU time in get_memnode(), searching for the node to remove from the list.

Why should we care? This is "just CVS" after all. Well, Gnulib is used in a lot of projects, not just CVS. While pagealign_alloc() is likely not the most used functionality, it can still end up hurting performance in many places.

The obvious easy fix is to prefer the posix_memalign method over the other options (I quickly made this happen for my personal CVS build by adding a tactical #undef HAVE_MMAP). Even better, the list code should be replaced with something more sensible. In fact, there is no need to store the original pointer in a list: a better solution is to allocate enough extra memory and store the original pointer just before the calculated aligned pointer. The original pointer can then be fetched from a negative offset of the pointer passed to pagealign_free(), which makes the lookup O(1).
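
A minimal sketch of that idea for the malloc backend, to make the O(1) lookup concrete. This is not the Gnulib code: the function names are made up for illustration, and it assumes the alignment is a power of two.

```c
#include <assert.h>
#include <stdint.h>
#include <stdlib.h>
#include <string.h>

/* Hypothetical replacement for the list-based bookkeeping: over-allocate,
 * align inside the block, and stash the original malloc() pointer in the
 * bytes immediately before the aligned address. */
void *aligned_alloc_sketch(size_t size, size_t alignment)
{
    /* Assumption of this sketch: alignment is a nonzero power of two. */
    assert(alignment != 0 && (alignment & (alignment - 1)) == 0);

    /* Room for worst-case padding plus one stored pointer. */
    size_t extra = alignment - 1 + sizeof(void *);
    if (size > SIZE_MAX - extra)
        return NULL;

    void *raw = malloc(size + extra);
    if (raw == NULL)
        return NULL;

    /* First aligned address that leaves space for the stored pointer. */
    uintptr_t start = (uintptr_t)raw + sizeof(void *);
    uintptr_t aligned = (start + alignment - 1) & ~(uintptr_t)(alignment - 1);

    /* memcpy avoids a misaligned store when alignment < sizeof(void *). */
    memcpy((char *)aligned - sizeof raw, &raw, sizeof raw);
    return (void *)aligned;
}

/* O(1): read the original pointer back from the negative offset. */
void aligned_free_sketch(void *aligned_ptr)
{
    if (aligned_ptr == NULL)
        return;
    void *raw;
    memcpy(&raw, (char *)aligned_ptr - sizeof raw, sizeof raw);
    free(raw);
}
```

The cost is up to alignment - 1 + sizeof(void *) wasted bytes per allocation, which is noticeable for page-sized alignment and small requests. That is one more reason to prefer posix_memalign where it is available.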

I tried to report this to the Gnulib project, but I have trouble reaching gnu.org services currently. I'll be sure to do that once things recover.

I'd keep a stack of control-flow preprocessor lines, tracking whether to keep or discard the lines between them and inserting #line where needed. This is how I'd handle #if, #elif, #else, #endif, #ifdef, #ifndef, #elifdef, #elifndef.

Some of these take identifiers whose presence should be checked in the macros table; others would interpret infix expressions via a couple of stacks & the Shunting Yard Algorithm. The rest simply end a control-flow block.

#undef removes an entry from the macros table.
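
A rough sketch of that stack in C, covering only #ifdef, #ifndef, #else, #endif, #define and #undef. Expression evaluation for #if/#elif, the #elifdef/#elifndef forms and #line insertion are left out, and all type and function names are hypothetical.

```c
#include <stdio.h>
#include <string.h>

#define MAX_MACROS 64
#define MAX_DEPTH  32
#define NAME_LEN   64

typedef struct {
    int parent_active; /* was the enclosing region being kept? */
    int taken;         /* has a branch of this block already been kept? */
} Frame;

typedef struct {
    char  macros[MAX_MACROS][NAME_LEN]; /* the macros table (names only) */
    int   n_macros;
    Frame stack[MAX_DEPTH];             /* open conditional blocks */
    int   depth;
    int   active;                       /* currently keeping lines? */
} Pp;

void pp_init(Pp *pp) { memset(pp, 0, sizeof *pp); pp->active = 1; }

static int find_macro(const Pp *pp, const char *name)
{
    for (int i = 0; i < pp->n_macros; i++)
        if (strcmp(pp->macros[i], name) == 0)
            return i;
    return -1;
}

static void define_macro(Pp *pp, const char *name)
{
    if (find_macro(pp, name) < 0 && pp->n_macros < MAX_MACROS)
        snprintf(pp->macros[pp->n_macros++], NAME_LEN, "%s", name);
}

static void undef_macro(Pp *pp, const char *name)
{
    int i = find_macro(pp, name);
    if (i >= 0) /* fill the hole with the last entry */
        memmove(pp->macros[i], pp->macros[--pp->n_macros], NAME_LEN);
}

static void push(Pp *pp, int cond)
{
    if (pp->depth == MAX_DEPTH)
        return; /* sketch only: real code should report the overflow */
    Frame *f = &pp->stack[pp->depth++];
    f->parent_active = pp->active;
    f->taken = pp->active && cond;
    pp->active = f->taken;
}

/* Returns 1 if `line` is ordinary text that should be kept, else 0.
 * Directive lines are consumed and always return 0. */
int pp_line(Pp *pp, const char *line)
{
    char dir[16] = "", arg[NAME_LEN] = "";
    if (sscanf(line, " # %15[a-z] %63[A-Za-z0-9_]", dir, arg) < 1)
        return pp->active; /* not a directive */

    if (strcmp(dir, "ifdef") == 0) {
        push(pp, find_macro(pp, arg) >= 0);
    } else if (strcmp(dir, "ifndef") == 0) {
        push(pp, find_macro(pp, arg) < 0);
    } else if (strcmp(dir, "else") == 0 && pp->depth > 0) {
        Frame *f = &pp->stack[pp->depth - 1];
        pp->active = f->parent_active && !f->taken;
        f->taken = 1;
    } else if (strcmp(dir, "endif") == 0 && pp->depth > 0) {
        pp->active = pp->stack[--pp->depth].parent_active;
    } else if (pp->active && strcmp(dir, "define") == 0) {
        define_macro(pp, arg);
    } else if (pp->active && strcmp(dir, "undef") == 0) {
        undef_macro(pp, arg);
    }
    return 0;
}
```

Recording parent_active in each frame is what keeps nested blocks inside a discarded region discarded, whatever their own conditions say.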

3/4

Weekly GCC update:

Optimization improvements:

* Copy prop for aggregates improvements; now into args

* Recognize integer zero as zeroing for memset into aggregate copy or memcpy

* Improve VN support over aggregates copies

* Recognize saturating multiplication more

* Allow for more mergeable constants to be placed in the mergeable section

C++ improvements/changes:

* Implement C++20 (Defect report) P1766R1: Mitigating minor modules maladies

* Fix parsing of non-comma variadic methods with default args

* Warn on #undef/#define cpp.predefined macros

* Implement C++26 P1306R5 - Expansion statements

* Implement P2036R3 - Change scope of lambda trailing-return-type

* Finish up P2115R0 implementation (unnamed unscoped enums)

Target changes:

* epiphany and rl78 are marked as obsolete targets

* LoongArch: 128bit atomics support

* LoongArch: _BitInt support

* x86: Add target("80387") function attribute

* RISCV: Add MIPS prefetch extensions

* aarch64: Fix CMPBR extension