🎙️ On Stage at BSides Luxembourg 2026: New Talk Revealed

🧠🤝 𝗧𝗘𝗔𝗠𝗜𝗡𝗚, 𝗧𝗥𝗨𝗦𝗧, 𝗔𝗡𝗗 𝗧𝗛𝗥𝗘𝗔𝗧𝗦: 𝗛𝗢𝗪 𝗛𝗨𝗠𝗔𝗡𝗦 𝗜𝗡𝗧𝗘𝗥𝗔𝗖𝗧 𝗪𝗜𝗧𝗛 𝗚𝗘𝗡𝗘𝗥𝗔𝗧𝗜𝗩𝗘 𝗔𝗜 𝗜𝗡 𝗦𝗘𝗖𝗨𝗥𝗜𝗧𝗬 – Dr. Tailia Malloy 🔐

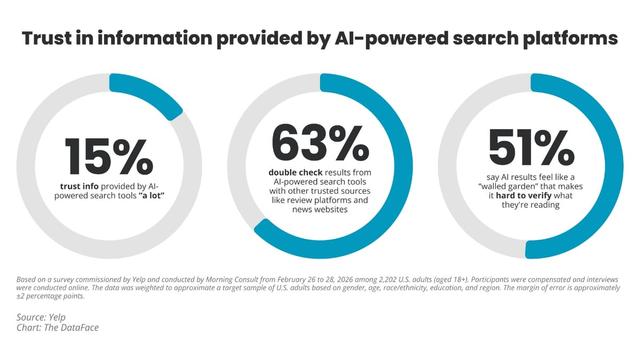

As AI becomes part of everyday security workflows, the real challenge isn’t just the technology—it’s how humans trust, use, and collaborate with it.

This talk explores how generative AI is reshaping cybersecurity tasks like network analysis, social engineering defense, and secure software development. By combining human-computer interaction research with real-world security use cases, it reveals how trust, teaming, and human behavior shape both the strengths and risks of AI in security.

Dr. Tailia Malloy (She/They) is a postdoctoral researcher at the University of Luxembourg, specializing in human-AI interaction, cognitive modeling, and the application of generative AI in cybersecurity—from phishing defense to secure code generation.

📅 Conference Dates: 6–8 May 2026 | 09:00–18:00

📍 14, Porte de France, Esch-sur-Alzette, Luxembourg

🎟️ Tickets: https://2026.bsides.lu/tickets/

📅 Schedule Link: https://pretalx.com/bsidesluxembourg-2026/schedule/

👉 Browse sessions, track talks in real time, and plan your schedule on Hacker Tracker: https://hackertracker.app/schedule?conf=BSIDESLUX2026

#BSidesLuxembourg2026 #AISecurity #HumanAI #CyberSecurity #HCI #GenerativeAI #TrustInAI