"Back in 2024 I wrote that AI helps me remove boring work at the margins. This is fine for a lone writer, but how to scale this to an entire team of technical writers? How to make the system helpful but not intrusive? These are all questions I’m starting to answer now, partly through experimentation, but also through dialogue with practitioners and colleagues. One answer I’m testing these days relies on GitHub Agentic Workflows.

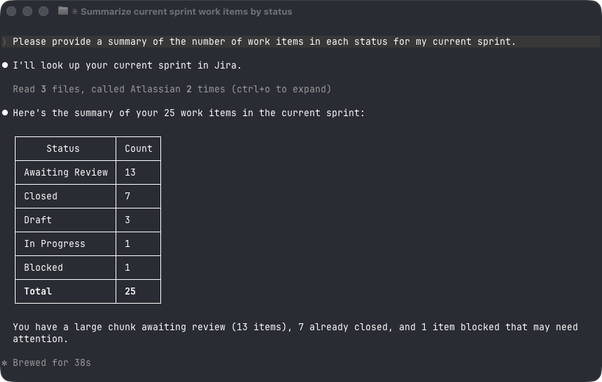

Following 'Four modes of AI-augmented technical writing', I thought of a way to distribute tooling effort across all four modes: a tiered system where each tier has a different relationship with the writer. The result is four tiers: intake, local assistance, automated governance, and an MCP server that provides reliable knowledge to all. The idea is that AI assists writers not just while they write, but also before and after they work on docs."

https://passo.uno/agentic-workflows-for-docs/

#TechnicalWriting #AI #GenerativeAI #LLMs #AIAgents #AgenticAI #AgenticWorkflows #SoftwareDocumentation #GitHub #DocsAsCode