When I set up this Debian box with ZFS, I tried putting swap on ZFS to see how it worked. There were warnings in the OpenZFS docs, but I gave it a go anyway.

The system has two fast NVMe drives connected directly to the CPU, mirrored for the root pool. Swap was created as part of rpool.

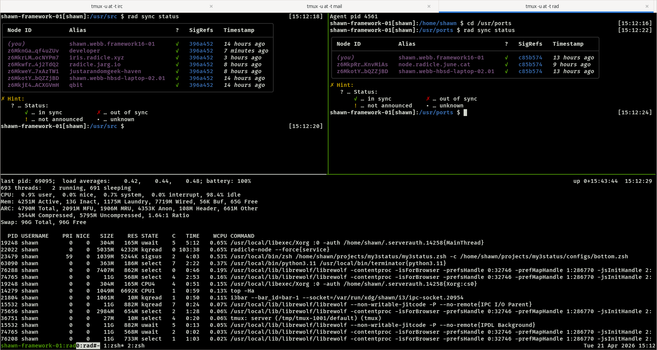

It's a bad configuration. After a number of hours, the load average consistently climbs into the high teens or higher. Turning off swap makes it drop immediately to ~1.0, which is typical for this box running a desktop while I futz around in browsers and shells.
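To link the load climb to swap activity rather than eyeballing `top`, a small logger run from cron or a loop could record the load average alongside swap usage. This is a minimal sketch. It assumes the standard Linux `/proc/loadavg` and `/proc/meminfo` formats, and the function names are hypothetical.

```python
from pathlib import Path


def parse_loadavg(text: str) -> tuple[float, float, float]:
    """Return the 1-, 5- and 15-minute load averages from /proc/loadavg text."""
    fields = text.split()
    return float(fields[0]), float(fields[1]), float(fields[2])


def swap_used_kib(meminfo: str) -> int:
    """Return swap in use (SwapTotal - SwapFree) in KiB from /proc/meminfo text."""
    values = {}
    for line in meminfo.splitlines():
        key, _, rest = line.partition(":")
        if key in ("SwapTotal", "SwapFree"):
            # Lines look like "SwapTotal:       8388604 kB"
            values[key] = int(rest.split()[0])
    return values["SwapTotal"] - values["SwapFree"]


if __name__ == "__main__":
    loadavg = Path("/proc/loadavg")
    meminfo = Path("/proc/meminfo")
    if loadavg.exists() and meminfo.exists():
        one, five, fifteen = parse_loadavg(loadavg.read_text())
        used = swap_used_kib(meminfo.read_text())
        print(f"load {one:.2f} {five:.2f} {fifteen:.2f}  swap used {used} KiB")
```

Logging this every few minutes would show whether the load creeps up as swap usage grows. It would also show whether the load falls right after `swapoff`.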

As far as I can tell, it's not possible to resize a ZFS partition in situ, so I added a swap partition on the third NVMe drive, which is formatted ext4 (connected through the south bridge, x4).
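After moving swap, it's worth confirming that nothing is still swapping to the zvol. This sketch parses `/proc/swaps` and flags entries that look like ZFS zvols. It assumes zvol block devices appear as `/dev/zdN`, and the device paths in the usage sample are illustrative rather than this box's real layout. The helper names are hypothetical.

```python
from pathlib import Path
from typing import NamedTuple


class SwapArea(NamedTuple):
    device: str
    kind: str
    size_kib: int
    used_kib: int
    priority: int


def parse_proc_swaps(text: str) -> list[SwapArea]:
    """Parse /proc/swaps text, skipping the header line."""
    areas = []
    for line in text.splitlines()[1:]:
        fields = line.split()
        if len(fields) != 5:
            continue  # blank or unexpected line
        device, kind, size, used, prio = fields
        areas.append(SwapArea(device, kind, int(size), int(used), int(prio)))
    return areas


def zvol_swaps(areas: list[SwapArea]) -> list[SwapArea]:
    """Return swap areas whose device name starts with "zd" (assumed zvol naming)."""
    return [a for a in areas if Path(a.device).name.startswith("zd")]


if __name__ == "__main__":
    swaps = Path("/proc/swaps")
    if swaps.exists():
        for area in zvol_swaps(parse_proc_swaps(swaps.read_text())):
            print(f"still swapping to zvol: {area.device} ({area.used_kib} KiB used)")
```

An empty result means every active swap area is somewhere other than a zvol.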

Not ideal, but it is perfectly serviceable.

NVMe drives are so fast: would I really notice a seat-of-the-pants difference if swap lived on partitions on the mirrored, north-bridge-connected drives instead? Probably not.

Leaving the sleeping dragons alone for now.