RAM overflow even after closing all tasks #ram #thinkpad #memoryusage #2510 #ubuntubudgie

How to significantly reduce memory usage of GTK4 applications in computers with low RAM running Ubuntu? #ram #memoryusage #gtk4

I use Ubuntu on a Chromebook with only 4 GB of memory, and GTK4 apps consume a lot of memory. Examples: even a hello-world window may consume more than 100 MB of memory. One app was reported to hav...

Why is my Ubuntu VM running out of memory and closing unrar? #memoryusage

Ars Technica: Google’s TurboQuant AI-compression algorithm can reduce LLM memory usage by 6x. “Google Research recently revealed TurboQuant, a compression algorithm that reduces the memory footprint of large language models (LLMs) while also boosting speed and maintaining accuracy.”

https://rbfirehose.com/2026/03/26/ars-technica-googles-turboquant-ai-compression-algorithm-can-reduce-llm-memory-usage-by-6x/

#AIEngineering #memoryusage #cheatsheet #bestpractice

https://machinelearningmastery.com/3-ways-to-speed-up-model-training-without-more-gpus/

3 Ways to Speed Up Model Training Without More GPUs - MachineLearningMastery.com

Most training jobs run slower than they need to, not because the GPU is weak, but because it isn’t being used efficiently. This article shows three proven ways to speed up model training without adding more GPUs.

Also, Apple apps are no better.

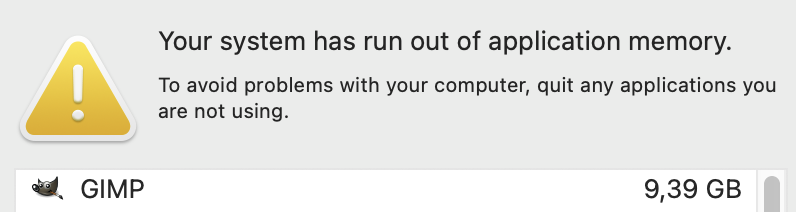

Also, Gimp with all images closed.

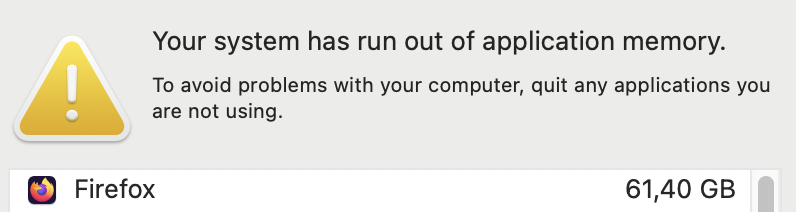

Uh, Firefox.