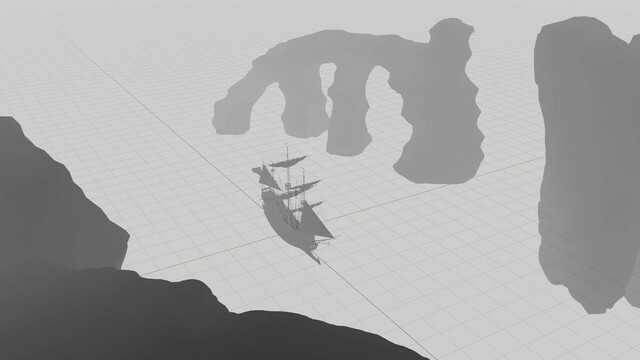

RT @TomLikesRobots: Another test combining #stablediffusion #depth2img with #ebsynth. This time a cel-shaded animation from a video of me (Not usually so croaky and full of cold!).

Background masked out. Need to see if better source using DSLR and lighting improves quality of end animation.

#aiart

QT @TomLikesRobots: A very quick test using depth guided #img2img and #EbSynth from @scrtwpns

Temporal coherence is far better than vid2vid and #depth2img creates a really accurate keyframe.

I need to do a deep dive into this. Masking and using AI generated environments?

#stablediffusion #aiart

[quoted tweet is unavailable]

2023-01-01 20:27:57 UTC