https://firethering.com/granite-4-1-ibm-open-source-model-family/

Granite 4.1: IBM's 8B Model Is Competing With Models Four Times Its Size - Firethering

IBM just released Granite 4.1, a family of open-source language models built specifically for enterprise use. It comes in three sizes, is Apache 2.0 licensed, and was trained on 15 trillion tokens with a level of pipeline obsession that's worth understanding. But there's one benchmark result I keep coming back to: the 8B model. Dense architecture, no MoE tricks, no extended reasoning chains. It matches or beats Granite 4.0-H-Small across basically every benchmark IBM ran. That older model is a mixture-of-experts with 32B total parameters, 9B of them active per token. This one has 8 billion. Full stop. That result is either very impressive or a sign that the old model was underbuilt. Probably both.

Here's how they built it, what the numbers actually say, and whether any of it matters for your use case.
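The "four times its size" framing rests on total parameter count. A quick sketch of the arithmetic, using only the figures quoted above, shows how much the comparison depends on which count you pick. Total parameters roughly track the memory needed to hold the model, while active parameters roughly track per-token compute. The function name `size_ratios` is just an illustrative helper, not anything from IBM.

```python
# Parameter counts as quoted in the article, in billions.
GRANITE_4_1_8B_DENSE = 8.0         # dense: every parameter is used for every token
GRANITE_4_0_H_SMALL_TOTAL = 32.0   # MoE: total parameters that must be stored
GRANITE_4_0_H_SMALL_ACTIVE = 9.0   # MoE: parameters active for each token


def size_ratios(dense: float, moe_total: float, moe_active: float) -> dict[str, float]:
    """Hypothetical helper: compare a MoE model to a dense one on total and active parameters."""
    return {
        "total_ratio": moe_total / dense,    # roughly tracks memory footprint
        "active_ratio": moe_active / dense,  # roughly tracks per-token compute
    }


if __name__ == "__main__":
    ratios = size_ratios(
        GRANITE_4_1_8B_DENSE, GRANITE_4_0_H_SMALL_TOTAL, GRANITE_4_0_H_SMALL_ACTIVE
    )
    print(f"total: {ratios['total_ratio']:.2f}x, active: {ratios['active_ratio']:.3f}x")
```

Running it prints `total: 4.00x, active: 1.125x`. By total parameters the older model is four times larger; by active parameters it does only about 12.5% more work per token. That gap is why the result can be both impressive and a hint that the older model was underbuilt.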

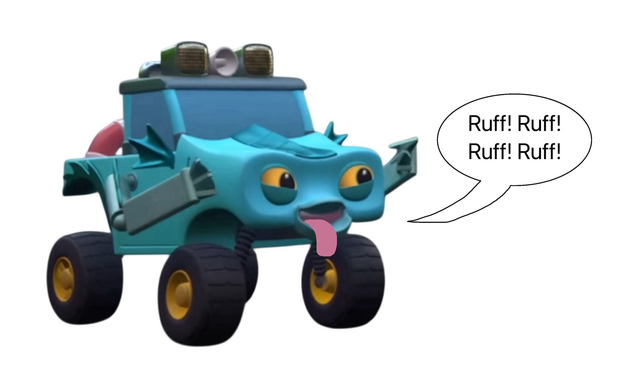

#mightymonsterwheelies #barking #actinglikeadog

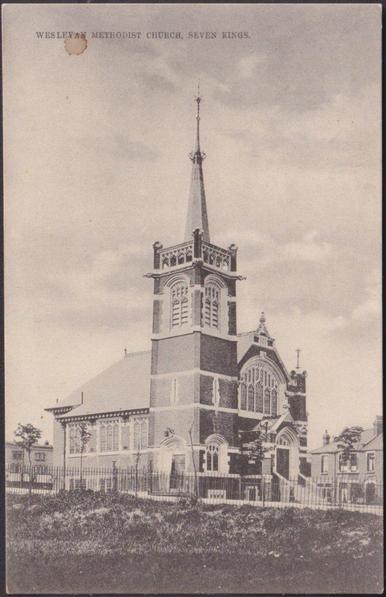

Wesleyan Methodist Church, Seven Kings, Essex, 1908 - JW King Postcard

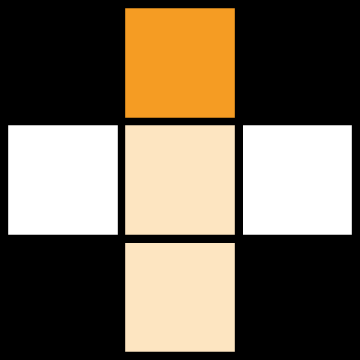

How about a 7x7 today? Hand-crafted by yours truly. :)

Click below for "Teeny Barking Breeds" and have a super-duper Thursday!

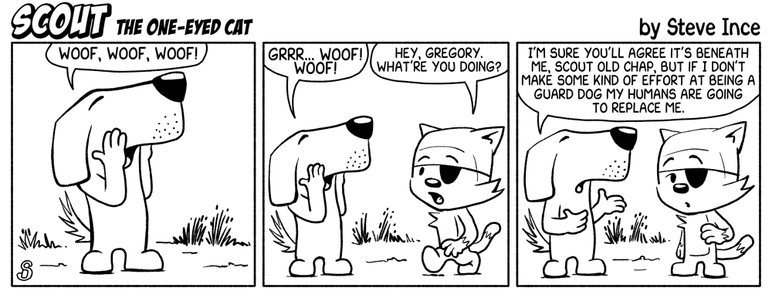

heard it all before...

heard it all before...

#mightymonsterwheelies #barking #actinglikeadog