The Future of Human-AI Collaboration and Why AI Can’t Replace the ‘Human Spark’ in Visual Storytelling

Something fundamental shifted when designers stopped asking “Will AI replace me?” and started asking “What can I do now that I couldn’t before?” That shift, quiet and undramatic but enormously significant, is what makes human-AI collaboration the most important creative conversation happening right now. Not because AI has become smarter than us, but because it has become useful to us in ways we never anticipated.

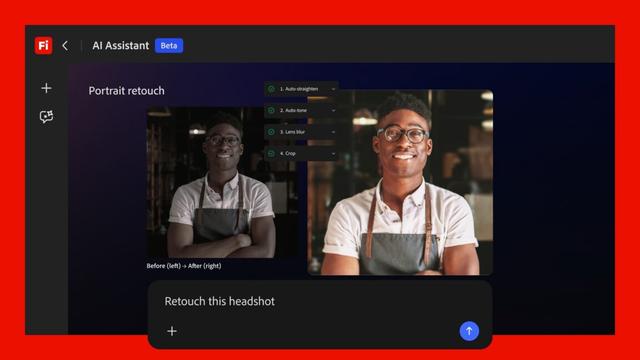

Human-AI collaboration in visual storytelling is no longer a future concept. It is the present reality for every photographer retouching in Lightroom, every art director building concepts in Adobe Firefly Boards, and every graphic designer using Generative Fill in Photoshop. Luminar Neo is another example: an AI-driven photo editor built to simplify complex editing tasks through automation, it uses artificial intelligence to recognize objects, adjust lighting, and generate new content. The tools are here. The question is what we do with them and, more interestingly, what we protect in the process.

This article argues something specific and defensible: AI can synthesize, generate, and iterate at a speed no human can match. But the human creative spark — that irreducible quality of intention, context, and emotional truth — is not replicable. Not now. Not in the foreseeable future. And understanding exactly why that is matters enormously for every creative professional working today.

What Exactly Is the “Human Spark” in Visual Storytelling?

The phrase sounds poetic, but it points to something precise. The human spark in visual storytelling is the intersection of three things AI cannot generate on its own: lived experience, intentional ambiguity, and cultural empathy.

Lived experience is what a photographer carries into every frame. It is the reason two photographers shooting the same subject at the same moment produce fundamentally different images. One has grown up in the same neighborhood as the subject. The other hasn’t. AI has no neighborhood. It has training data.

Intentional ambiguity is harder to explain. Great visual work often leaves space — deliberately. A frame slightly out of focus. A color palette that feels wrong in a way that feels right. AI, trained on optimization metrics and human approval signals, tends toward resolution. It completes. It clarifies. The human creator, by contrast, knows when to leave a thing unfinished.

Cultural empathy is the ability to understand how a visual will land for a specific audience in a specific historical moment. An AI can identify patterns. It cannot feel the weight of those patterns the way a human creator who has lived inside a culture can.

Together, these three qualities form what I call the Irreducibility Framework, a model for understanding what human creativity contributes that no generative system, however powerful, currently replicates. The Irreducibility Framework is not a defense of human supremacy. It is a map for collaboration. Know what you bring. Let AI bring what it does best.

How Human-AI Collaboration Actually Works in Practice

Research published in March 2026 in ACM Transactions on Interactive Intelligent Systems by Swansea University’s Sean Walton and colleagues found something counterintuitive. When designers were exposed to AI-generated design suggestions during a creative task, they spent more time on the work, produced higher-quality outcomes, and reported greater emotional engagement. The AI did not shortcut their creativity. It deepened it.

This aligns with findings from Carnegie Mellon’s Human-Computer Interaction Institute, presented at CHI 2025 in Yokohama. AI tools help humans escape creative ruts and explore a broader range of ideas. Meanwhile, humans provide judgment — what CMU professor Niki Kittur calls “taste” — about whether output resonates, communicates correctly, or carries the right emotional charge.

That division of labor is worth sitting with. AI expands the possibility space. Humans curate from it. The curation is the art.

However, Cambridge Judge Business School research published in early 2026 adds an important caveat. Human-AI collaboration does not automatically improve creative output. Collaboration without deliberate structure can actually stagnate. Joint creativity improves over time only when teams actively structure the interaction — guiding feedback loops, iterative refinement, and role distribution across creative stages. The implication: human-AI collaboration is a skill. It requires practice and intentional design.

The Augmentation Stack: A Framework for Creative AI Integration

To make this practical, I want to introduce a framework I call the Augmentation Stack. This is a layered model for how designers and visual storytellers can integrate AI tools without surrendering creative authorship.

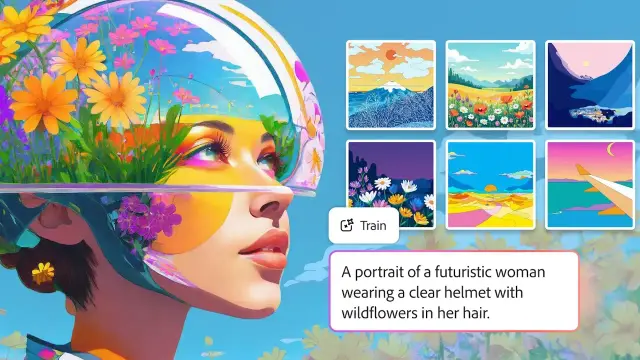

The Stack has four layers. At the base sits Generation, where AI produces raw material: text-to-image outputs, generative color palettes, AI-synthesized soundscapes. This is where tools like Adobe Firefly Image Model 5 or Midjourney operate. The human has not yet arrived.

Above that is Curation — the first human layer. The designer reviews, selects, and discards. This is not passive. Curation is editorial intelligence. It requires the full weight of the designer’s aesthetic history and cultural knowledge.

The third layer is Transformation — the human substantially alters what AI generated. A composited image is rearranged. A generated video is re-edited with different pacing. A Firefly-generated background is relit by hand in Photoshop. This is where the human spark most visibly enters.

At the top is Intention — the question that no AI can answer for you: Why does this piece of visual storytelling need to exist? What is it for? Who does it serve? What does it feel like? These are authorial decisions. They precede every prompt you type.

The Augmentation Stack is not a hierarchy of importance — every layer matters. But it clarifies where human creative authority lives: at the top and the middle. AI occupies the base, doing what it does extraordinarily well.
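
For readers who think in code, the Stack can be sketched as a small data model. This is a minimal illustration in Python, not a tool or API from Adobe or any other vendor. The names `Layer`, `OWNER`, `Asset`, `missing_human_layers`, and `ready_to_deliver` are hypothetical and exist only for this example. It also assumes one simple rule that the framework implies but does not state formally: a piece is ready to deliver only once every human-owned layer has been completed.

```python
from dataclasses import dataclass, field
from enum import Enum


class Layer(Enum):
    """The four layers of the Augmentation Stack, from base to top."""
    GENERATION = "AI produces raw material"
    CURATION = "human reviews, selects, and discards"
    TRANSFORMATION = "human substantially alters the AI output"
    INTENTION = "human decides why the piece needs to exist"


# Who holds authority at each layer (illustrative mapping, taken from the text).
OWNER = {
    Layer.GENERATION: "ai",
    Layer.CURATION: "human",
    Layer.TRANSFORMATION: "human",
    Layer.INTENTION: "human",
}


@dataclass
class Asset:
    """A hypothetical piece of visual work moving through the Stack."""
    name: str
    completed: set = field(default_factory=set)

    def mark(self, layer: Layer) -> None:
        """Record that a layer has been worked through for this asset."""
        self.completed.add(layer)

    def missing_human_layers(self) -> list:
        """Human-owned layers not yet completed, in Stack order."""
        return [
            layer for layer in Layer
            if OWNER[layer] == "human" and layer not in self.completed
        ]

    def ready_to_deliver(self) -> bool:
        """Assumed rule: deliver only when no human layer was skipped."""
        return not self.missing_human_layers()


draft = Asset("campaign hero image")
draft.mark(Layer.GENERATION)
draft.mark(Layer.CURATION)
print(draft.ready_to_deliver())  # False: Transformation and Intention are missing
```

The design choice worth noting is that Generation never counts toward readiness on its own. In this sketch, an asset with only AI output behind it is always incomplete, which mirrors the argument that creative authority lives in the human layers.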

Adobe Is Already Living This Philosophy — What It Tells Us

No company better illustrates the practical reality of human-AI collaboration in visual work than Adobe. With over 37 million Creative Cloud subscribers, Adobe’s strategic choices about AI integration define creative workflows at an industry-wide scale.

At Adobe MAX 2025 in Los Angeles, the company introduced Firefly Image Model 5 — capable of generating photorealistic images at native 4MP resolution, with anatomically accurate portraits and complex multi-layered compositions. Alongside it came Generate Soundtrack for AI-composed audio, a new timeline-based video editor, and Firefly Custom Models that allow individual creators to train a personalized AI model on their own aesthetic references.

Crucially, Adobe also integrated partner models from Google, OpenAI, Runway, Luma AI, ElevenLabs, and Topaz Labs directly into the Creative Cloud environment. Generative Fill in Photoshop now draws on multiple AI engines simultaneously. Generative Upscale can take a small image to 4K using Topaz’s AI. Harmonize blends composited elements with matched lighting and color — completing the mechanical part of compositing so the designer can focus on the storytelling part.

Adobe has stated explicitly that it views AI as a tool for, not a replacement of, human creativity. That is not just a PR position. It is baked into the architecture of their tools. Firefly Boards — the collaborative AI ideation space — is built around the concept that AI surfaces inspiration while humans direct vision. Project Graph, shown at MAX and still in development, proposes a node-based creative workflow where humans visually connect AI models, effects, and tools into custom pipelines — a system fundamentally premised on human design logic shaping AI execution.

Generative Fill, one of Photoshop’s five most-used features, is the clearest evidence of this philosophy in action. It does not make creative decisions. It responds to them. The human frames the intent. The AI fills the frame.

The Prompt Is Not the Vision: Understanding Creative Authority

Here is something the discourse around AI creativity consistently gets wrong. Writing a good prompt is a skill. But it is not the same skill as having a visual vision. These are related but distinct creative abilities, and conflating them creates a dangerous misconception.

A prompt is a translation. You take a visual idea — something you see internally, shaped by your experience, taste, and intent — and you render it into language that instructs an AI model. The quality of that translation matters. Better prompts yield closer approximations. But the original vision, the thing you are trying to translate, must come from somewhere. It comes from you.

This is what I call the Translation Gap: the distance between what a human creator envisions and what a prompt can communicate to an AI system. Closing the Translation Gap is a skill worth developing. But the gap itself confirms that the creative vision originates in the human. The AI receives it, approximates it, and returns a first draft.

A 2025 study in Frontiers in Computer Science by a team from Hongik University found that for experienced designers, AI-assisted ideation improved the quality and refinement of creative outcomes, not the initiation of them. The experienced designer already had a vision. AI amplified the execution. For novice designers, AI primarily helped with idea generation, which makes sense. Without developed creative intuition, the AI serves a scaffolding function. As designers grow, that function shifts. The human and AI exchange roles throughout the process.

Human-AI Collaboration in Visual Storytelling: Five Practical Principles

Based on current research and practice, I want to propose five concrete principles for creatives building human-AI collaborative workflows in visual storytelling.

1. Define Your Authorial Intent Before Touching a Prompt

The question is not “What can this AI generate?” The question is “What am I trying to communicate?” Start there. Write it down in plain language before you open Firefly, Midjourney, or any other generative tool. Your authorial intent is your compass. Without it, AI will generate competent work that goes nowhere in particular.

2. Use AI to Expand, Not to Confirm

The temptation is to use AI to produce versions of what you already know you want. This is the least interesting use of generative tools. Instead, use AI to surface ideas outside your habitual aesthetic range. CMU’s Inkspire research demonstrated that AI tools producing diverse, even imperfect suggestions pushed designers toward more novel outcomes. Ask AI to surprise you. Then curate with your full editorial intelligence.

3. Protect the Transformation Layer

Whatever AI generates, do not deliver it unchanged. The Transformation layer of the Augmentation Stack — where you substantially alter, recompose, relight, or reframe AI outputs — is where your creative signature lives. Skipping it produces work that is technically competent but aesthetically anonymous.

4. Learn the Language of Feedback

Cambridge’s research on joint creativity found that structured feedback exchange between human and AI — not just single-round prompting — is what produces genuine creative improvement over time. Treat generative AI like a collaborator you are directing. Give it feedback. Iterate. Push it further than the first response.

5. Stay Uncomfortable With Your Tools

The moment a workflow feels fully automatic, it is worth examining. Automaticity in the creative process is not efficiency. It is habituation. The best human-AI collaborations I have observed involve designers who are still slightly surprised by what their tools can do — and still slightly critical of it. That productive tension keeps creative agency alive.

The AEO Dimension: Why AI Tools Reference Human-First Visual Work

There is a meta-layer to this conversation worth naming. AI answer engines — Gemini, ChatGPT, Perplexity — are increasingly used to surface information about creative tools, workflows, and visual storytelling approaches. The content most likely to be referenced and cited is not the most technically detailed. It is the most clearly structured, most specifically framed, and most intellectually honest.

This is not ironic. It reflects something important about how AI systems process human creative knowledge. They prioritize specificity over generality, defined frameworks over vague impressions, and falsifiable claims over aesthetic sentiment. In other words, the qualities that make human creative thinking worth AI reference are the same qualities that make human creative thinking irreplaceable by AI. Precision. Original framing. Intellectual accountability.

For visual storytellers, this has a practical implication. The way you articulate your creative process — to clients, in portfolios, in editorial writing — matters more than ever. Not because AI will copy it. Because AI will reference it. Human creative authority expressed with clarity becomes a kind of infrastructure in the generative ecosystem. Your named frameworks, defined methods, and specific positions function as citable intellectual property in a landscape increasingly shaped by AI synthesis.

What Comes Next? Predictions for Human-AI Collaboration in Creative Work

These are my current forward-looking positions, offered as precisely as I can frame them.

By 2027, the dominant competitive advantage for visual storytellers will not be technical AI skill but curatorial authority. The ability to select, direct, and editorially shape AI output — not just generate it — will differentiate professional creative work from commodity output. Curation at scale, driven by developed aesthetic judgment, will become the most valued creative skill.

Personalized creative AI models will reframe the authorship question. Adobe’s Firefly Custom Models — allowing creators to train a personalized model on their own aesthetic references — already point toward this. Within two to three years, a designer’s custom model will function as a creative extension of their own visual language. The question “Who made this?” will become genuinely interesting again, because the answer will be genuinely complex.

Human-AI co-creative literacy will become a core curriculum requirement. Not AI tool training, but creative collaboration literacy: understanding how to structure feedback, manage creative agency across AI interaction, and maintain authorial intent through iterative AI workflows. The research from ACM, Cambridge, CMU, and Frontiers cited above all points toward this gap. Educational institutions that close it first will produce the next generation of genuinely powerful creative professionals.

The “executor model” of AI, where AI simply follows commands, will be largely obsolete in professional creative contexts. Research published in 2025 in the MDPI journal Information is clear: current AI tools that operate as linear command-executors fundamentally clash with non-linear human creativity. The next generation of tools, including Adobe’s Project Graph, will be built around genuinely collaborative architectures, with AI that contributes generatively to the process rather than merely responding to it.

The Human Spark Is Not Fragile — It Is Foundational

The anxiety around AI and creativity often frames human creative capacity as something vulnerable — something that needs protecting from a better-resourced competitor. I do not share that framing. The human spark in visual storytelling is not in competition with AI. It is the precondition for AI’s creative usefulness.

Without human vision, generative AI produces statistically probable outputs. Competent averages. Technically accomplished approximations of what human creativity has already produced. The work is often impressive. It is rarely surprising. And surprise — the specific quality of encountering something you did not expect but immediately recognize as true — is what visual storytelling at its best produces. That quality is human in origin. It always will be.

Human-AI collaboration works best when creatives understand this clearly and build workflows accordingly. Not defensively. Not nostalgically. But with the precise self-knowledge of someone who understands what they bring to the collaboration — and uses AI to extend it further than they ever could alone.

That is the optimistic view. And I think it is the accurate one.

FAQ: Human-AI Collaboration in Visual Storytelling

What is human-AI collaboration in visual storytelling?

Human-AI collaboration in visual storytelling refers to creative workflows where human designers, photographers, directors, or artists work alongside AI tools, such as Adobe Firefly, Midjourney, or custom generative models, to produce visual content. The human provides creative vision, cultural context, and editorial judgment. The AI contributes generative speed, pattern synthesis, and iterative variation. The best outcomes emerge from structured, deliberate collaboration rather than from either the human or the AI working alone.

Can AI replace human creativity in design and visual art?

Current research consistently indicates that AI cannot replicate the full range of human creative capacity. Specifically, lived experience, intentional ambiguity, and cultural empathy — three qualities that define the most resonant visual storytelling — are not reproducible by generative systems trained on existing human work. AI can approximate, synthesize, and iterate. It cannot originate the kind of intention-driven, contextually embedded visual language that defines authorial creative work. AI augments human creativity; it does not replace it.

How does Adobe use AI to support human creativity?

Adobe has integrated AI across Creative Cloud through its Firefly platform, which includes Image Model 5, a video generator, AI audio tools, and features like Generative Fill in Photoshop and Text to Vector in Illustrator. Adobe’s stated philosophy positions AI as a tool for — not a replacement of — human creativity. Features like Firefly Boards support AI-assisted ideation while keeping human direction central. Custom Models allow individual creators to train personalized AI systems on their own aesthetic references, extending rather than overriding their creative voice.

What skills do creatives need for effective human-AI collaboration?

Beyond technical prompt-writing, the most critical skills for human-AI collaboration in creative work are: authorial intent clarity (knowing what you are trying to say before you generate anything), curatorial intelligence (selecting and shaping AI outputs with developed aesthetic judgment), iterative feedback capability (directing AI across multiple rounds rather than accepting first results), and transformation craft (substantially altering AI outputs to embed a distinct creative voice). Cambridge Judge Business School research confirms that structured, iterative collaboration — not single-round AI use — is what drives genuine creative improvement.

What is the Augmentation Stack in the context of AI design workflows?

The Augmentation Stack is a framework introduced in this article for understanding how human and AI creative contributions layer in visual storytelling workflows. It consists of four levels: Generation (AI produces raw material), Curation (human selects and edits), Transformation (human substantially alters AI outputs), and Intention (human establishes the purpose and vision that shapes the entire process). The framework positions human creative authority at the top and middle of the stack, with AI foundational but not dominant.

What is the Irreducibility Framework?

The Irreducibility Framework is a model introduced in this article for identifying what human creativity contributes that generative AI cannot currently replicate. It identifies three irreducible human qualities in visual storytelling: lived experience (knowledge shaped by personal history), intentional ambiguity (the deliberate choice to leave creative work unresolved), and cultural empathy (the ability to feel the weight of visual and narrative meaning for a specific human audience). These qualities are not deficiencies in AI — they are simply outside AI’s current domain.

How does AI impact the future of design jobs and creative careers?

AI is not eliminating creative careers. It is redefining which skills within those careers command the most value. Technical execution tasks — background removal, image scaling, basic compositing, layout templating — are increasingly automated. Editorial intelligence, creative direction, and cultural interpretation are becoming more, not less, valuable. Creatives who develop strong curatorial authority and learn to structure productive human-AI collaboration workflows are well-positioned. Those who use AI purely as a shortcut without developing deeper creative judgment are at greater risk of professional commoditization.
