I just found out that #decibelLinux AKA #Gentoo Studio exists.

Apparently it's been around for a while. They recommend using #SystemRescueCD to install, which is a little funky but not surprising in the slightest. lol

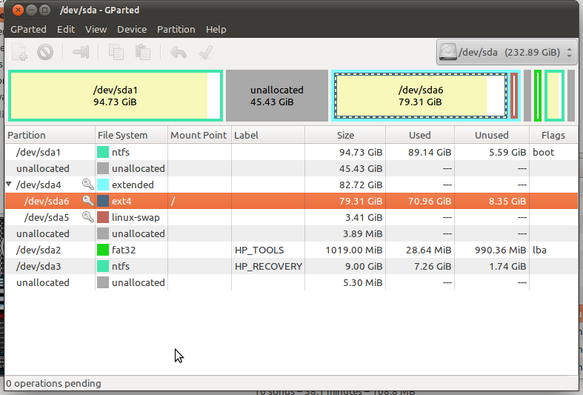

I spent today transferring files off my old #laptop b/c I realized it wasn't using its ancient dedicated GPU, and I messed up installing the drivers like a n00b. So now I'm going to try out some new OSes and see what I can get installed & running on RAID 0.

Other contenders are:

• #AVLinuxMX, #EnlightenmentDE & #MokshaDE versions

• #LMDE, which'll make a solid fallback b/c I'm most familiar w/ #Debian-based distros.

Recovering from a failed Proxmox upgrade

Today I’ll look at how I recovered from a failed Proxmox 8 to 9 upgrade on a small form factor Dell that I use. This system is one of two in the cluster, and it failed during the upgrade process. This failure was not caught by the pve8to9 utility, which gave me a clean report prior to the upgrade. However, during the last few steps of the upgrade, I got the message below, which made me think I […]

https://dustinrue.com/2025/12/recovering-from-a-failed-proxmox-upgrade/

https://www.linux-magazine.com/Online/Features/Resetting-Passwords-with-SystemRescueCd?utm_source=mlm

#TBT #SystemRescueCD #Linux #FOSS #GRUB #LILO #OpenSource #backup

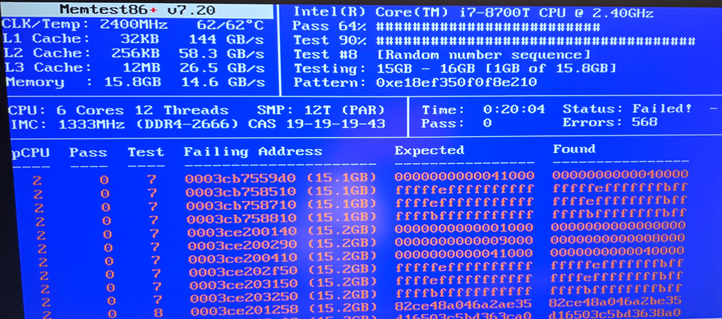

. . . sigh . . . Sometimes the RAM fairies get tired, I guess. I get it. What is #shit is when humans lie . . . hide this fact, and on-sell the RAM as a component in a 'tested', 'working' machine that fails to boot every 7th time.

#SystemRescueCD and #memtest86 are awesome by the way.