🚀 NEW on We ❤️ Open Source 🚀

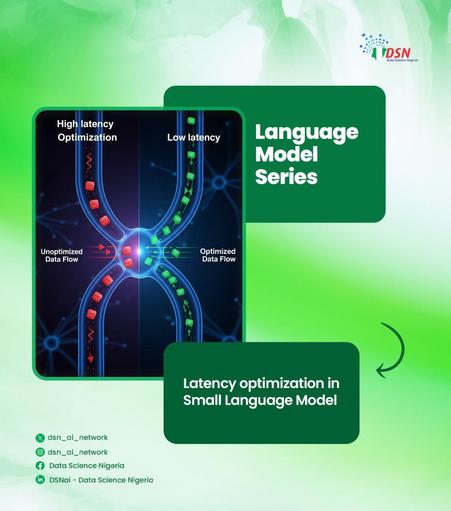

Small language models are becoming a serious production choice, not just a compromise.

Nihal Kaul looks at how open source tooling helps teams fine-tune, serve, and deploy smaller models with more control.

https://allthingsopen.org/articles/small-language-models-open-source-ai-production