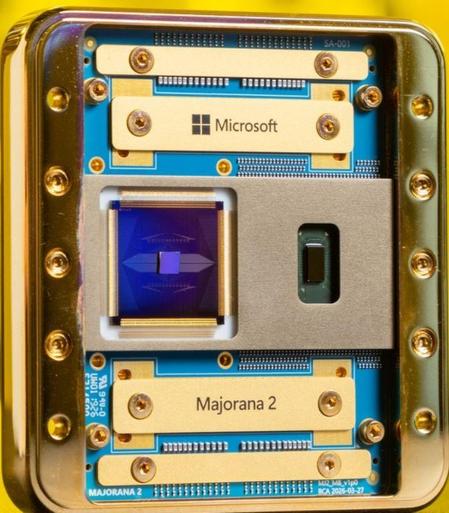

Majorana 2 Announced

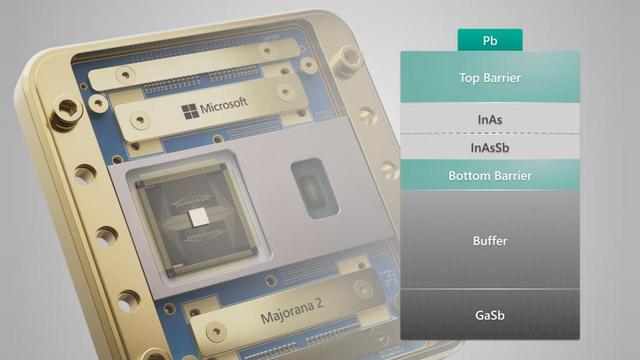

Microsoft has announced Majorana 2, claiming enhanced qubit performance with qubit lifetimes exceeding 20 seconds (Majorana 1 has qubit lifetimes between 1 and 12 milliseconds). I could not find definitive information on availability.

https://quantum.microsoft.com/en-us/insights/blogs/majorana-2-scalable-quantum-processor

#Quantum #Microsoft #Majorana2 #QuantumProcessor #QuantumComputing #Qubits #TopologicalQubits #AI #QuantumChip