ChatGPT is not Enough: Enhancing Large Language Models with Knowledge Graphs for Fact-aware Language Modeling

https://arxiv.org/abs/2306.11489

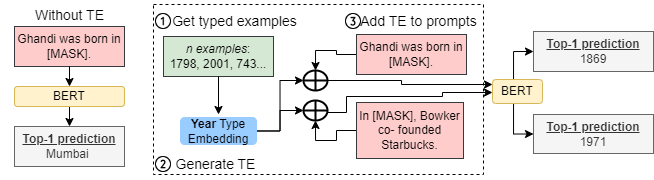

ChatGPT, a large language model (LLM), has gained considerable attention due to its powerful emergent abilities. Some researchers suggest that LLMs could replace structured knowledge bases like knowledge graphs (KGs) ...

#PretrainedLanguageModels #PretrainedModels #PLMs #LargeLanguageModels #LanguageModels #NLP #GPT #ChatGPT #KG #KnowledgeGraphs #epistemology