21.5-inch AI Touch Panel PC is powered by NVIDIA Jetson Orin NX module for industrial HMI applications


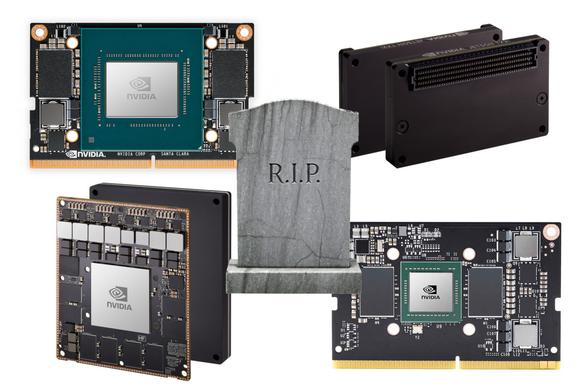

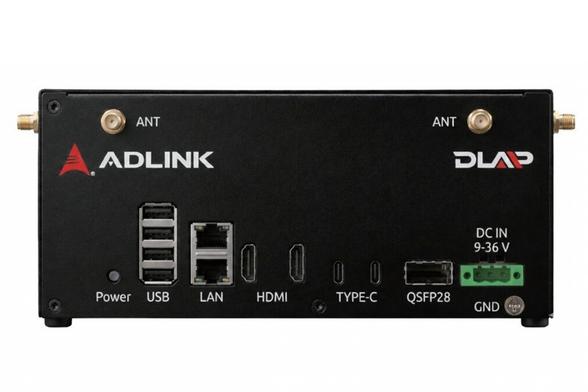

AAEON NIKY-2215-NX is a 21.5-inch Full HD AI Touch Panel PC powered by an NVIDIA Jetson Orin NX 8GB or 16GB module. It is designed for AI-enhanced HMI applications such as production line inspection systems and industrial monitoring dashboards. The panel PC features two GbE RJ45 jacks, four USB 3.2 Type-A ports, and CAN Bus, RS-232/422/485, and DIO DB-9/15 connectors. It also provides three M.2 sockets for NVMe storage, WiFi/Bluetooth, and 4G LTE/5G cellular connectivity. It can operate in a -5°C to +55°C temperature range, offers vibration and shock tolerance, and takes a 12V to 24V DC input.

AAEON NIKY-2215-NX specifications:

- SoM - NVIDIA Jetson Orin NX
  - Jetson Orin NX 16GB – 8-core Arm Cortex-A78AE CPU, 1024-core NVIDIA Ampere GPU, up to 157 TOPS, 16GB LPDDR5
  - Jetson Orin NX 8GB – 6-core Arm Cortex-A78AE CPU, 1024-core NVIDIA Ampere GPU, up to 117 TOPS, 8GB LPDDR5
- Storage - 128GB (default) NVMe SSD via M.2 2280 M-Key socket
- Panel
