My Journey to a reliable and enjoyable locally hosted voice assistant

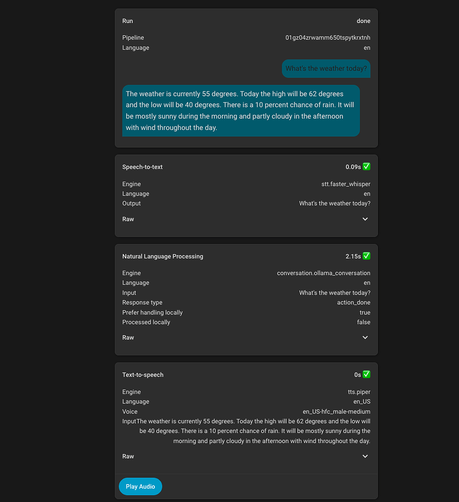

I have been watching Home Assistant's progress with Assist for some time. We previously used Google Home via Nest Minis, and have switched to a fully local Assist setup backed by local-first handling plus llama.cpp (previously Ollama). In this post I will share the steps I took to get to where I am today, the decisions I made, and why they were the best choices for my specific use case.

Links to Additional Improvements

Here are links to additional improvements posted in this thread.

New Features

Security C...