🤔 Random thought. I do not mean this in a pejorative way. More anthropologically speaking, in terms of "studying people" and observing their interactions with one another & emerging tech.

– How folks choose to use AI (or not) reveals little glimpses into their thought process and ability (willingness?) to pay attention to seemingly inconsequential details. Code nuance, so to speak.

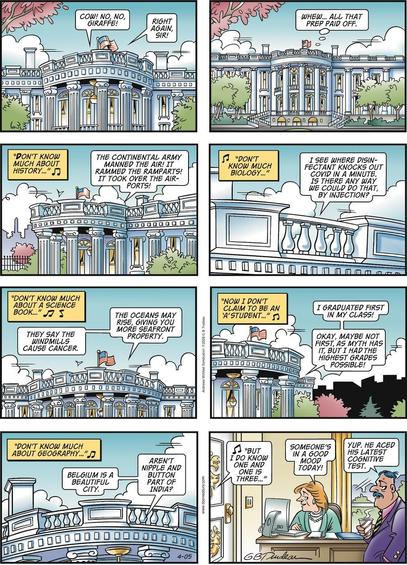

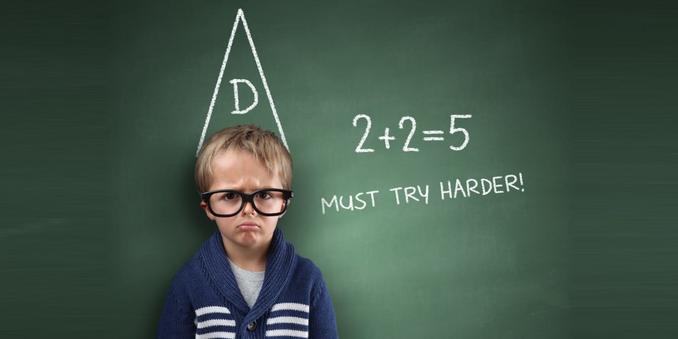

– In that way, it is a bit like the phenomenon known as the Dunning-Kruger effect. That is a fascinating story (link in the comments). Two psychologists posited that without a certain baseline level of self-awareness, humans tend to over-estimate their own abilities.

– I think that tends to compound with AI. Someone can prompt their way to passable results. Which to the untrained eye (vibe-* coder, designer, businessperson) look just like the "real thing."

– Emulating versions of success they have seen others have. Without comprehension. Like me in the 1990s, thinking if I could wear Air Jordans then… Maybe? A half-Asian kid could play basketball at that level.

Such workflows tend to result in some comical outcomes. Those who create the vibe-work present it to others who vibe-evaluate it, and everyone continues along happily until something breaks. That inadvertently places a heavier burden on those who understand the fundamentals, to serve as a backstop for quality control.

I half-jokingly said to another principal engineer friend:

> "I miss the days when iffy devs tended to move slowly. Now they can churn out iffy stuff at ludicrous speed."

Essentially, it is the difference between these two statements.

1️⃣ "AI writes better code than me. It can keep large chunks of contextual details straight. Whereas I cannot. Ergo, AI is better at writing code than all developers."

2️⃣ "AI writes better code than me. I use it to generate things that appear correct to my eyes. However, levels of human competence exist beyond my own."