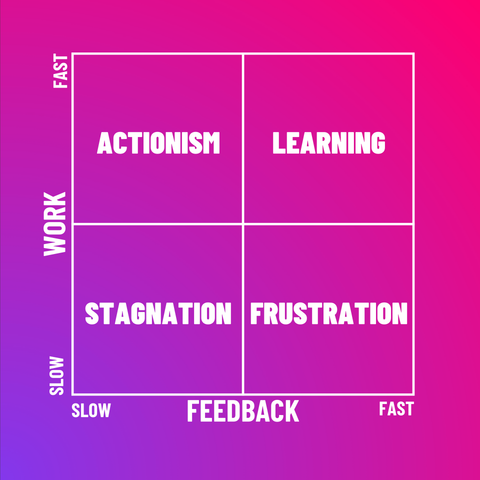

Most organizations don't have an output problem; they have a problem responding to signals. That matters because once t(decision) > t(production), the system becomes structurally disconnected from reality. Delivering faster achieves nothing if decisions take too long, because the knowledge gained loses its value before anyone acts on it.

A thread 🧵

#SystemsThinking #WorkFeedbackLoop #Flow #TheoryOfConstraints #DecisionLatency

(1/2)