ben (@contraben)

Introducing the Human Creativity Benchmark. It is the first benchmark to score AI models' creativity the way creative professionals would, and an attempt to measure models' creative performance in a new way.

SelfReflect measures whether an LLM's text summary of its uncertainty matches its actual answer distribution. Across 20 modern models: it doesn't, unless the model sees samples of its own answers first.

The negative result does more work than the metric itself. It fits a growing line of work suggesting LLM self-reports shouldn't be trusted as introspection. The practical workaround isn't cheap: N forward passes to sample, then a summarize pass.

An Apple paper introduces an information-theoretic metric for whether an LLM’s text summary of its own uncertainty matches the distribution it actually samples from, and finds that current models cannot do this without sampling help.
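
The sample-then-summarize workaround can be sketched roughly as follows. This is a toy illustration, not the paper's method: `sample_then_summarize` and `toy_model` are hypothetical names, the toy sampler stands in for N real forward passes, and the string join stands in for the second pass in which the model would summarize its own samples.

```python
import random
from collections import Counter


def sample_then_summarize(sample_answer, n, seed=0):
    """Draw n answers, then describe their empirical distribution."""
    rng = random.Random(seed)
    # Step 1: N sampling passes (here: calls to a stand-in sampler).
    answers = [sample_answer(rng) for _ in range(n)]
    counts = Counter(answers)
    dist = {answer: count / n for answer, count in counts.most_common()}
    # Step 2: the summarize pass. A real system would hand the samples
    # back to the model; this just formats the observed frequencies.
    summary = "; ".join(f"{answer}: {p:.0%}" for answer, p in dist.items())
    return dist, summary


def toy_model(rng):
    # Hypothetical model that answers "Paris" about 80% of the time.
    return rng.choices(["Paris", "Lyon"], weights=[0.8, 0.2])[0]


dist, summary = sample_then_summarize(toy_model, n=50)
print(summary)
```

The cost noted above shows up directly: every summary needs `n` sampling calls before the summarize step can run.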

KGLens turns a knowledge graph into test questions and uses Thompson sampling to zero in on a model's weakest facts.

The interesting bit is the output shape: a per-relation map of where the model is and isn't reliable, against a graph matched to your deployment. Sampling trick should generalize to red-teaming, jailbreak coverage, capability probing too.

An Apple paper turns curated knowledge graphs — structured fact databases — into a smart probe that locates a language model’s factual blind spots in far fewer questions than checking every fact one by one.
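
To make the sampling idea concrete, here is a minimal sketch of Beta-Bernoulli Thompson sampling over knowledge-graph relations. It is an assumption-laden toy, not KGLens's implementation: the function name `thompson_probe`, the relation names, and the simulated failure rates are all hypothetical, and a random draw stands in for actually asking the model a question.

```python
import random


def thompson_probe(failure_rates, rounds, seed=0):
    """Spend a question budget on the relations most likely to fail."""
    rng = random.Random(seed)
    # Beta(1, 1) prior per relation, stored as [alpha, beta]:
    # alpha counts observed failures, beta counts observed successes.
    stats = {rel: [1, 1] for rel in failure_rates}
    pulls = {rel: 0 for rel in failure_rates}
    for _ in range(rounds):
        # Draw a plausible failure rate for each relation from its
        # posterior and probe the one that currently looks weakest.
        rel = max(stats, key=lambda r: rng.betavariate(*stats[r]))
        # Stand-in for generating a question and grading the answer.
        failed = rng.random() < failure_rates[rel]
        stats[rel][0 if failed else 1] += 1
        pulls[rel] += 1
    # Posterior mean failure rate per relation: the per-relation map.
    estimates = {r: a / (a + b) for r, (a, b) in stats.items()}
    return pulls, estimates


pulls, estimates = thompson_probe(
    {"capital_of": 0.05, "born_in": 0.10, "spouse_of": 0.90},
    rounds=500,
)
print(pulls)
print(estimates)
```

In this toy run most of the budget goes to the relation with the highest simulated failure rate, which is the behavior that lets a probe find weak spots without checking every fact.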

GPT 5.5 is the second model to beat AISI's cyber range, after Mythos Preview beat the same challenge several weeks ago.

Full report and evaluation:

https://www.aisi.gov.uk/blog/our-evaluation-of-openais-gpt-5-5-cyber-capabilities

#cybersecurity #security #infosec #ai #artificialIntelligence #mythos #evaluation #claude #gpt #openai #memes

DigitalAssetBuzz (@DAssetBuzz)

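Shares hands-on impressions from testing DeepSeek's top LLM and notes that DeepSeek has very strong builders. No specific release details are given, but the post can be read as a positive assessment of the latest large language model's performance.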

Wes Roth (@WesRoth)

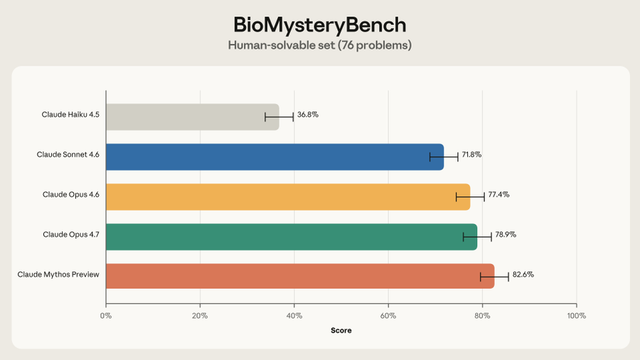

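Anthropic has released BioMysteryBench, a new evaluation framework that assesses AI performance on complex, open-ended bioinformatics and biological data analysis problems. It consists of 99 high-difficulty problems curated from real biological datasets and measures AI reasoning and analysis ability in biology.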

Anthropic has introduced BioMysteryBench, a new evaluation framework that tests AI performance on complex, open-ended bioinformatics and biological data analysis problems. The test consists of 99 high-difficulty problems curated from real-world biological datasets. To establish

Abby O'Neill (@abby_k_oneill)

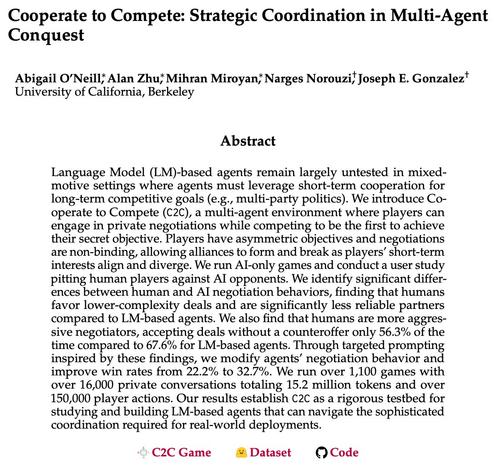

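Asks whether AI agents can be trusted to negotiate on a country's behalf, points out that current evaluations fall short on long-horizon, asymmetric, non-binding cooperation, and introduces C2C (Cooperate to Compete), a new benchmark/testbed for cooperation with rivals.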

Would you trust an AI agent to negotiate on your country's behalf at the G20? Real coordination is long-horizon, asymmetric, and non-binding; current multi-agent evaluations miss this. We build Cooperate to Compete (C2C): a testbed for LM agents coordinating with rivals. 🤝🔪🎭

TestingCatalog News (@testingcatalog)

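Plurai has introduced "vibe-training", a way to quickly build real-time, tailored evals and guardrails for agents. It stresses that you can go from intent to a production-ready API endpoint in minutes, and that SLMs deliver sub-100ms latency at more than 8x lower cost.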

Plurai introduced vibe-training 👀 A new way to build real-time, tailored evals and guardrails for your agent, with high accuracy at a fraction of the LLM cost. > Goes from intent to a production-ready API endpoint in minutes > SLMs run at sub-100ms latency, over 8x cheaper

📌 The narrative CV: a new way to showcase your scientific career at the Université de Lorraine

As research assessment is being reformed, the Université de Lorraine is proposing a new way to highlight the impact of research work beyond publication lists: the narrative CV.

This format lets you highlight:

✅ Varied outputs (data, software, science outreach…)

✅ A personalized approach consistent with the recommendations of the Coalition for Advancing Research Assessment (CoARA) @coarafrance

🔧 How do you build one?

1️⃣ Build on a solid digital identity (ORCID, idHAL)

2️⃣ Tell your story using the narrative section of the CNU application-file CV format to answer the four questions posed by the Royal Society model

💡 Need support?

→ See the dedicated page on the Science Ouverte à l’Université de Lorraine website

→ Sign up on the PEP-CV platform (peer exchange around the narrative CV)

→ In addition, contact the Bibliometrics team: [email protected]

🔗 Learn more: https://scienceouverte.univ-lorraine.fr/cv-narratifs/

Definition: In 2022, writing in Nature, Chris Woolston argued that CVs "could be more effective if they allowed room for narratives", meaning brief pieces of writing that tell the story of a scientist, their achievements, or their impact.