#LearnLockpickingWithAlice lesson 11 (part two): DIY padlock shims!

Who wants to spend $5 on fancy padlock shims when you can make shitty ones for the price of a soda can (and maybe a finger or two)?

Today, I'm going to teach y'all how to turn a soda can into like 6 disposable padlock shims.

⚠️ The cut edges of a soda can are razor sharp, so please be careful; your fingers will thank you 🫶

1. To start, get yourself an empty can of soda, beer, energy drink, etc.

2. Using some scissors you don't care about, cut the top and bottom off along the bevel. You should now have a tube that is open at both ends.

3. Cut the tube down one side and flatten it out into a rectangle, then cut it into 2.5" x 1" strips.

4. Take a strip and (with a marker) divide it into a 4x4 grid.

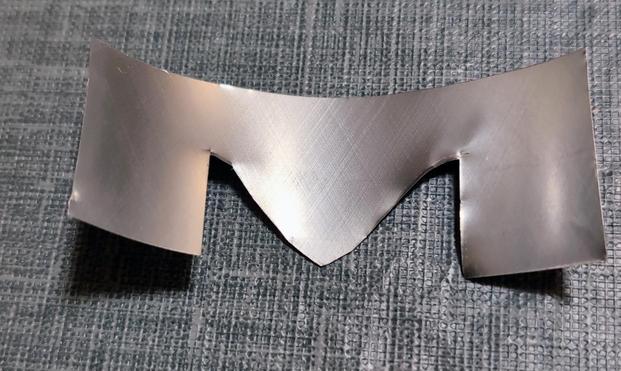

5. Using the grid as a guide, cut an "M" shape out of the bottom half of the 2.5" x 1" rectangle.

6. Fold the top ¼ of the rectangle down.

7. Fold the legs of the "M" up and over the top on each side.

8. Shape the shim around a lock shackle.

9. Shim something.