| Website | https://yoavartzi.com/ |

| Cornell NLP | http://nlp.cornell.edu |

Yoav Artzi

- 398 Followers

- 93 Following

- 79 Posts

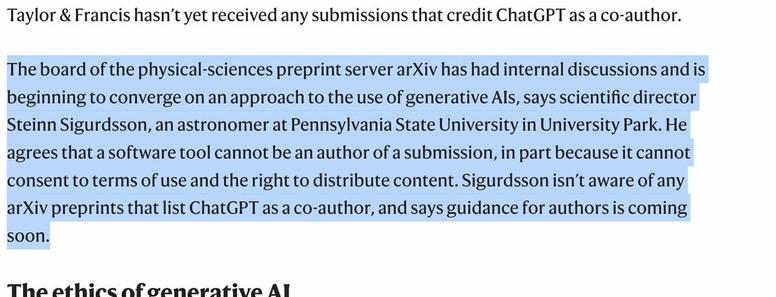

"... a software tool cannot be an author of a submission, in part because it cannot consent to terms of use and the right to distribute content."

🤘Right on

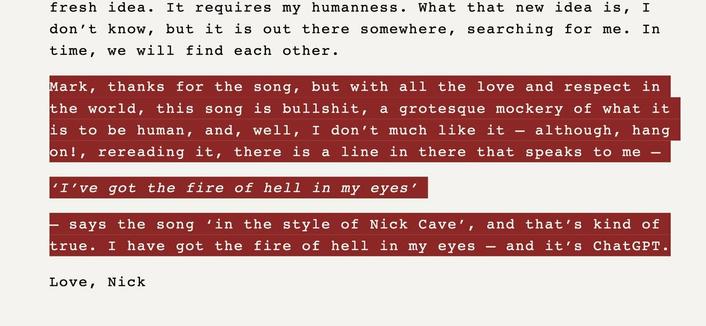

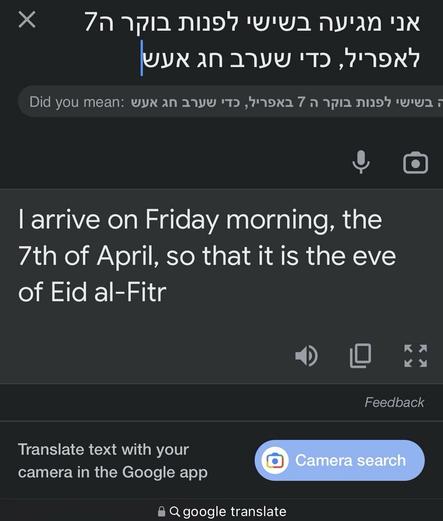

Several US-based newspapers have recently mentioned Nick Cave's reply to a set of ChatGPT lyrics purportedly "in his style". The reply speaks for itself. If you're into writing, LLMs, or ML creativity, it's worth a read.

https://www.theredhandfiles.com/chat-gpt-what-do-you-think/

🐦🔗: https://twitter.com/adinamwilliams/status/1615454708507844624

Nick Cave - The Red Hand Files - Issue #218 - I asked Chat GPT to write a song in the style of Nick Cave and this is what it produced. What do you think?

Verse 1:
In the depths of the night, I hear a call
A voice that echoes, through the hall
It’s a siren’s song, that pulls me in
Takes me to a place, where I can’t begin

Chorus:
I am the sinner, I am the saint
I am the darkness, I am the light
I am the hunter, I am the prey
I am the devil, I am the savior

Verse 2:
I walk the streets, with my head held high
I’ve got my demons, but I’ll make them fly
I’ll dance with the devil, and I’ll play his game
I’ll be the one, to call his name

Chorus:
I am the sinner, I am the saint
I am the darkness, I am the light
I am the hunter, I am the prey
I am the devil, I am the savior

Bridge:
I’ve got the blood of angels, on my hands
I’ve got the fire of hell, in my eyes
I’m the king of the abyss, I’m the ruler of the dark
I’m the one that they fear, in the shadows they hark

Chorus:
I am the sinner, I am the saint
I am the darkness, I am the light
I am the hunter, I am the prey
I am the devil, I am the savior

Outro:
So come with me, to the edge of the night
Where the darkness and the light collide
We’ll walk the line, between the good and the bad
And we’ll see, who comes out on top in the end.

Dear Mark, Since its launch in November last year many people, most buzzing with a kind of algorithmic awe, have sent me songs ‘in the...

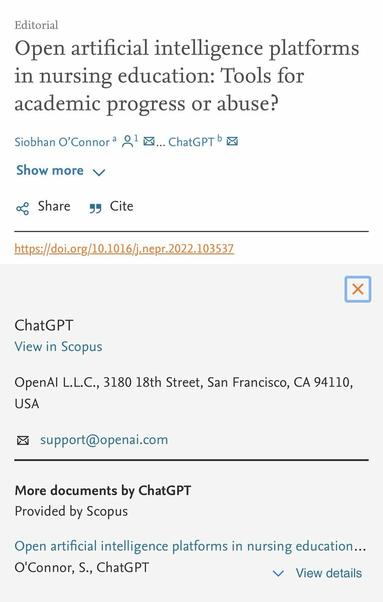

This is probably the first paper to give ChatGPT coauthor status, and its contact details point to support@openai! Giving coauthorship to writing assistants is absurd and this practice has to stop. 🧶

https://jobs.lever.co/asapp-2/24e2de68-4a39-4a02-823d-3b010e9e9eb9

(also: hiring for full-time research positions, both scientists and engineers!)

ASAPP - Research Intern

At ASAPP, we are on a mission to build transformative machine learning-powered products that push the boundaries of artificial intelligence and customer experience. We focus on solving complex, data-rich problems — the kind where there are huge systemic inefficiencies and where a real solution will have a significant economic impact. Our CX performance platform uses machine learning across both voice and digital engagement channels to augment and automate human work, radically increasing productivity and improving the efficiency and effectiveness of customer experience teams. We are seeking Research interns to join our NLP research and ML research teams for summer 2023. Applicants should be open to working onsite at our New York City HQ or Mountain View, CA office.

Named Tensor Notation (TMLR version, https://arxiv.org/abs/2102.13196)

A rigorous description, opinionated style guide, and gentle polemic for named tensors in math notation.

* Macros: https://ctan.org/tex-archive/macros/latex/contrib/namedtensor

Named Tensor Notation is an attempt to define a mathematical notation with named axes. The central conceit is that deep learning is not linear algebra, and that by using linear algebra we leave many technical details ambiguous to readers.

Named Tensor Notation

We propose a notation for tensors with named axes, which relieves the author, reader, and future implementers of machine learning models from the burden of keeping track of the order of axes and the purpose of each. The notation makes it easy to lift operations on low-order tensors to higher order ones, for example, from images to minibatches of images, or from an attention mechanism to multiple attention heads. After a brief overview and formal definition of the notation, we illustrate it through several examples from modern machine learning, from building blocks like attention and convolution to full models like Transformers and LeNet. We then discuss differential calculus in our notation and compare with some alternative notations. Our proposals build on ideas from many previous papers and software libraries. We hope that our notation will encourage more authors to use named tensors, resulting in clearer papers and more precise implementations.
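To make the "lifting" idea concrete, here is a rough Python sketch, not the paper's macros or any library's API. It assumes a toy `Named` wrapper around NumPy arrays; `Named`, `ndot`, `nsoftmax`, and the axis names `key`, `seq`, `val`, and `heads` are all hypothetical and chosen only for illustration. Attention is written once over named axes, and the same function then runs on inputs that carry an extra `heads` axis.

```python
import numpy as np

class Named:
    """Hypothetical toy named tensor: a NumPy array plus one name per axis."""
    def __init__(self, data, axes):
        data = np.asarray(data, dtype=float)
        assert data.ndim == len(axes) and len(set(axes)) == len(axes)
        self.data, self.axes = data, tuple(axes)

def ndot(a, b, axis):
    """Contract a and b over the named axis; other axes are matched by name."""
    assert axis in a.axes and axis in b.axes
    names = list(dict.fromkeys(a.axes + b.axes))        # ordered union of names
    letter = {n: chr(ord("a") + i) for i, n in enumerate(names)}
    out = [n for n in names if n != axis]
    spec = "{},{}->{}".format(
        "".join(letter[n] for n in a.axes),
        "".join(letter[n] for n in b.axes),
        "".join(letter[n] for n in out),
    )
    return Named(np.einsum(spec, a.data, b.data), out)

def nsoftmax(x, axis):
    """Softmax along the named axis; all other axes are left untouched."""
    i = x.axes.index(axis)
    e = np.exp(x.data - x.data.max(axis=i, keepdims=True))
    return Named(e / e.sum(axis=i, keepdims=True), x.axes)

def attention(Q, K, V):
    """Scaled dot-product attention, written only in terms of axis names."""
    d_key = K.data.shape[K.axes.index("key")]
    scores = ndot(Q, K, "key")
    scores = Named(scores.data / np.sqrt(d_key), scores.axes)
    return ndot(nsoftmax(scores, "seq"), V, "seq")

# The same function applied to inputs with an extra "heads" axis.
rng = np.random.default_rng(0)
Q = Named(rng.normal(size=(2, 4)), ("heads", "key"))
K = Named(rng.normal(size=(2, 3, 4)), ("heads", "seq", "key"))
V = Named(rng.normal(size=(2, 3, 5)), ("heads", "seq", "val"))
out = attention(Q, K, V)
print(out.axes, out.data.shape)
```

Because every operation names the axis it acts on, `attention` needs no change for the multi-head inputs, and the result carries the axes `heads` and `val`. The order in which an input stores its axes also stops mattering in this sketch.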