Computational functionalism is such an unreasonable axiom for a property that is inherently tied to internal experience and thus to intensionality. And if I see one more paper saying "we assume computational functionalism" when writing about the purported potential consciousness of AI systems, I am going to lose it, because the massive leap of faith is in declaring computational functionalism remotely reasonable, let alone true.

@wmacmil

who cares about prison time, my worst nightmare is my shitty commit messages making it into the court record

even tho im sick, back on the #road after a few days in stavanger. also #preikestolen is a total #shitshow, find another hike if ur ever around here

only in scandinavia do you stumble upon this

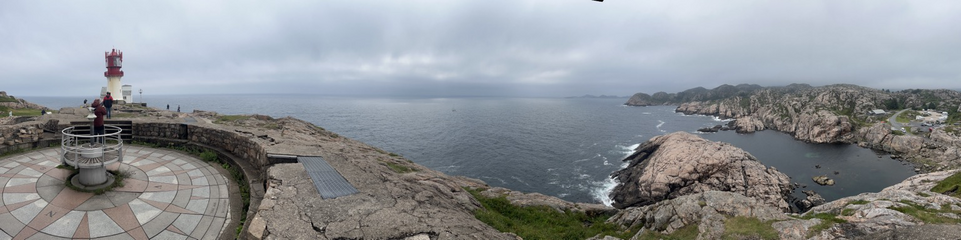

the southernmost point of norways mainland

keepin on keepin on #bicycle

rode all day in the rain, felt a bit cursed, but that was remedied when i came to my sleeping place, a wwii artillery spot with an epic view. sleeping on the grave of a french cannon 🙈☮️