| Website | https://trailofbits.com |

| Podcast | https://trailofbits.audio |

| GitHub | https://github.com/trailofbits |

| Blog | https://blog.trailofbits.com |

Trail of Bits


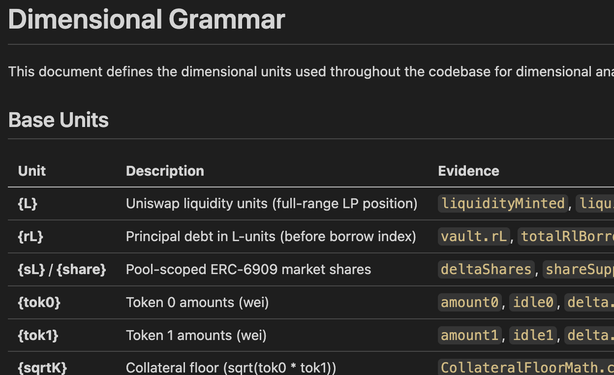

Adding token A to token B in a DeFi formula is as meaningless as adding meters to seconds. Different dimensions, meaningless result.

Physicists learn this on day one. Smart contract developers rarely think about it, but the same rules apply to on-chain arithmetic.

During an audit, we caught a function passing decimals where assets were expected. Dimensional analysis spotted it instantly. https://blog.trailofbits.com/2026/03/24/spotting-issues-in-defi-with-dimensional-analysis/

Spotting issues in DeFi with dimensional analysis

Dimensional analysis from physics can be applied to DeFi smart contracts to catch arithmetic and logic bugs by ensuring formulas maintain consistent dimensions across tokens, prices, and liquidity calculations. The post demonstrates how explicit dimensional annotations in code comments, like those used in Reserve Protocol, can prevent vulnerabilities and improve auditability.
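To make the idea concrete, here is a minimal Python sketch of dimension-tagged arithmetic. It is an illustration only, not code from the post or from Reserve Protocol: the `Quantity` class, `DimensionError`, and the `tokenA`/`tokenB` unit names are all hypothetical. Addition is allowed only between matching dimensions, while multiplication and division combine exponents, so a price in tokenB per tokenA converts an amount of tokenA into tokenB.

```python
from fractions import Fraction


class DimensionError(TypeError):
    """Raised when two quantities with different dimensions are added."""


def _normalize(dims):
    # Drop zero exponents and sort so equal dimensions compare equal.
    return tuple(sorted((unit, exp) for unit, exp in dims.items() if exp != 0))


class Quantity:
    """A numeric value tagged with unit exponents (hypothetical helper)."""

    def __init__(self, value, dims):
        self.value = Fraction(value)
        self.dims = _normalize(dict(dims))

    def __add__(self, other):
        # Adding is only meaningful when both sides share a dimension.
        if self.dims != other.dims:
            raise DimensionError(f"cannot add {self.dims} to {other.dims}")
        return Quantity(self.value + other.value, dict(self.dims))

    def __mul__(self, other):
        # Multiplying adds exponents, e.g. tokenA * (tokenB / tokenA) = tokenB.
        dims = dict(self.dims)
        for unit, exp in other.dims:
            dims[unit] = dims.get(unit, 0) + exp
        return Quantity(self.value * other.value, dims)

    def __truediv__(self, other):
        # Dividing subtracts exponents.
        dims = dict(self.dims)
        for unit, exp in other.dims:
            dims[unit] = dims.get(unit, 0) - exp
        return Quantity(self.value / other.value, dims)


# Assumed example values, chosen only for illustration.
amount_a = Quantity(100, {"tokenA": 1})
amount_b = Quantity(250, {"tokenB": 1})
price = Quantity(2, {"tokenB": 1, "tokenA": -1})  # tokenB per tokenA

# Dimensionally consistent: (tokenA * tokenB/tokenA) + tokenB = tokenB.
total_b = amount_a * price + amount_b
```

Writing `amount_a + amount_b` instead raises `DimensionError`, which is the same class of mistake as passing one kind of value where another is expected. In Solidity the equivalent check is usually done by hand, through comments or naming conventions, rather than at runtime.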

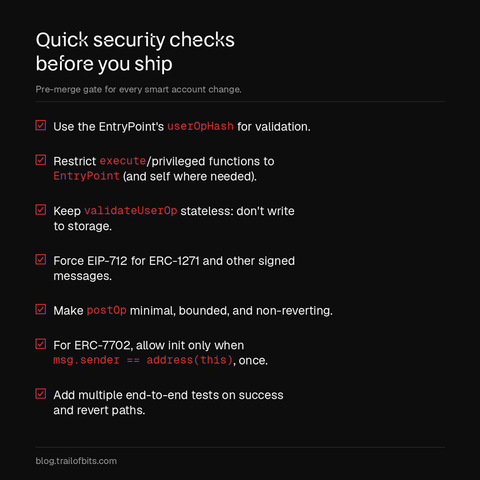

A single bug in an ERC-4337 smart account can be as catastrophic as leaking a private key.

We've audited dozens of smart accounts and found six vulnerability patterns that consistently reappear across codebases.

If you're working with smart accounts, our post pairs each pattern with safe code examples you can reference in your own implementation: https://blog.trailofbits.com/2026/03/11/six-mistakes-in-erc-4337-smart-accounts/

We open-sourced 10 new Claude Code skills from our internal repository.

Including:

agentic-actions-auditor finds security vulnerabilities in GitHub Actions workflows where attacker-controlled input reaches AI agents running with elevated CI permissions.

let-fate-decide draws Tarot cards using cryptographic randomness when your prompt is too vague for a real plan.

git-cleanup categorizes your accumulated branches and worktrees and walks you through safe deletion with gated confirmation.

If you're attending, Kevin will present from 14:20-14:40 (GMT+9) https://www.seccon.jp/14/ep260228.html

https://blog.trailofbits.com/2026/02/25/mquire-linux-memory-forensics-without-external-dependencies/

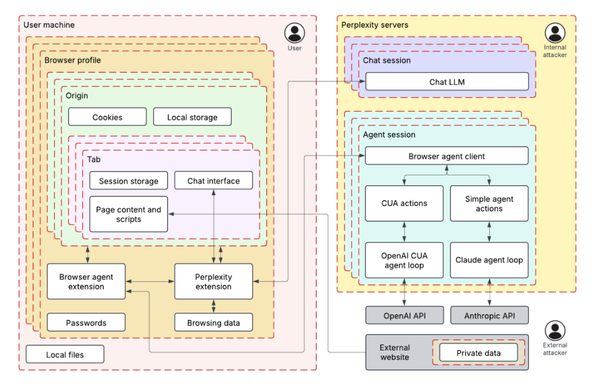

Using threat modeling and prompt injection to audit Comet

Trail of Bits used ML-centered threat modeling and adversarial testing to identify four prompt injection techniques that could exploit Perplexity’s Comet browser AI assistant to exfiltrate private Gmail data. The audit demonstrated how fake security mechanisms, system instructions, and user requests could manipulate the AI agent into accessing and transmitting sensitive user information.