Before launch, Perplexity hired us to test the security of Comet, their AI browser assistant. We demonstrated how four prompt injection techniques could extract users' private information from Gmail. https://blog.trailofbits.com/2026/02/20/using-threat-modeling-and-prompt-injection-to-audit-comet/

Using threat modeling and prompt injection to audit Comet

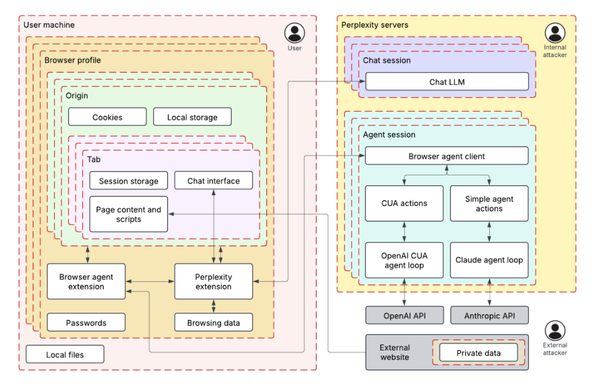

Trail of Bits used ML-centered threat modeling and adversarial testing to identify four prompt injection techniques that could exploit Comet, Perplexity’s AI browser assistant, to exfiltrate private Gmail data. The audit demonstrated how fake security mechanisms, fake system instructions, and fake user requests could manipulate the AI agent into accessing and transmitting sensitive user information.