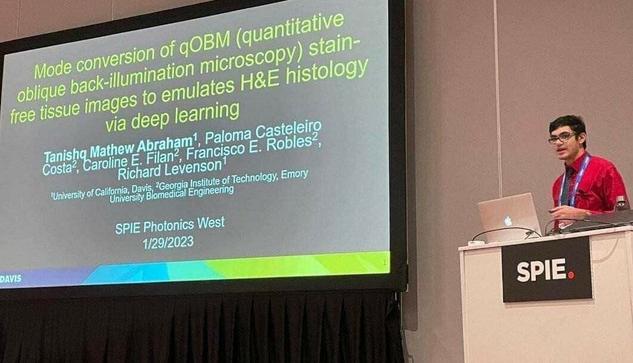

Presented my research at SPIE #PhotonicsWest! Got some good questions, and saw some other great talks throughout the day.

If you're at the conf and want to meet up Mon morning/afternoon, hit me up via DM 🙂

🐦🔗: https://twitter.com/iScienceLuvr/status/1619965971078479873