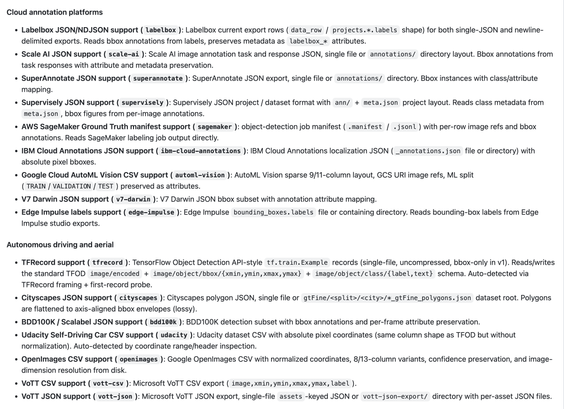

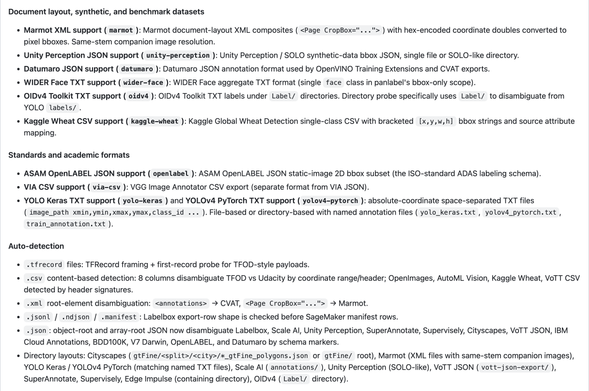

Just made a bumper release this evening: 25 new format adapters covering the major cloud annotation platforms, autonomous-driving and aerial datasets, document layout, synthetic data, and the long tail of academic/community formats. Panlabel now reads and writes 40+ object detection annotation formats, which I think covers almost all the options!

I think I'll move on to a new domain / format now. Either segmentation or maybe I'll dip my toes into text datasets / formats!

https://github.com/strickvl/panlabel/releases/tag/v0.7.0