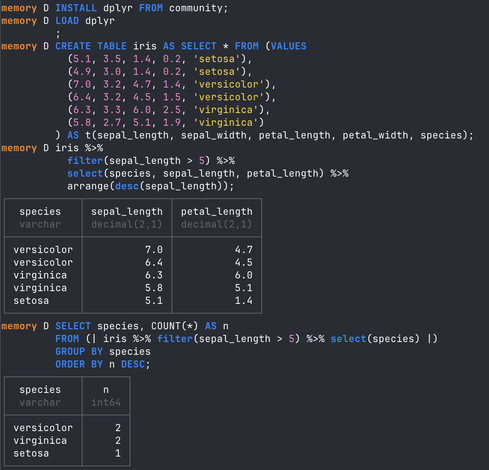

I've been keeping an eye on this since I first heard about it, and it looks like it now works! DuckDB has an installable dplyr community extension that lets you use dplyr code to slice and dice data within a DuckDB session.

https://duckdb.org/community_extensions/extensions/dplyr

Shoutout to the author, @mrchypark, and to @DSLC for their 'DuckDB in Action' book club.