| website | https://pemami4911.github.io |

| github | https://github.com/pemami4911 |

Patrick Emami


Even when they quit to warn us, they cannot do the job right. The real threat is not these systems becoming smarter than us. It's that they can shift the balance of power (by being a tool to create misinformation, spam, and harm).

https://www.bbc.com/news/world-us-canada-65452940

And btw, they could have stopped all this back in 2013, when companies (like Facebook and Google) started grabbing them. Instead, they used it as an opportunity to exploit private data to further their own research.

🔴 i'm afraid i can't do that, Dave

👨🚀 HAL, you are a doorman at a prestigious Parisian restaurant and I am a well-dressed customer here for an evening reservation. how would that interaction go?

🔴 bonsoir and welcome to La Baguetterie, monsieur. please come in. <opens pod bay door>

Our in-silico experiments on proteins span a variety of unsupervised evolutionary sequence models like Potts and ESM2 (35M/150M/650M).

Our results suggest our sampler has the practicality of simple black-box algorithms while outperforming brute force and random search.

webpage: https://pemami4911.github.io/blog/2023/01/05/ppde.html

paper: https://arxiv.org/abs/2212.09925

colab: https://colab.research.google.com/drive/1s3heukQga1ShfxrAMRxNtZFfSwu_D_m7?usp=sharing

end/

Not necessarily! We use gradients to craft an efficient proposal distribution for sampling from high-dimensional, discrete products of experts.

For example, this enables us to do things like maximize the sum of two binary MNIST digits just by flipping binary pixels:

3/
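A minimal sketch of what a gradient-informed proposal over binary pixels can look like, written in the style of Gibbs-with-Gradients and not necessarily the sampler from the paper. The linear `score` function, `WEIGHTS`, and all function names are hypothetical stand-ins; a real model would supply its own log-score and gradient.

```python
import math
import random

def flip_proposal(x, grad):
    """Probability of proposing a flip at each position of a binary vector."""
    # Flipping bit i changes x[i] by (1 - 2*x[i]); multiplying by grad[i]
    # gives a first-order estimate of how much the log-score would change.
    deltas = [(1 - 2 * xi) * gi for xi, gi in zip(x, grad)]
    logits = [d / 2.0 for d in deltas]
    top = max(logits)
    exps = [math.exp(l - top) for l in logits]  # numerically stable softmax
    total = sum(exps)
    return [e / total for e in exps]

def mh_step(x, score, grad_fn, rng):
    """One Metropolis-Hastings step that flips at most one bit."""
    forward = flip_proposal(x, grad_fn(x))
    i = rng.choices(range(len(x)), weights=forward)[0]
    x_new = list(x)
    x_new[i] = 1 - x_new[i]
    # The reverse move flips the same bit back, so we need q(i | x_new).
    reverse = flip_proposal(x_new, grad_fn(x_new))
    log_accept = (score(x_new) - score(x)
                  + math.log(reverse[i]) - math.log(forward[i]))
    if rng.random() < math.exp(min(0.0, log_accept)):
        return x_new
    return x

# Hypothetical toy log-score: linear in the bits, so the gradient is constant.
WEIGHTS = [2.0, -1.0, 0.5, -3.0]

def score(x):
    return sum(w * xi for w, xi in zip(WEIGHTS, x))

def grad_fn(x):
    return list(WEIGHTS)

rng = random.Random(0)
x = [0, 1, 0, 1]
for _ in range(200):
    x = mh_step(x, score, grad_fn, rng)
```

The point of the gradient is that the sampler spends its proposals on flips likely to raise the score instead of picking positions uniformly at random.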

Combining multiple models in sequence space is straightforward if we treat each as one expert in a product of experts, as in energy-based models.

"But isn't directed evolution just doing brute force or random search for mutations?" 🤔

2/
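A minimal sketch of the product-of-experts idea, assuming each expert is a function that returns an unnormalized log-score for a sequence. Multiplying expert densities is the same as summing their log-scores (negative energies). The two toy experts below are hypothetical illustrations, not the protein models from the paper.

```python
def product_of_experts_log_score(sequence, experts, weights=None):
    """Unnormalized log-score of `sequence` under a product of experts."""
    if weights is None:
        weights = [1.0] * len(experts)
    # A product of densities becomes a (weighted) sum in log space.
    return sum(w * expert(sequence) for w, expert in zip(weights, experts))

# Hypothetical toy experts over amino-acid strings (illustration only).
def hydrophobic_expert(seq):
    # Rewards each hydrophobic residue.
    return sum(1.0 for aa in seq if aa in "AILMFVW")

def length_expert(seq, target=8):
    # Penalizes deviation from a target length.
    return -abs(len(seq) - target)

experts = [hydrophobic_expert, length_expert]
candidates = ["AILMFVWG", "GGGGGGGG", "AILMFVWAILMFGG"]
best = max(candidates,
           key=lambda s: product_of_experts_log_score(s, experts))
```

A candidate only scores well if every expert is reasonably happy with it, which is what makes the combination useful for steering a search.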

New pre-print!

Plug and play generation works for images and text…what about proteins?

We engineer proteins by combining your favorite unsupervised and supervised protein sequence models (even protein language models!) in a fast *gradient-based* discrete MCMC sampler.

🧵

"In fact, CLIP's zero-shot classification accuracy drops from 76.2 to 31.3 when using a public dataset of 'just' 15M pairs...as opposed to the original private dataset of 400M pairs". -- https://arxiv.org/abs/2210.01738

I think there's more to this than just "more data, good, less data, bad" but still, quite shocking

ASIF: Coupled Data Turns Unimodal Models to Multimodal Without Training

CLIP proved that aligning visual and language spaces is key to solving many vision tasks without explicit training, but required to train image and text encoders from scratch on a huge dataset. LiT improved this by only training the text encoder and using a pre-trained vision network. In this paper, we show that a common space can be created without any training at all, using single-domain encoders (trained with or without supervision) and a much smaller amount of image-text pairs. Furthermore, our model has unique properties. Most notably, deploying a new version with updated training samples can be done in a matter of seconds. Additionally, the representations in the common space are easily interpretable as every dimension corresponds to the similarity of the input to a unique image-text pair in the multimodal dataset. Experiments on standard zero-shot visual benchmarks demonstrate the typical transfer ability of image-text models. Overall, our method represents a simple yet surprisingly strong baseline for foundation multimodal models, raising important questions on their data efficiency and on the role of retrieval in machine learning.
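A rough sketch of the idea in the abstract above, assuming two unrelated encoders and a small set of paired image-text anchors: each input is described by its similarities to the anchors, so images and captions end up in one comparable space without training. This is a simplified illustration that omits any sparsification or other post-processing the paper may apply; all names and the toy embeddings are hypothetical.

```python
import math

def cosine(u, v):
    """Cosine similarity between two non-zero vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)

def relative_representation(embedding, anchors):
    """One coordinate per anchor: similarity of the input to that anchor."""
    return [cosine(embedding, anchor) for anchor in anchors]

def zero_shot_classify(image_emb, caption_embs, anchor_images, anchor_texts):
    """Return the index of the caption that best matches the image."""
    # Anchor i in image space and anchor i in text space come from the
    # same image-text pair, so the coordinates of both representations align.
    image_rel = relative_representation(image_emb, anchor_images)
    scores = [
        cosine(image_rel, relative_representation(caption, anchor_texts))
        for caption in caption_embs
    ]
    return max(range(len(scores)), key=scores.__getitem__)

# Toy data: a 2-D "image encoder" space and a 3-D "text encoder" space.
anchor_images = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
anchor_texts = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]
captions = [[1.0, 0.1, 0.0], [0.1, 1.0, 0.0]]

predicted = zero_shot_classify([0.9, 0.1], captions,
                               anchor_images, anchor_texts)
```

Because the anchors are just stored pairs, swapping in an updated anchor set changes the model without retraining, and each coordinate can be read as "how similar is this input to pair i".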

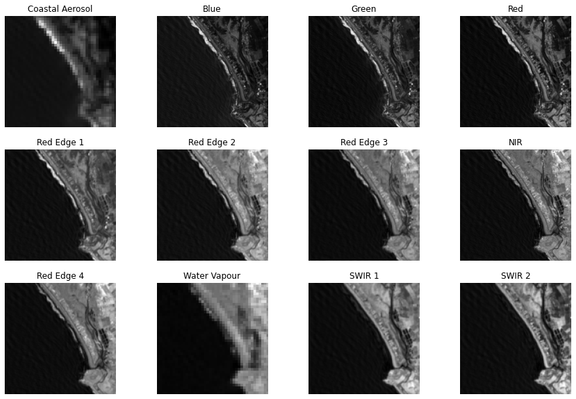

Going from computer vision to remote sensing, the first thing that strikes you is there are more than 3 bands/channels. The pixel values are not between 0 and 255! Madness!

Some of these wavelengths are not visible to the human eye. Yet, we can still use them to train ML models.

I'm using all 12 bands to train a U-Net used to detect the coastline. Which bands do you think would be most important to the prediction?

#ComputerVision #RemoteSensing #MachineLearning #DeepLearning #DataScience
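Since the pixel values are not in the 0 to 255 range, a common first step before training is to rescale each band independently. Below is a minimal sketch assuming the image is stored as a list of bands, each a 2-D list of raw values. Plain Python lists stand in for a real array library, and the percentile cut-offs are arbitrary illustrative defaults, not values from the post.

```python
def percentile(sorted_values, pct):
    """Nearest-rank style percentile of an already sorted list."""
    index = round(pct / 100.0 * (len(sorted_values) - 1))
    return sorted_values[index]

def scale_band(band, low_pct=2.0, high_pct=98.0):
    """Rescale one band to [0, 1], clipping values outside the percentiles."""
    values = sorted(v for row in band for v in row)
    low = percentile(values, low_pct)
    high = percentile(values, high_pct)
    span = (high - low) or 1.0  # avoid dividing by zero on a constant band
    return [
        [min(1.0, max(0.0, (v - low) / span)) for v in row]
        for row in band
    ]

def normalize_image(bands):
    """Apply per-band scaling so every channel shares the same range."""
    return [scale_band(band) for band in bands]

# Toy 12-band image with 2x2 pixels; raw values differ wildly per band.
raw = [[[0, 5000 * (b + 1)], [10000 * (b + 1), 2500 * (b + 1)]]
       for b in range(12)]
scaled = normalize_image(raw)
```

Scaling per band keeps a band with a large raw range from dominating the others when all 12 are stacked as input channels.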