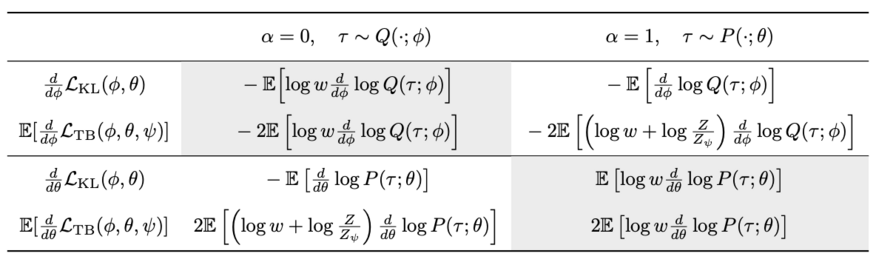

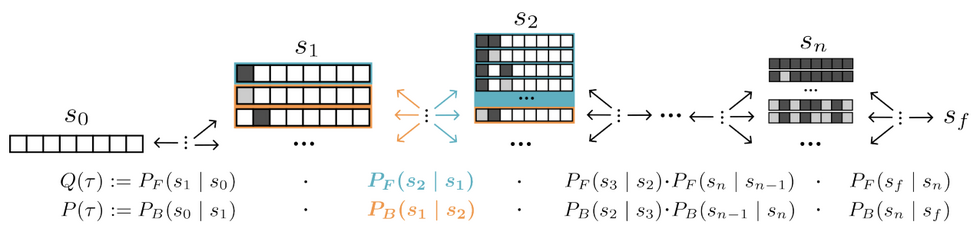

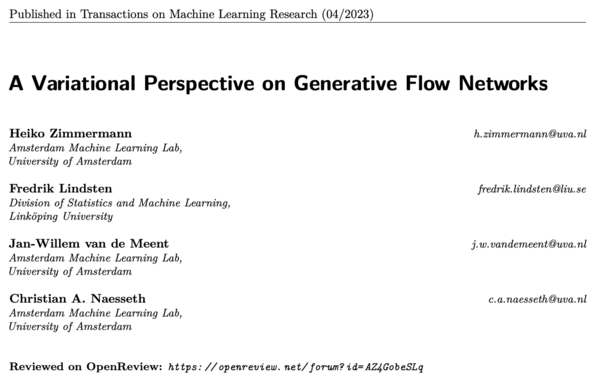

New work by Heiko, Fredrik, Jan-Willem, and me interpreting Generative Flow Networks (GFNs) as generative models trained by variational inference!

| Profile | Link |
| --- | --- |
| Website | https://naesseth.github.io/ |
| Scholar | https://scholar.google.com/citations?user=GQ6rOssAAAAJ |
| Twitter | https://twitter.com/chris_naesseth |