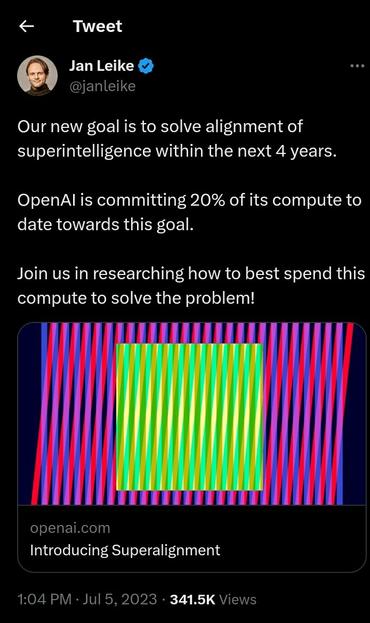

So very many questions, really:

- define "smarter than us"

- define "human intent" in a completely general way covering all possible situations

- what if the eventual #SuperAI is "smarter" than your #Alignment Researcher?

- does alignment mean the system does exactly whatever the human intent is?

What if the intent is bad? Some humans have bad intents. In fact, all humans have bad intents sometimes, whether they realize it or not.

In the end, humans need to be responsible for things we make.