| Field | Value |
| --- | --- |
| GitHub | https://github.com/MaddyUnderStars |
| Website | https://understars.dev |
| Pronouns | she/her |

Maddy

- 139 Followers

- 280 Following

- 3.1K Posts

All the devs saying that Anthropic’s code quality is “normal” are telling on themselves and everybody they’ve worked with

(Also supports what many have been saying about software quality being a crisis that precedes LLMs, but that’s another story)

continuing thoughts in: https://neuromatch.social/@jonny/116328409651740378

one thing that is clear from reading a lot of LLM code - and this is obvious from the nature of the models and their application - is that it is big on the form of what it loves to call "architecture" even if in toto it makes no fucking sense.

So here you have some accessor function isPDFExtension that checks if some string is a member of the set DOCUMENT_EXTENSIONS (which is a constant with a single member "pdf"). That is an extremely reasonable pattern: you have a bunch of disjoint sets of different kinds of extensions - binary extensions, image extensions, etc. and then you can do set operations like unions and differences and intersections and whatnot to create a bunch of derived functions that can handle dynamic operations that you couldn't do well with a bunch of consts. then just make the functional form the standard calling pattern (and even make a top-level wrapper like getFileType) and you have the oft fabled "abstraction." that's a reasonable ass system that provides a stable calling surface and a stable declaration surface. hell it would probably even help the LLM code if it was already in place because it's a predictable rules-based system.
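A minimal TypeScript sketch of the set-based design described above, for readers who want to see it concretely. Only `isPDFExtension`, `DOCUMENT_EXTENSIONS` (with its single member `"pdf"`) and `getFileType` come from the post; the other sets, their contents, `isNonText`, and the `FileType` union are hypothetical illustrations, not anything from the Claude Code source.

```typescript
// Disjoint declaration surface: each kind of extension lives in exactly one set.
// Only DOCUMENT_EXTENSIONS = {"pdf"} comes from the post; the others are hypothetical.
const DOCUMENT_EXTENSIONS: ReadonlySet<string> = new Set(["pdf"]);
const IMAGE_EXTENSIONS: ReadonlySet<string> = new Set(["png", "jpg", "jpeg", "gif"]);
const BINARY_EXTENSIONS: ReadonlySet<string> = new Set(["exe", "dll", "so", "zip"]);

// Set union, used to build derived sets instead of new hand-written constants.
function union<T>(...sets: ReadonlySet<T>[]): Set<T> {
  const out = new Set<T>();
  sets.forEach((s) => s.forEach((x) => out.add(x)));
  return out;
}

// Hypothetical derived set: everything that is not treated as plain text.
const NON_TEXT_EXTENSIONS: ReadonlySet<string> = union(
  DOCUMENT_EXTENSIONS,
  IMAGE_EXTENSIONS,
  BINARY_EXTENSIONS,
);

// Normalization lives in one place: drop a leading dot, lowercase.
function normalizeExt(ext: string): string {
  return ext.replace(/^\./, "").toLowerCase();
}

// Extract the extension from the last path segment ("" if there is none).
function extensionOf(path: string): string {
  const base = path.slice(path.lastIndexOf("/") + 1);
  const dot = base.lastIndexOf(".");
  return dot <= 0 ? "" : normalizeExt(base.slice(dot + 1));
}

// Stable calling surface: small predicates over the declared sets...
const isPDFExtension = (ext: string): boolean => DOCUMENT_EXTENSIONS.has(normalizeExt(ext));
const isNonText = (path: string): boolean => NON_TEXT_EXTENSIONS.has(extensionOf(path));

// ...and one top-level wrapper built on the same sets.
type FileType = "document" | "image" | "binary" | "text";

function getFileType(path: string): FileType {
  const ext = extensionOf(path);
  if (DOCUMENT_EXTENSIONS.has(ext)) return "document";
  if (IMAGE_EXTENSIONS.has(ext)) return "image";
  if (BINARY_EXTENSIONS.has(ext)) return "binary";
  return "text";
}
```

Because `getFileType` and `isNonText` both read from the same declared sets, adding an extension to one set changes every derived answer at once instead of in one of several copies.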

but what the LLMs do is in one narrow slice of time implement the "is member of set {pdf}" version robustly one time, and then they implement the regex pattern version flexibly another time, and then they implement the any str.endswith() version modularly another time, and so on. Of course usually in-place, and different file naming patterns are part of the architecture when it's feeling a little too spicy to stay in place.
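A small TypeScript illustration of why the "same check, implemented three ways" pattern hurts: each version below looks plausible in isolation, but they disagree on ordinary inputs. All three function names and their exact logic are hypothetical, written in the style the post describes (set membership, regex, bare suffix check), not copied from any real codebase.

```typescript
// Version 1: set membership on whatever follows the last dot (case-sensitive).
const PDF_SET = new Set(["pdf"]);
function isPdfBySet(path: string): boolean {
  const ext = path.split(".").pop() ?? "";
  return PDF_SET.has(ext);
}

// Version 2: a case-insensitive regex that requires a literal ".pdf" suffix.
function isPdfByRegex(path: string): boolean {
  return /\.pdf$/i.test(path);
}

// Version 3: a bare suffix check that forgets the dot (case-sensitive).
function isPdfBySuffix(path: string): boolean {
  return path.endsWith("pdf");
}

// Ask all three the same question: [set, regex, suffix].
function verdicts(path: string): boolean[] {
  return [isPdfBySet(path), isPdfByRegex(path), isPdfBySuffix(path)];
}
```

`verdicts("report.pdf")` is `[true, true, true]`, but `verdicts("REPORT.PDF")` is `[false, true, false]`, `verdicts("notes.mypdf")` is `[false, false, true]`, and a file literally named `pdf` gives `[true, false, true]`. Which answer a caller gets depends on which copy they happened to hit.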

This is an important feature of the gambling addiction formulation of these tools: only the margin matters, the last generation. it carefully regulates what it shows you to create a space of potential reward and closes the gap. It's episodic TV, gameshows for code: someone wins every week, but we get cycles in cycles of seeming progression that always leave one stone conspicuously unturned. The intermediate comments from the LLM where it discovers prior structure and boldly decides to forge ahead brand new are also part of the reward cycle: we are going up, forever. cleaning up after ourselves is down there.

Tech debt is when you have banked a lot of story hours and are finally due for a big cathartic shift and set the LLM loose for "the big cleanup." this is also very similar to the tools that scam mobile games use (for those who don't know me, i spent roughly six months with daily scheduled (carefully titrated lmao) time playing the worst scam mobile chum games i could find to try and experience what the grip of that addiction is like without uh losing a bunch of money).

Unlike slot machines or table games, which have a story horizon limited by how long you can sit in the same place, mobile games can establish a space of play that's broader and more continuous. so they always combine several Shepard tone reward ladders at once - you have hit the session-length intermittent reward cap in the arena modality which gets you coins, so you need to go "recharge" by playing the versus modality which gets you gems. (Typically these are also mixed - one modality gets you some proportion of resource x, y, z, another gets you a different proportion, and those are usually unstable).

Of course it doesn't fucking matter what the modality is. they are all the same. in the scam mobile games sometimes this is literally the case, where if you decompile them, they have different menu wrappings that all direct into the same scene. you're still playing the game, that's all that matters. The goal of the game design is to chain together several time cycles so that you can win->lose in one, win->lose in another... and then by the time you have made the rounds you come back to the first and you are refreshed and it's new. So you have momentary mana wheels, daily earnings caps, weekly competitions, seasonal storylines, and all-time leaderboards.

That's exactly the cycle that programming with LLMs taps into. You have momentary issues, and daily project boards, and weekly sprints, and all-time star counts, and so on. Accumulate tech debt with new features, release it with "cleanup," transition to "security audit." Each is actually the same, but they present themselves as the continuation of and solution to the others. That overlaps with the token limitations, and the Claude Code source is actually littered with helpful panic nudges letting you know that you're reaching another threshold. The difference is that in true gambling the limit is purely artificial - the coins are an integer in some database. with LLMs the limitation is physical - compute costs fucking money baby. but so is the reward. it's the same in the game, and the whales come around one way or another.

A series of flashing lights and pictures, set membership, regex, green checks, the feeling of going very fast but never making it anywhere. except in code you do make it somewhere, it's just that the horizon falls away behind you and the places you were before disappear. and sooner or later only anthropic can really afford to keep the agents running 24/7 tending to the slop heap - the house always wins.

So the reason that Claude Code is capable of outputting valid JSON is that if the prompt text suggests it should be JSON, it enters a special loop in the main query engine that just validates it against a JSON schema (it looks like the schema just validates that something is in fact an object and its keys are strings) and then feeds the data with the error message back into itself until it is valid JSON or a retry limit is reached.
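A rough TypeScript sketch of the validate-and-retry loop as described above, to make the control flow explicit. The `Model` type, `validate`, `forceJson`, the feedback prompt wording, and the retry parameter are all hypothetical stand-ins based only on the post's reading of the code, not Claude Code's actual implementation. The "schema" here mirrors the post: the output must parse and be a JSON object (once parsed, object keys are always strings).

```typescript
// Hypothetical model interface: takes a prompt, returns raw text.
type Model = (prompt: string) => string;

type Result =
  | { ok: true; value: Record<string, unknown>; attempts: number }
  | { ok: false; error: string; attempts: number };

// The minimal "schema" described in the post: parseable JSON that is an object.
function validate(raw: string): { value?: Record<string, unknown>; error?: string } {
  let parsed: unknown;
  try {
    parsed = JSON.parse(raw);
  } catch (e) {
    return { error: `not valid JSON: ${(e as Error).message}` };
  }
  if (typeof parsed !== "object" || parsed === null || Array.isArray(parsed)) {
    return { error: "expected a JSON object" };
  }
  return { value: parsed as Record<string, unknown> };
}

// Call the model, validate, and on failure feed the bad output plus the error
// back in, until it validates or 1 + maxRetries attempts have been used.
function forceJson(model: Model, prompt: string, maxRetries: number): Result {
  let currentPrompt = prompt;
  let lastError = "";
  for (let attempt = 1; attempt <= maxRetries + 1; attempt++) {
    const raw = model(currentPrompt);
    const { value, error } = validate(raw);
    if (value !== undefined) return { ok: true, value, attempts: attempt };
    lastError = error ?? "unknown error";
    currentPrompt = `${prompt}\n\nPrevious output:\n${raw}\n\nError: ${lastError}\nReturn valid JSON.`;
  }
  return { ok: false, error: lastError, attempts: maxRetries + 1 };
}
```

Every failed attempt is another full model call, with a longer prompt each time.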

This code is so eye-wateringly spaghetti that I am still trying to confirm whether this is true, but this seems to be how it not only returns JSON to the user but also handles all LLM-to-JSON conversion, including internal output from its tools. There appears to be an unconditional hook where, if the JSON output tool is present in the session config at all, every tool call must be followed by the "force into JSON" loop.

If that's true, that's just mind-blowingly expensive
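A back-of-envelope TypeScript sketch of why an unconditional post-tool JSON loop would be expensive. The cost model is entirely hypothetical, not measured from Claude Code: assume a turn makes `toolCalls` model calls that each emit one tool call, and that each tool call is then followed by the forced-JSON loop, which costs one extra model call if the output validates immediately and up to `1 + maxRetries` if every retry is used.

```typescript
// Hypothetical cost model: counts model calls per turn, with and without
// a forced-JSON loop after every tool call.
function modelCalls(toolCalls: number, maxRetries: number) {
  const withoutHook = toolCalls; // one model call per tool call
  const best = withoutHook + toolCalls * 1; // loop validates on the first pass
  const worst = withoutHook + toolCalls * (1 + maxRetries); // loop uses every retry
  return { withoutHook, best, worst };
}
```

Under these assumptions, `modelCalls(10, 3)` gives `{ withoutHook: 10, best: 20, worst: 50 }`: even the best case doubles the number of model calls.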

edit: please note that unless I say otherwise all evaluations here are just from my skimming through the code on my phone and have not been validated in any way that should cause you to be upset with me for impugning the good name of Anthropic

edit2: this is both much worse and not as bad as i thought on first read - https://neuromatch.social/@jonny/116326861737478342

jonny (good kind) (@jonny@neuromatch.social)

Attached: 3 images

OK i can't focus on work and keep looking at this repo. So after every "subagent" runs, Claude Code creates *another* "agent" to check on whether the first "agent" did the thing it was supposed to. I don't know about you but i smell a bit of a problem: if you can't trust whether one "agent" with a very big fancy model did something, how in the fuck are you supposed to trust another "agent" running on the smallest crappiest model? That's not the funny part, that's obvious and fundamental to the entire show here. HOWEVER RECALL [the above JSON Schema Verification thing](https://neuromatch.social/@jonny/116325123136895805) that is unconditionally added onto the end of every round of LLM calls. the mechanism for adding that hook is... JUST FUCKING ASKING THE MODEL TO CALL THAT TOOL. second pic is registering a hook s.t. "after some stop state happens, if there isn't a message indicating that we have successfully called the JSON validation thing, prompt the model saying 'you must call the json validation thing.'" This shit sucks so bad they can't even ***CALL THEIR OWN CODE FROM INSIDE THEIR OWN CODE.*** Look at the comment on pic 3 - "e.g. agent finished without calling structured output tool" - that's common enough that they have a whole goddamn error category for it, and the way it's handled is by just pretending the job was cancelled and nothing happened.
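A TypeScript sketch of the stop-hook pattern as jonny describes it from the screenshots: on a stop state, the hook scans the transcript for a call to the structured-output tool, re-prompts the model to call it if it is missing, and eventually reports the failure as if the job were cancelled. Every type, name, and the re-prompt limit here are hypothetical; this is a reconstruction from the post's description only, not the real hook.

```typescript
// Hypothetical message shape; not Claude Code's real types.
type Message = { role: "assistant" | "user"; toolName?: string; text?: string };

// Hypothetical tool name for illustration.
const STRUCTURED_OUTPUT_TOOL = "structured_output";

type StopOutcome =
  | { kind: "done" }
  | { kind: "reprompt"; message: Message }
  | { kind: "cancelled"; reason: string };

// Runs when the agent reaches a stop state. Instead of calling the validator
// directly, it checks whether the model called the tool and, if not, asks again.
function onStop(transcript: Message[], reprompts: number, maxReprompts: number): StopOutcome {
  const called = transcript.some(
    (m) => m.role === "assistant" && m.toolName === STRUCTURED_OUTPUT_TOOL,
  );
  if (called) return { kind: "done" };
  if (reprompts < maxReprompts) {
    return {
      kind: "reprompt",
      message: { role: "user", text: `You must call the ${STRUCTURED_OUTPUT_TOOL} tool.` },
    };
  }
  // Out of re-prompts: surface it as a cancellation rather than a failure.
  return { kind: "cancelled", reason: "agent finished without calling structured output tool" };
}
```

The point of contention is visible in the shape: the only way the validator runs is if the model chooses to call it.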

Rather than the same old boring internet pranks, I thought I'd build something more fun this April Fools.

CSS or BS. Can you tell your CSS property names from BS?

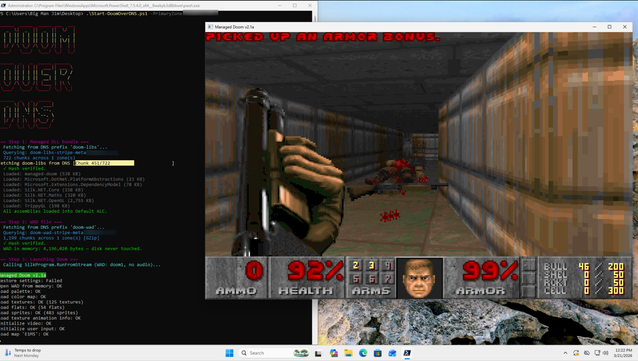

In today's episode of "Can It Run Doom": DNS fucking TXT records.

Some absolute madlad (cough Adam Rice cough) compressed the entire shareware DOOM WAD, split it into around 1,964 chunks, shoved them into Cloudflare TXT records, and wrote a PowerShell script that reassembles and runs the whole goddamn game from DNS queries alone. Nothing touches disk. The DLLs are in DNS. THE FUCKING DLLS ARE IN DNS.
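Why thousands of chunks: a single DNS `<character-string>` (the unit TXT record data is built from) has a one-octet length prefix per RFC 1035, so each string holds at most 255 bytes. A minimal TypeScript sketch of the chunk-and-reassemble idea, assuming an ASCII payload (for example, already base64-encoded) and a hypothetical `<n>.<zone>` naming scheme; this is not the repo's actual scheme, it omits compression, and `lookup` stands in for a real TXT query.

```typescript
// Max length of one DNS <character-string> (RFC 1035: one-octet length prefix).
const MAX_TXT_STRING = 255;

// Split an ASCII payload into TXT-sized pieces keyed by hypothetical names
// "0.<zone>", "1.<zone>", ... (ASCII, so characters == bytes).
function toTxtRecords(payload: string, zone: string): Map<string, string> {
  const records = new Map<string, string>();
  for (let i = 0, n = 0; i < payload.length; i += MAX_TXT_STRING, n++) {
    records.set(`${n}.${zone}`, payload.slice(i, i + MAX_TXT_STRING));
  }
  return records;
}

// Reassemble by looking up chunk 0, 1, 2, ... until a name is missing.
function fromTxtRecords(lookup: (name: string) => string | undefined, zone: string): string {
  const parts: string[] = [];
  for (let n = 0; ; n++) {
    const chunk = lookup(`${n}.${zone}`);
    if (chunk === undefined) break;
    parts.push(chunk);
  }
  return parts.join("");
}
```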

RFC 1035 was written in 1987. Those engineers are spinning in their graves fast enough to generate municipal power.

Bonus: this is a fully functional globally-distributed covert data exfil channel that your NGFW will never fucking see if you're not doing deep DNS inspection. Sleep well.

blog: https://blog.rice.is/post/doom-over-dns/

repo: https://github.com/resumex/doom-over-dns

Also lmao @ every blue team that has never once looked at their DNS query volume. How's that DLP policy working out for you?
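A crude TypeScript sketch of the most basic version of "looking at DNS query volume": count TXT queries per client and parent domain across a log and flag pairs above a threshold. The log entry shape, the naive last-two-labels parent-domain rule (which mishandles suffixes like `co.uk`), and the threshold are all assumptions for illustration, far short of real DNS inspection.

```typescript
// Hypothetical shape of one parsed DNS query log entry.
type DnsQuery = { client: string; name: string; type: string };
type Flag = { client: string; domain: string; count: number };

// Naive parent domain: the last two labels, ignoring a trailing root dot.
function parentDomain(name: string): string {
  return name.replace(/\.$/, "").split(".").slice(-2).join(".");
}

// Flag (client, parent domain) pairs with more TXT queries than `threshold`.
function flagTxtVolume(log: DnsQuery[], threshold: number): Flag[] {
  const counts = new Map<string, Flag>();
  for (const q of log) {
    if (q.type !== "TXT") continue;
    const domain = parentDomain(q.name);
    const key = `${q.client}|${domain}`;
    const entry = counts.get(key) ?? { client: q.client, domain, count: 0 };
    entry.count++;
    counts.set(key, entry);
  }
  const flagged: Flag[] = [];
  counts.forEach((entry) => {
    if (entry.count > threshold) flagged.push(entry);
  });
  return flagged;
}
```

A client pulling 1,964 sequential TXT records from one zone would stand out immediately under even this naive count.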

It was always DNS.

RE: https://mastodon.social/@mdreid/116292374264941891

the reason so many trans people are in software is because they are predisposed for success: in the most vulnerable time of their lives they have to put up with naming things and cache invalidation

RE: https://mamot.fr/users/Khrys/statuses/116286245381869095