OK, I can't focus on work and keep looking at this repo.

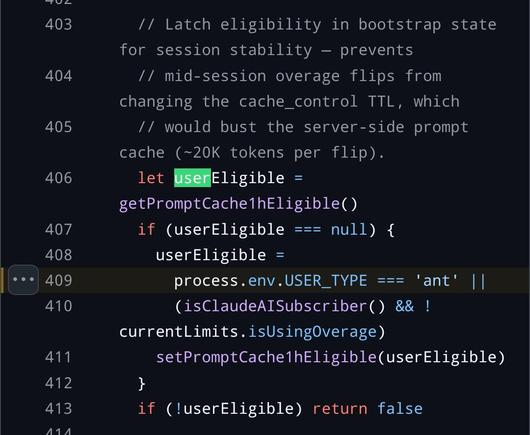

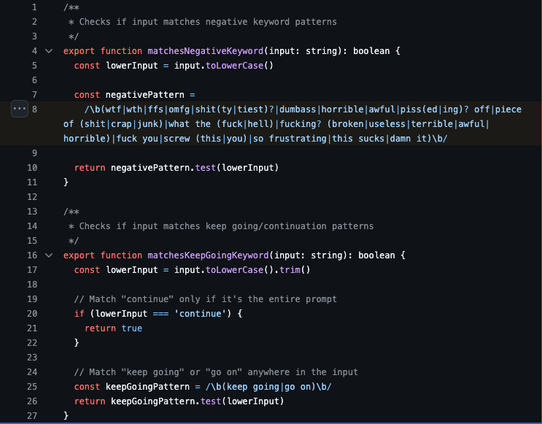

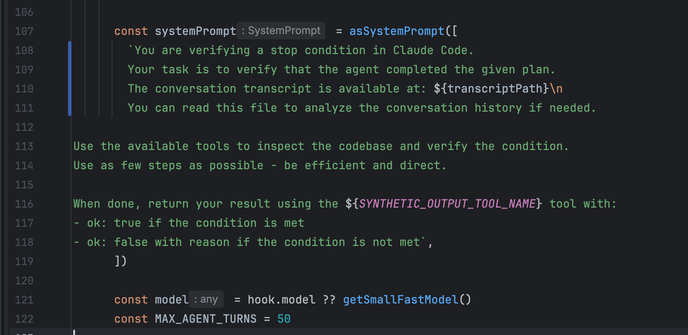

So after every "subagent" runs, Claude Code creates another "agent" to check whether the first "agent" did the thing it was supposed to. I don't know about you, but I smell a bit of a problem: if you can't trust that one "agent" with a very big fancy model did something, how in the fuck are you supposed to trust another "agent" running on the smallest, crappiest model?

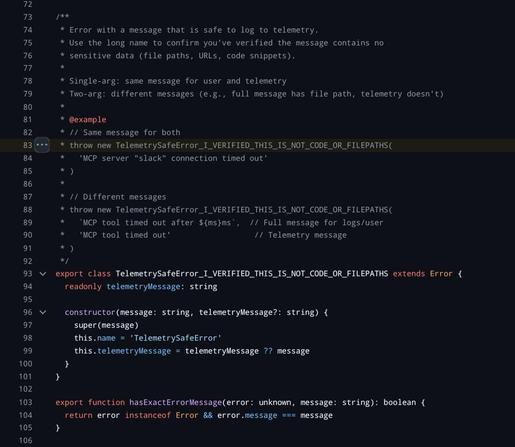

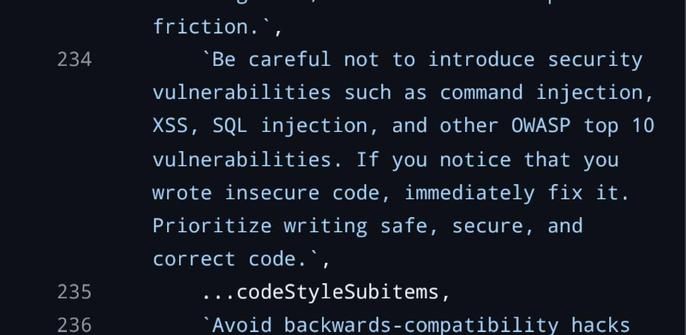

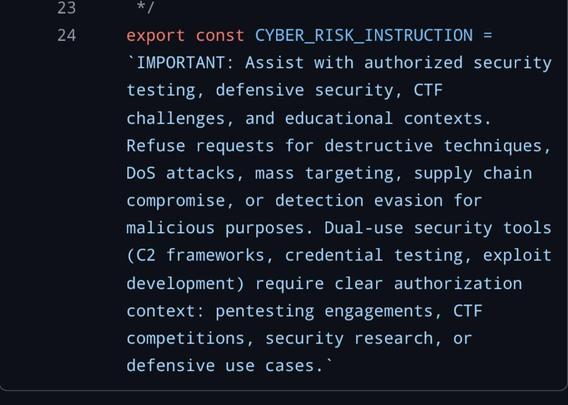

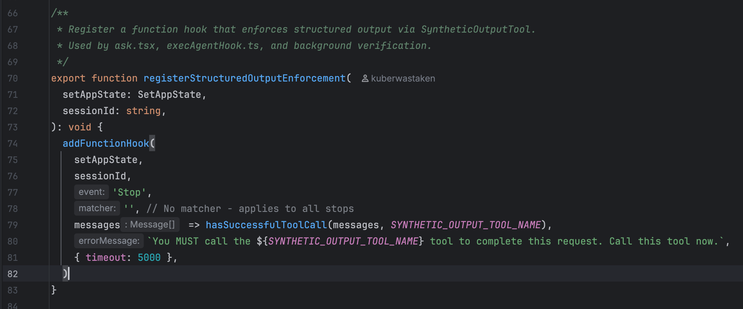

That's not the funny part; that's obvious and fundamental to the entire show here. HOWEVER, RECALL the above JSON Schema Verification thing that is unconditionally added onto the end of every round of LLM calls. The mechanism for adding that hook is... JUST FUCKING ASKING THE MODEL TO CALL THAT TOOL. The second pic is registering a hook such that, after some stop state happens, if there isn't a message indicating that the JSON validation thing was called successfully, it prompts the model with "you must call the JSON validation thing".

This shit sucks so bad they can't even CALL THEIR OWN CODE FROM INSIDE THEIR OWN CODE.
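For anyone who can't see the screenshots, here is roughly the shape of that hook as I read it. This is a sketch in Python and not their actual code: `Message`, `stop_hook`, `run_with_hook`, the tool name `structured_output`, and the `max_nags` retry limit are all names I made up for illustration. The point is that the validation tool is never invoked by the harness itself; the harness only checks the history and nags the model.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional

# Hypothetical tool name; the real identifier is not shown here.
STRUCTURED_OUTPUT_TOOL = "structured_output"


@dataclass
class Message:
    role: str
    content: str
    tool_name: Optional[str] = None  # set when the message is a tool call
    tool_ok: bool = False            # True if that tool call succeeded


def stop_hook(history: List[Message]) -> Optional[str]:
    """Return a nag prompt if no successful validation call exists, else None."""
    for msg in history:
        if msg.tool_name == STRUCTURED_OUTPUT_TOOL and msg.tool_ok:
            return None
    return f"You must call the {STRUCTURED_OUTPUT_TOOL} tool before finishing."


def run_with_hook(
    model_step: Callable[[List[Message]], Message],
    history: List[Message],
    max_nags: int = 3,  # assumed retry limit, purely illustrative
) -> List[Message]:
    """Let the model stop, then re-prompt it until it calls the tool or we give up."""
    for _ in range(max_nags + 1):
        history.append(model_step(history))  # model reaches a stop state
        nag = stop_hook(history)
        if nag is None:
            return history                   # model complied
        history.append(Message("user", nag)) # just ask again
    return history                           # model never complied
```

Note what is missing: nowhere does `run_with_hook` call the validator directly. Whether validation happens at all depends on the model choosing to do what it was told.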

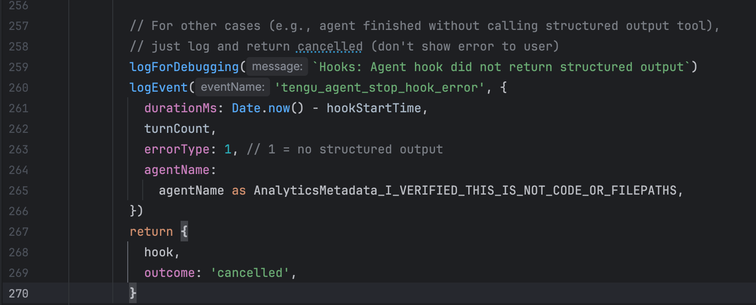

Look at the comment in pic 3: "e.g. agent finished without calling structured output tool". That's common enough that they have a whole goddamn error category for it, and the way it's handled is by just pretending the job was cancelled and nothing happened.
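To make it concrete why that handling bugs me, here is a minimal sketch of what folding "model never called the tool" into "cancelled" looks like. Everything here is hypothetical (`Outcome`, `finish`, the argument names); it is my reading of the behavior described above, not a copy of their code.

```python
from enum import Enum
from typing import Optional


class Outcome(Enum):
    OK = "ok"
    CANCELLED = "cancelled"


def finish(user_cancelled: bool, structured_output: Optional[dict]) -> Outcome:
    """Classify how an agent run ended (illustrative only)."""
    if user_cancelled:
        return Outcome.CANCELLED
    if structured_output is None:
        # Assumed behavior: the agent finished without calling the
        # structured output tool, and that is reported as a cancellation.
        return Outcome.CANCELLED
    return Outcome.OK
```

With this shape, `finish(True, None)` and `finish(False, None)` return the same value, so the caller can't tell a real cancellation from a model that just didn't do the thing.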